In early 2023, the internet couldn’t stop laughing at a bizarre AI-generated clip of Will Smith awkwardly eating spaghetti. The video looked strange, glitchy, and obviously fake. It became a running joke, and an informal benchmark developers used to show how fast generative AI was improving.

Just two years later, those same experiments look almost indistinguishable from real footage.

For businesses, that progress has a serious downside. Criminals are adopting the technology just as quickly. And the numbers show how fast the threat is growing. Deepfake fraud attempts have increased by more than 2,000% in the past three years, and in some sectors they now account for about 6.5% of all fraud attacks.

HyperVerge data from February 2026 shows how serious the problem has become. In a single week of onboarding telemetry, 1 in every 72 users was found to be committing fraud via automated, repeatable injection attacks. These weren’t amateur attempts. They were sophisticated deepfakes designed to bypass liveness checks at scale, representing a 1.38% confirmed fraud rate across tens of thousands of interactions.

For modern enterprises, this shows that deepfakes are a high-frequency, industrial-scale operational threat. In this guide, we’ll explain what deepfakes are and how they work, why attackers use them, and how businesses can detect and prevent them.

TL;DR

- Deepfakes now operate at a massive scale, and AI can generate faces, voices, and videos realistic enough to fool both people and traditional verification systems.

- As this technology evolves, fraudsters increasingly target businesses through onboarding, payments, hiring, and even executive communication. They use generative AI technologies such as GANs, autoencoders, and diffusion models to create these convincing deepfakes.

- In many cases, fraud rings now deploy them during live video calls, which creates serious risks for KYC checks, payment approvals, and remote verification processes.

- To defend against these threats, organizations must implement robust identity verification, certified liveness detection, employee training, and clear incident-response planning.

- Modern detection systems, such as AI-driven passive liveness and deepfake detection platforms like HyperVerge, can identify and stop these attacks within seconds.

What Is a Deepfake?

A deepfake is AI-generated or AI-manipulated media that makes it appear as if a person said or did something that never actually happened.

The term combines “deep learning” and “fake.” It emerged around 2017 when online communities began sharing videos created using machine learning models that could realistically swap faces or replicate voices.

Compared to traditional photo editing, deepfakes rely on advanced AI systems that learn patterns from large datasets of images, videos, or audio. These models can then generate new content that closely imitates a real person’s appearance or speech.

What began as an experimental internet trend has quickly evolved into a serious cybersecurity concern. As generative AI tools become more accessible, deepfakes are increasingly used in fraud, identity impersonation, misinformation campaigns, and social engineering attacks.

📍For a deeper breakdown of examples, risks, and real-world use cases, see our full guide on what a deepfake is.

Deepfake vs. Shallowfake: What’s the Difference?

Not all media altered to deceive is created the same way. Some fakes come from complex AI systems, and others are made with simple editing tools. The first type is called a deepfake, and the second is called a shallowfake.

A deepfake uses machine learning (ML) and deep learning models to generate or manipulate photos, videos, or audio so it looks like a real person said or did something they never did. A shallowfake changes real media using basic tools like cutting, cropping, or splicing clips without any AI.

However, both can be misleading. The key difference is the technology used to create them.

| Feature | Deepfakes | Shallowfakes |
| --- | --- | --- |
| How they are created | Generated or manipulated using AI and deep learning models | Edited using basic video, image, or audio editing tools |
| Level of realism | Often highly realistic and difficult to detect | Usually easier to identify with careful review |
| Technical skill required | Requires AI tools and training data | Requires basic editing software |
| Common examples | Face swaps, AI-generated speech, and synthetic video | Cropped clips, misleading edits, altered captions |

Shallowfakes and deepfakes call for different detection methods. Shallowfakes are usually easy to spot with simple checks like verifying context, running a reverse image search, or tracking down the original source. Deepfakes are harder because the content is generated or manipulated by AI and may not show any obvious edits.

For businesses, this difference is critical when choosing fraud prevention tools. Traditional verification methods can detect simple manipulations, but AI-generated deepfakes require more advanced techniques such as liveness checks, behavioral analysis, and AI-driven media forensics.

How are Deepfakes Created?

Attackers rely on several AI techniques to create deepfake media. Each method uses machine learning models that learn patterns from large datasets.

Here’s a fast rundown before we get into the details:

| Technology | How It Works | Typical Use |
| --- | --- | --- |
| GAN | Two networks train against each other to create and evaluate images | Early deepfake visuals |
| Autoencoder | Learns to compress and rebuild faces for swapping | Face swap deepfakes |
| Diffusion Model | Converts noise to structured imagery through iterative refinement | Higher‑quality deepfakes |
| Voice Clone | Learns speech patterns and generates new audio | Deepfake audio |

With a rough idea of how deepfakes are made, we can now dive deeper into each technique:

Generative adversarial networks (GANs)

One of the earliest ways to generate synthetic media was with generative adversarial networks. In a GAN, two neural networks are trained against each other: a generator that creates content and a discriminator that challenges it.

One network creates fake images or frames, and the other network tries to tell if they are real. Over many rounds of training, the creator gets better, and the evaluator gets sharper. This back‑and‑forth makes the results look more convincing with each cycle.
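To make that tug-of-war concrete, here is a minimal sketch of the two training objectives, assuming the standard binary cross-entropy formulation (with the common non-saturating generator loss). The function names are our own, and the scores stand in for what a real evaluator network (usually called the discriminator) would output: a number between 0 and 1 for how "real" a sample looks.

```python
import math

def discriminator_loss(d_real: float, d_fake: float) -> float:
    """Low when the evaluator scores real media near 1 and fakes near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake: float) -> float:
    """Low when the evaluator is fooled into scoring fakes near 1."""
    return -math.log(d_fake)

# As fakes get more convincing (higher score from the evaluator),
# the creator's loss falls while the evaluator's loss rises.
for d_fake in (0.1, 0.5, 0.9):
    print(
        f"score on fake={d_fake:.1f}  "
        f"generator loss={generator_loss(d_fake):.2f}  "
        f"discriminator loss={discriminator_loss(0.9, d_fake):.2f}"
    )
```

Because the two losses pull in opposite directions, each training round that helps one network puts pressure on the other, which is why output quality tends to climb over time.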

Autoencoders and face‑swapping

Another common method for face swapping uses autoencoders. These models learn to compress a face into a simpler format and then recreate it.

To deepfake a video, the model learns two faces, the original and the target, and then swaps one for the other while preserving realistic expressions and motion. Autoencoders focus on rebuilding what they have seen from data rather than imagining new content, so they often produce consistent swaps.

Diffusion model deepfakes: The 2024–2025 shift

In recent years, diffusion models have become more popular for generating high‑quality synthetic media. Instead of two competing networks, diffusion models learn to turn noise into a clear image by gradually refining it step by step.

These models have helped raise the realism of faces and full scenes in deepfake content beyond what earlier techniques could do.
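The step-by-step refinement can be sketched in a few lines. This is a toy, not a production sampler: it follows a deterministic DDIM-style update on a five-number "image", and the trained denoising network is replaced by an oracle that already knows the clean signal (an assumption made purely so the loop is runnable). The linear noise schedule and the function name are also illustrative choices.

```python
import math
import random

def reverse_diffusion(clean, steps=50, seed=0):
    """Start from pure noise and refine it step by step toward `clean`."""
    rng = random.Random(seed)
    # alpha_bar[t]: share of signal left at step t (1.0 = clean, near 0 = noise)
    alpha_bar = [1.0 - 0.999 * t / steps for t in range(steps + 1)]
    x = [rng.gauss(0.0, 1.0) for _ in clean]  # pure noise
    for t in range(steps, 0, -1):
        a_t, a_prev = alpha_bar[t], alpha_bar[t - 1]
        # Oracle stand-in for the trained network: the noise currently in x.
        eps = [(xi - math.sqrt(a_t) * ci) / math.sqrt(1.0 - a_t)
               for xi, ci in zip(x, clean)]
        # Clean-signal estimate implied by that noise prediction.
        x0_hat = [(xi - math.sqrt(1.0 - a_t) * ei) / math.sqrt(a_t)
                  for xi, ei in zip(x, eps)]
        # Move to the lower noise level of step t-1.
        x = [math.sqrt(a_prev) * x0i + math.sqrt(1.0 - a_prev) * ei
             for x0i, ei in zip(x0_hat, eps)]
    return x

clean = [0.0, 0.5, 1.0, 0.5, 0.0]
print([round(v, 3) for v in reverse_diffusion(clean)])
```

In a real model, the oracle line is where a large neural network predicts the noise from the noisy input alone, and that network is what learns faces, lighting, and texture from training data.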

Audio and voice clone deepfakes

Deepfakes are not just visual. AI can now clone voices so well that a synthesized voice can read new lines that the real person never spoke. Algorithms learn patterns of a person’s speech from recordings and then generate new audio that mimics tone, cadence, and emotion.

These techniques power voice cloning and synthetic speech generation that can be hard to tell apart from the original.

Types of Deepfakes

Deepfakes don’t all look or behave the same. Some mimic how people move and talk, while others focus on still images or real‑time interaction. Each type poses a different kind of risk, and attackers often combine them in creative ways.

In fact, surveys show that nearly half (46%) of all deepfake incidents involve video content, with image and audio deepfakes also rising rapidly.

- Video deepfakes: These are manipulated videos that make it look like a real person is saying or doing something they never did. Attackers use these in scams, misinformation, and social engineering because humans struggle to tell the difference from real footage.

- Image deepfakes: Image deepfakes change or create photos to insert people into scenes that never existed. These fakes range from realistic portrait swaps to fabricated events or endorsements. Because images spread quickly on social networks and lack motion cues, they can easily mislead viewers at a glance.

- Audio/voice clone deepfakes: Audio deepfakes use AI to mimic a person’s voice so convincingly that synthesized speech can fool people and machines alike. With as little as a few seconds of source audio, attackers can produce a voice clone that sounds authentic, and these are often used in phishing, vishing, or fraud schemes.

- Real‑time/live deepfakes: Real‑time deepfakes are the newest and fastest‑growing threat. These technologies generate synthetic video or audio during live calls or interactions, making it much harder to spot manipulation before it’s too late. Because live detection is difficult, businesses and platforms are racing to build real‑time defenses that can keep up.

How Deepfakes Threaten Businesses

Deepfakes are no longer a fringe tech joke. They have become a real threat to a company’s security, money, and trust.

In fact, deepfake‑related fraud losses worldwide reached nearly $900 million in 2025, with almost $410 million stolen in the first half of the year alone. Losses from executive impersonation and related scams now make up a large share of that total, and the trend is only accelerating.

Here’s how it’s affecting businesses of all sizes:

Executive impersonation & BEC fraud

One of the most dangerous deepfake threats for companies is executive impersonation. In a high‑profile case in Hong Kong, fraudsters used a deepfake video conference to impersonate a company’s CFO and other leaders, convincing an employee to make transfers totaling about HK$200 million (about $25.6 million) before the fraud was discovered.

In another incident in 2025, a finance director at a multinational firm in Singapore joined what appeared to be a meeting with senior executives and was instructed to transfer about US$499,000 to a fraudster‑controlled account before responding to a second request that made him suspicious. The funds were later partly recovered.

These cases show how criminals combine AI‑generated video with social engineering to exploit trust and authority, and convince even experienced staff to move large sums of money.

Financial fraud & unauthorised transactions

Deepfakes also target financial systems directly. In many scams, fraudsters use convincing executive voices or faces to authorise wire transfers, release funds, or approve invoices that should never have been processed.

Because the media looks and sounds authentic, victims often believe the requests are legitimate until it’s too late. Recent industry reports show that attacks like these are now a significant (and growing) part of global financial fraud.

For example, in 2024 criminals impersonated the CEO of Ferrari, sending WhatsApp messages about a secret acquisition and then calling with a cloned version of his voice. The executive who received the call noticed subtle inconsistencies and verified the caller by asking a personal question only the real CEO would know, stopping the scam before any loss occurred.

Social engineering & credential theft

Beyond direct wire fraud, deepfakes help attackers steal credentials and break into systems. Attackers use cloned audio or synthetic identities to trick employees into giving up passwords, MFA codes, or other access credentials.

Once inside, attackers can move laterally through systems, steal data, or implant backdoors while hiding behind convincing synthetic “proof” of legitimacy.

📌Interesting read: Top 10 Examples of Deepfakes Across the Internet

Industry-Specific Deepfake Threats

Deepfakes hit certain industries harder because those sectors rely on remote verification, rapid onboarding, and high‑value transactions that attackers can exploit.

In fact, synthetic identity fraud and deepfake attacks now make up 8.3% of suspicious digital account creations, with financial services and employment onboarding listed as top targets.

Fintech & banking

Fintech and banking firms depend on digital identity checks to open accounts and verify customers. Fraudsters are now using AI‑generated identities, fake selfies, and livestreamed deepfake videos to fool KYC (Know Your Customer) systems and bypass biometric checks just like a real person would.

This has led to a sharp rise in synthetic identity fraud, especially in digital lending and payment platforms. The result is unauthorised accounts, fraudulent transactions, and increased compliance risk for institutions that trusted traditional verification methods.

Crypto & web3

In the crypto and Web3 space, onboarding is especially vulnerable because exchanges and wallets often prioritise speed over deep identity verification. Deepfake technology can be used to pass facial recognition checks and trick platforms into accepting fake users.

Attackers can then take over accounts, move crypto assets, and launder funds before controls can catch up. The rise of deepfake scams tied to investments and trading schemes also shows how easily this technology can be woven into broader fraud campaigns.

Remote hiring

Deepfake risks extend beyond finance into hiring and corporate access.

From 2024 into 2026, North Korean operatives used AI tools like voice changers and face‑swapping software to impersonate remote IT candidates and win jobs at Western firms. They used those positions to send wages back to their government and, in some cases, attempted to exfiltrate sensitive data before being discovered.

This threat makes clear that job onboarding, document verification, and identity checks must include more than just a smooth video call.

Marketplaces & gig economy

In online marketplaces and gig platforms, fraudsters can use synthetic identities to create seller accounts, inflate reviews, or exploit refund and payout systems. In high‑volume environments with minimal manual oversight, such as logistics, a convincing deepfake profile can scale scams rapidly and drain marketplace trust.

Many of these scams start with AI‑generated portraits and lifelike videos to win buyer confidence.

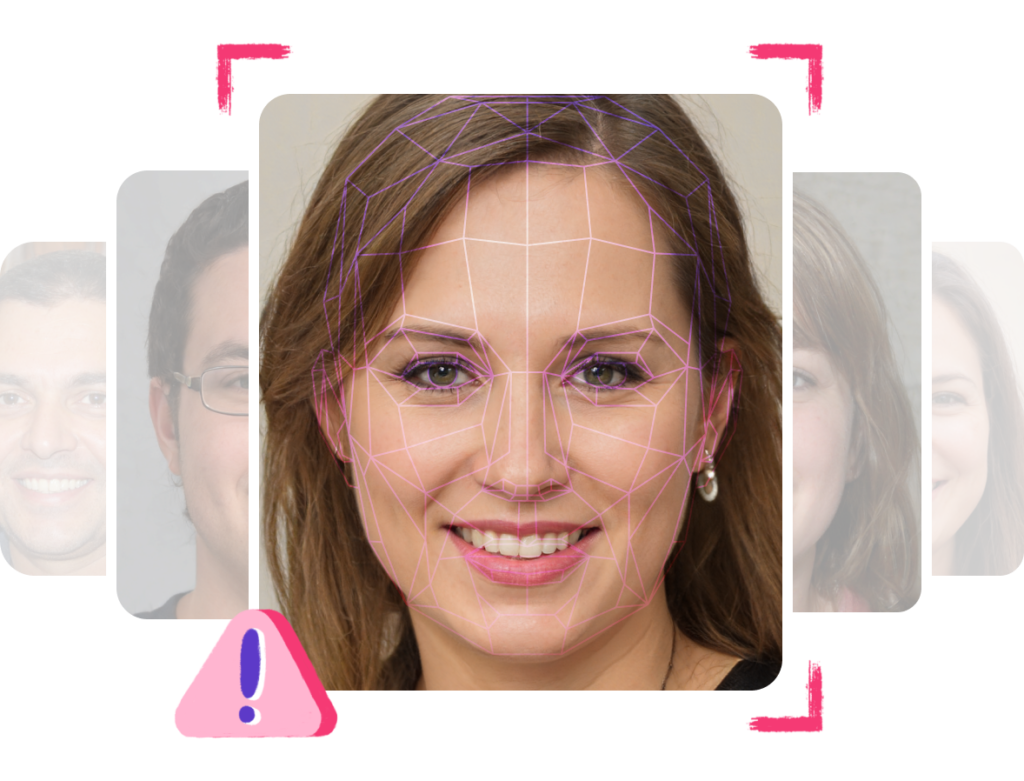

How to Spot a Deepfake

Even when an AI model creates realistic faces or voices, subtle visual and audio clues often give them away. In 2025, research found that human detection of high‑quality deepfake videos is still low, with experts reporting that fewer than one in four deepfake videos are spotted by people alone.

That means knowing what to look for matters more than ever.

Unnatural eye movement and blinking

Human eyes blink in unpredictable patterns, and they react naturally to light, emotion, and focus shifts. Deepfakes often miss these cues, showing blinks that are either too regular or too infrequent, or disconnected from natural reactions.

When the eyes don’t seem to track objects around them or reflexively blink during bright moments, that can be a strong sign the video isn’t real.
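As a rough illustration of the "too regular or too infrequent" idea, the sketch below scores a list of blink timestamps. It assumes an upstream eye tracker has already produced those timestamps, and the two thresholds are illustrative guesses rather than validated values, so treat it as a heuristic sketch and not a detector.

```python
from statistics import mean, pstdev

def blink_flags(blink_times, duration_s, min_rate_per_min=6.0, min_cv=0.2):
    """Return the reasons a blink pattern looks suspicious (empty list if none).

    blink_times: blink timestamps in seconds, in ascending order.
    min_rate_per_min, min_cv: illustrative thresholds, not validated values.
    """
    flags = []
    rate = len(blink_times) / duration_s * 60.0
    if rate < min_rate_per_min:
        flags.append("too infrequent")
    intervals = [b - a for a, b in zip(blink_times, blink_times[1:])]
    if len(intervals) >= 2:
        # Coefficient of variation: near 0 means metronome-like blinking.
        cv = pstdev(intervals) / mean(intervals)
        if cv < min_cv:
            flags.append("too regular")
    return flags

metronome = [3.0 * k for k in range(1, 20)]  # one blink exactly every 3 seconds
print(blink_flags(metronome, duration_s=60))
```

A single flag is only a hint. Compression, low frame rates, and individual differences can all distort blink statistics, so a signal like this works best alongside the other cues in this section.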

Facial boundary artifacts and blurring

Even high‑quality deepfakes can struggle at the edges of the face or where the face meets the background. You might see smudged lines, blurring around the jaw, or soft hair edges that shift oddly when the subject moves.

These small visual glitches happen because AI models often focus most on the center of the face and less on the fine details at the boundaries.

Audio‑visual desynchronisation

In a genuine video, lip movements and speech match perfectly. Deepfakes sometimes fall just a bit short of that reality.

If the voice arrives a split second before the lips move, or if fast speech sounds slightly mismatched, that could mean you’re watching AI‑generated content. Looking at those timing details closely often reveals inconsistencies that the model missed.
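One simple way to measure that timing gap is to slide the audio against the video and see which offset lines up best. The sketch below does this with plain, unnormalised cross-correlation. It assumes two per-frame signals already exist (how open the mouth is, and how loud the audio is, both sampled at the video frame rate), and the function names and two-frame tolerance are illustrative assumptions.

```python
def best_lag(mouth_open, audio_energy, max_lag=10):
    """Frame shift of the audio relative to the video that maximises
    plain (unnormalised) cross-correlation.

    Positive: the voice arrives after the lips move.
    Negative: the voice arrives before the lips move.
    """
    def score(lag):
        return sum(
            mouth_open[i] * audio_energy[i + lag]
            for i in range(len(mouth_open))
            if 0 <= i + lag < len(audio_energy)
        )
    return max(range(-max_lag, max_lag + 1), key=score)

def looks_desynced(mouth_open, audio_energy, tolerance_frames=2):
    """Flag clips whose best-fit offset exceeds the tolerance."""
    return abs(best_lag(mouth_open, audio_energy)) > tolerance_frames

mouth = [0, 0, 0, 1, 3, 1, 0, 0, 2, 4, 2, 0, 0, 1, 0,
         0, 0, 5, 2, 0, 0, 0, 0, 1, 3, 0, 0, 0, 0, 0]
audio_early = mouth[3:] + [0, 0, 0]  # voice runs three frames ahead of the lips
print(best_lag(mouth, audio_early), looks_desynced(mouth, audio_early))
```

Real footage is noisier than this toy signal, and network jitter on live calls can cause harmless offsets, so a practical system would normalise the signals and look for offsets that persist or drift over time.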

Inconsistent lighting and skin texture

Real lighting follows physical rules and affects skin texture in subtle ways. Deepfakes can get close, but they still make mistakes with shadows, brightness, and texture transitions.

You might notice shadows that don’t match the room, highlights that look too smooth, or skin that seems almost airbrushed compared to the neck and background. These lighting and texture clues are often the easiest giveaways on a quick look.

Spotting deepfakes in real‑time video calls

Spotting a deepfake in a recorded video is one thing, but live calls are harder.

Recent research into real‑time detection has found that analyzing eye‑blink and gaze patterns during a live session can still reveal unnatural behaviour because AI models struggle to mimic these human cues perfectly under live conditions.

Researchers are even developing tools that watch for those tiny anomalies to flag live deepfakes as they happen.

| 💡Pro Tip: Before you go on, test your skills in spotting subtle deepfake cues with the interactive HyperVerge Deepfake Game! |

Legal and Regulatory Landscape

As deepfakes have grown more realistic and more widely misused since 2024, lawmakers around the world have begun to catch up with the technology.

Many governments are now updating or creating laws to make sure AI‑generated content does not harm people, business systems, elections, or privacy.

India: MeitY Advisory, IT Act and Digital India Act (2025–2026)

In India, combating deepfakes started with advisories from the Ministry of Electronics and Information Technology (MeitY) that urged platforms to immediately address harmful synthetic media as early as 2023.

These efforts evolved into the 2026 amendments to India’s Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, which officially regulate synthetically generated information such as deepfake audio, images, and video. Platforms must now clearly label AI‑generated content and quickly remove unlawful deepfakes under existing law. The amendments came into force on February 20, 2026, and expand intermediary obligations under the IT Act, 2000, which already criminalises identity theft and deceptive communication.

India is also drafting a broader Digital India Act to replace the older IT framework and give a deeper legal footing to emerging tech regulation, including penalties and enforcement structures for misuse of AI.

EU AI Act Article 50: Labelling Mandate (Enforcement 2026)

In the European Union (EU), the AI Act became law in 2024. Its Article 50 requires providers of generative AI systems to mark or label outputs such as images, video, and audio that are artificially generated or manipulated.

That means deepfakes must be clearly identifiable so users know they are not real. These transparency obligations are set to become enforceable in 2026, pushing companies to embed provenance tags or similar markers to show when media is synthetic.

The European Commission is also developing a Code of Practice to help implement these labelling and detection standards.

Global Overview: United States and United Kingdom

In the United States, the TAKE IT DOWN Act was signed into law in 2025. It makes the distribution of non‑consensual intimate deepfake imagery illegal and requires online platforms to remove such content within 48 hours of notice. The law aims to protect victims of deepfake abuse and impose accountability on both individuals and hosting services.

In the United Kingdom, the Online Safety Act 2023 already places obligations on platforms to block illegal and harmful content, including deepfakes used in abuse or fraud. Enforcement by the UK regulator Ofcom can carry heavy fines for non‑compliance. In 2026, the UK also criminalised creating non‑consensual sexual deepfakes, making them a priority offence under the Online Safety Act and reinforcing enforcement against the tools that enable this abuse.

These legal shifts show that regulators are defining clear rules on what deepfakes are, how they should be disclosed, and what platforms must do about them so that businesses, citizens, and victims of misuse all have legal protections and enforcement pathways. For businesses, this means implementing robust safeguards such as AML solutions and digital identity verification tools to detect fraud, verify users, and comply with regulations.

How to Protect Your Business from Deepfakes

Deepfakes are evolving fast, and so are the tactics criminals use to exploit them. Protecting your business requires a mix of technology, awareness, and well‑defined security practices.

Follow this step‑by‑step checklist to shield your company from deepfake risks.

1. Implement multilayer identity verification

Identity verification is your first line of defense. Use a multilayered approach combining biometric verification, document authentication, and multi-factor authentication.

This ensures that every account, login, or transaction is tied to a real person and reduces the risk of unauthorized access. Layered verification makes it much harder for attackers to bypass your systems with fake identities or deepfake media.

2. Verify identity at onboarding with certified liveness detection

When new users join your platform, don’t rely on static documents alone. Certified liveness detection confirms in real time that a person is physically present during verification.

Single-image passive liveness checks, for example, require just a single selfie upload and nothing more. This makes onboarding smooth for users while drastically reducing the chance of deepfake or synthetic identity attacks.

3. Establish verbal code word protocols

For high‑risk transactions, implement verbal code word protocols. Ask employees, partners, or clients to provide pre-agreed code words during calls or meetings before sensitive actions are approved.

This simple practice can immediately flag an impersonation attempt, as deepfakes or voice clones won’t know the secret words. It’s a lightweight, human-centered safeguard that complements your AI defenses.

4. Train employees and partners

Even the best technology can fail if your team isn’t aware of the risks. Educate staff and partners on how to recognize suspicious communications, look for signs of deepfakes, and escalate incidents.

Regular training helps employees identify unnatural speech patterns, blurred edges in video, or mismatched lighting before damage occurs. Awareness and vigilance are as important as technical safeguards.

5. Develop and test an incident response plan

Prepare for the worst before it happens. Your plan should include steps for rapid identification, containment, and communication to stakeholders.

Define clear protocols for verifying content, sharing accurate information, and mitigating reputational or financial impact. Regularly test your response plan with simulated deepfake scenarios to ensure your team reacts quickly and effectively.

Staying connected with cybersecurity experts and industry associations keeps you informed about emerging deepfake threats.
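To make step 3 of the checklist more concrete: if an approval tool needs to check a code word that an approver types in after hearing it on a call, the word itself should not sit in a database in plaintext. The sketch below is one possible approach using only Python's standard library. The function names, the normalisation rule, and the iteration count are hypothetical choices for illustration, not a description of any specific product.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # illustrative work factor

def _normalise(code_word: str) -> bytes:
    # Ignore case and extra spaces so "Blue  Heron" matches "blue heron".
    return " ".join(code_word.lower().split()).encode("utf-8")

def enroll_code_word(code_word: str):
    """Return (salt, digest) to store instead of the code word itself."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", _normalise(code_word), salt, ITERATIONS)
    return salt, digest

def verify_code_word(spoken: str, salt: bytes, digest: bytes) -> bool:
    """Check what the caller said against the stored digest."""
    candidate = hashlib.pbkdf2_hmac("sha256", _normalise(spoken), salt, ITERATIONS)
    # Constant-time comparison avoids leaking how close a guess was.
    return hmac.compare_digest(candidate, digest)

salt, digest = enroll_code_word("Blue Heron 42")
print(verify_code_word("blue heron 42", salt, digest))
print(verify_code_word("blue herring 42", salt, digest))
```

The technical check is the easy part. The protocol only works if code words are agreed over a separate trusted channel, rotated regularly, and never shared in the same call or chat thread where the request arrives.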

How HyperVerge Detects Deepfakes

As deepfake technology advances, simple face matching isn’t enough. HyperVerge combines its facial recognition tools with liveness detection, spotting tiny cues that static images or AI-altered faces can’t mimic.

In independent benchmarks, HyperVerge’s deepfake and liveness detection tech is shown to deliver around 98.5% accuracy and detect deception in under 3 seconds, giving enterprises a strong balance of speed and security.

Passive liveness detection vs. active liveness: Why it matters in 2026

In the past, many systems used active liveness checks, in which a user is asked to perform a gesture, such as a blink, a smile, or a head tilt, to prove they’re alive. That method does a decent job, but it can frustrate users and slow down onboarding. It also doesn’t fully stop fake video attacks that mimic gestures.

HyperVerge leans on passive liveness detection, which means it verifies you without asking you to do anything extra beyond a normal selfie. The AI analyzes subtle details like skin texture, light reflections, and facial features to tell if a person is real. Passive liveness significantly smooths the user experience while still keeping deepfake attacks at bay.

Compared to active liveness, passive methods reduce friction, lower dropoff during sign‑up flows, and help businesses keep customers engaged. You can think of it as security that works with users, not around them.

Single‑image passive liveness: How it works

HyperVerge pushes passive liveness further by doing it with a single image. You don’t need to record a video or respond to prompts. Just snap a selfie, and the system instantly checks for signs of life.

Behind the scenes, machine learning models examine the photo for complex biometric cues that differentiate real faces from static images, videos, 3D masks, or deepfakes.

This single‑image approach helps in two big ways:

- First, it makes onboarding almost instant for users.

- Second, it keeps fraud rates low because the AI is trained on massive, diverse datasets.

HyperVerge has processed over 850 million liveness checks over the past 3 years with over 99.9% accuracy, showing how well the model performs in real-world environments.

iBeta Level 2 Certification: What it means for enterprise compliance

For a business relying on identity checks, certifications matter as much as raw performance. HyperVerge’s passive liveness technology has achieved ISO/IEC 30107‑1/30107‑3 Level 2 compliance through rigorous testing by iBeta, an independent lab accredited by NIST. That level of certification means the system has been tested against advanced spoofing attempts, including sophisticated masks and deepfakes, and proven to hold up.

This level of compliance is a big deal for enterprise users who need to meet strict regulatory or security requirements. It signals to compliance teams that the tech is validated to industry‑recognized standards for presentation attack detection (PAD).

What Will Deepfakes Look Like in 5 Years?

By now, it’s clear that deepfake technology won’t just stay a niche tool for pranks and scams. It will become far more sophisticated, creating videos and voices that look and sound real to everyday people, and that will challenge how we trust digital media.

In fact, researchers see deepfakes moving into real‑time synthesis and interactive content, making them harder to spot with human judgment alone. As this shift happens, businesses offering facial recognition software and identity verification will need smarter detection and anti‑deepfake defenses baked into every process.

That’s where HyperVerge steps in with advanced face authentication and deepfake-detection software. Our technology has verified over 800 million identities across 195+ countries, helping companies secure access and build trust. Customers using HyperVerge see results quickly, with reduced drop‑off rates, twice as many fraud attempts caught, 0.2-second authentication, and 95% straight‑through processing. If you want to stay ahead of deepfake threats and secure your identity flows, explore our solutions and book a demo today.