What if you couldn’t trust your own eyes anymore?

That question, once the stuff of science fiction, is now a daily reality. Deepfakes are AI-generated media that can clone a face, steal a voice, and fabricate entire conversations. Recently, deepfakes have crossed a threshold that researchers warned us about for years. Voice cloning has reached what experts call the “indistinguishable threshold,” where just a few seconds of audio can generate a convincing clone complete with natural intonation, rhythm, emotion, and even breathing noise.

The numbers tell a startling story. The volume of deepfakes has grown explosively, from roughly 500,000 online in 2023 to about 8 million by 2025, with annual growth nearing 900%. And the consequences are no longer just viral videos of celebrities. In February 2024, a finance worker was tricked into wiring $25 million after a deepfake video conference call, and losses from deepfake fraud in North America alone exceeded $200 million in just the first quarter of 2025.

What makes today’s deepfakes different is not just the scale but the accessibility. Consumer tools have pushed the technical barrier almost to zero, meaning anyone can describe an idea, let an AI draft a script, and generate polished audio-visual media in minutes. Deepfakes are no longer the domain of nation-state hackers or Hollywood studios. They are a tool available to virtually anyone with a laptop and an agenda.

In this post, we’ve compiled the most striking, shocking, and instructive deepfake examples of our time. Whether you’re a curious reader, a cybersecurity professional, or someone who simply wants to stay one step ahead, understanding these cases is no longer optional. It’s essential.

Because in a world where seeing is no longer believing, knowledge might be your only defense.

What Is a Deepfake?

A deepfake is AI-generated or AI-manipulated media that convincingly replaces one person’s face, voice, or both, or fabricates someone’s likeness entirely. Unlike older editing techniques, deepfakes are generated by generative adversarial networks (GANs) or diffusion models, making them increasingly difficult to detect with the naked eye.

Modern deepfakes are used for political disinformation, financial fraud, identity theft, and non-consensual intimate content.

Real Deepfakes: Verified Incidents

Political Deepfakes

1. Wartime Disinformation: The Zelenskyy Surrender (2022)

- The Incident: A video circulated on social media appearing to show President Volodymyr Zelenskyy urging Ukrainian troops to lay down their arms.

- The Tech: A full AI Deepfake—one of the first high-profile uses of the tech to undermine military morale during active combat.

- The Outcome: Quickly debunked when Zelenskyy posted a live rebuttal within hours, though it had already spread widely.

2. The “Cheapfake” Prototype: Nancy Pelosi (2019)

- The Incident: Footage of then-House Speaker Nancy Pelosi was altered to make her appear intoxicated or slurring her speech.

- The Tech: A “Cheapfake”—this wasn’t AI-generated, but rather simple, slowed-down video footage.

- The Outcome: It served as a watershed moment for policy, garnering millions of views and pushing the U.S. Congress to accelerate deepfake legislation.

3. Mass Electoral Interference: Indian General Election (2024)

- The Incident: Fabricated clips of major party leaders, including Narendra Modi and Rahul Gandhi, flooded platforms like WhatsApp and YouTube.

- The Tech: AI-Generated Deepfakes used as a standardized tool for narrative control.

- The Outcome: The Election Commission of India had to issue emergency directives, signaling that deepfakes are now a staple in modern electoral interference.

4. AI Audio Deception: U.S. Presidential Primary (2024)

- The Incident: New Hampshire voters received robocalls featuring an AI-generated voice imitating President Joe Biden, falsely advising them not to vote in the primary.

- The Tech: AI Audio Cloning—proving that misinformation doesn’t need a visual component to be effective.

- The Outcome: Led to the first criminal charges in the U.S. specifically tied to AI-generated election interference.

Celebrity Deepfakes

5. Non-Consensual Imagery: Taylor Swift (2024)

- The Incident: Sexually explicit, AI-generated images of the singer went viral on X (formerly Twitter), garnering tens of millions of views in hours.

- The Tech: Generative AI Imagery (Stable Diffusion/Midjourney style), specifically used for AI-generated Non-Consensual Intimate Imagery (NCII).

- The Outcome: Triggered a massive public outcry and became the primary catalyst for U.S. federal legislation (like the DEFIANCE Act) to protect victims of AI-generated “revenge porn.”

6. Unauthorized Endorsements: Tom Hanks Dental Ad (2023)

- The Incident: An AI-generated version of Tom Hanks appeared in a video promoting a dental insurance plan he had never heard of.

- The Tech: Visual Likeness Deepfake, mapping the actor’s face and voice onto a promotional script.

- The Outcome: Hanks issued a public warning on Instagram, sparking a global debate over “Right of Publicity”—the legal right of an individual to control the commercial use of their identity.

7. Financial Fraud: The “Celeb-Bait” Crypto Scams

- The Incident: High-profile figures like Kim Kardashian and Elon Musk appeared in videos “guaranteeing” returns on specific cryptocurrency platforms.

- The Tech: Multi-Modal Deepfakes, often syncing real interview footage with AI-dubbed audio to make the scam look like a legitimate endorsement.

- The Outcome: These “celeb-bait” schemes have defrauded victims of millions of dollars globally, highlighting the difficulty of policing cross-border financial crimes.

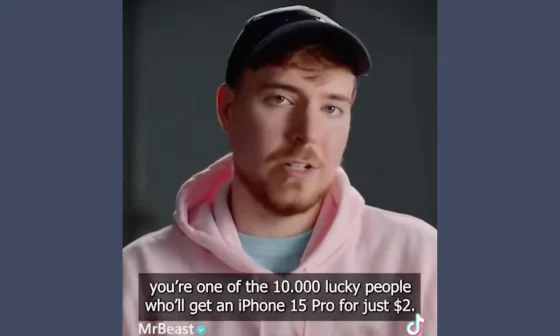

8. Platform Accountability: The Fake MrBeast Ad (2023)

- The Incident: A deepfake of YouTube star MrBeast appeared as a paid advertisement on TikTok, claiming to give away iPhones for $2.

- The Tech: AI Voice and Face Synthesis, optimized to bypass automated ad-review systems.

- The Outcome: Because the video was a paid ad, it raised serious questions about the legal liability of social media platforms for profiting from AI-generated fraudulent content.

Financial Fraud Deepfakes

9. Multi-Party Video Fraud: Hong Kong Arup Case (2024)

- The Incident: A finance employee at the engineering firm Arup was tricked into transferring $25.6 million after a video call.

- The Tech: Real-Time Multi-Persona Deepfake. The attackers didn’t just impersonate the CFO; they created AI versions of every other participant on the call to provide “social proof” and consensus.

- The Outcome: One of the largest single deepfake financial losses on record. It proved that “seeing is no longer believing,” even in a live, multi-person digital environment.

10. The Audio Pioneer: UK Energy Voice Clone (2019)

- The Incident: The CEO of a UK energy firm transferred $243,000 to a “supplier” after a phone call that appeared to come from his boss (the CEO of the German parent company).

- The Tech: AI Voice Cloning. This was the first major “vishing” (voice phishing) case where the AI captured the specific “melody” and German accent of the executive perfectly.

- The Outcome: A landmark case for the insurance industry (Euler Hermes), proving that audio-only deepfakes could bypass traditional verbal authorization protocols.

11. Organized Crime: Dubai Deepfake Syndicate (2023)

- The Incident: Dubai Police dismantled an international gang of 43 people that had stolen $36 million from two Asian companies.

- The Tech: Hybrid Deepfake/Hacking. The gang hacked corporate emails to monitor real deals, then used AI voice clones to call branch managers and “confirm” the fraudulent wire instructions.

- The Outcome: Resulted in “Operation Monopoly,” a global police effort involving Interpol. It showed that deepfakes are now a standard tool for large-scale, organized money-laundering syndicates.

12. Intercepted Recovery: Singapore CFO Scam (2025)

- The Incident: A finance director nearly lost $499,000 during a Zoom call featuring deepfakes of the CEO and a “lawyer” who forced the victim to sign a fake NDA.

- The Tech: Deepfake Video & Legal Impersonation. The scammers added a layer of fake “legal pressure” to discourage the victim from double-checking the request.

- The Outcome: A rare success story—Singapore’s Anti-Scam Centre coordinated with Hong Kong police to freeze the funds just days after the transfer, highlighting the need for rapid international banking cooperation.

13. Market Manipulation: Deepfake Investor Calls (2024)

- The Incident: Fraudsters used AI-generated audio of executives to “leak” false information during or around investor calls to manipulate stock prices (front-running).

- The Tech: Generative Audio Synthesis, designed to mimic the tone and technical jargon used by C-suite executives during earnings reports.

- The Outcome: Prompted a joint SEC and FINRA bulletin warning that deepfakes are now a threat to market integrity and a tool for “pump-and-dump” schemes.

Voice & Audio Deepfakes

15. The $35 Million Voice Heist: UAE Bank (2020)

- The Incident: A bank manager authorized $35 million in transfers after receiving a call from a “director” he recognized, claiming the funds were for a corporate acquisition.

- The Tech: Advanced Voice Synthesis paired with coordinated “legal” emails. The criminals simulated a trusted relationship to bypass security.

- The Outcome: A massive multi-jurisdictional investigation involving 17 suspects and banks across the globe. This remains a benchmark for how AI can turn a trusted voice into a multi-million dollar master key.

16. The Teams Meeting Trap: WPP CEO Mark Read (2024)

- The Incident: Fraudsters used a WhatsApp photo of CEO Mark Read to lure a senior executive into a Microsoft Teams call. During the call, they used a voice clone and YouTube footage to request the setup of a new business entity.

- The Tech: Vishing/Meeting Hijacking. By using a combination of a known photo, cloned audio, and “recycled” video, they tried to create a sense of executive urgency.

- The Outcome: Failed. The WPP team’s vigilance prevented any loss. Read later sent a company-wide warning: “Just because the account has my photo doesn’t mean it’s me.”

17. The Secret Acquisition: Ferrari CEO (2024)

- The Incident: Scammers targeted a Ferrari executive with a “hush-hush” acquisition story. They followed up with a call using a deepfake of CEO Benedetto Vigna—complete with his specific southern Italian accent.

- The Tech: High-Fidelity Audio Cloning. The attackers specifically tuned the AI to mimic Vigna’s unique regional speech patterns to increase authenticity.

- The Outcome: Prevented. The executive became suspicious of the “robotic” tone and asked a personal question only the real CEO could answer. The scammer hung up immediately.

18. Election Sabotage: Slovakia Audio Fake (2023)

- The Incident: Days before the election, a fake audio clip of opposition leader Michal Šimečka discussing how to “rig” the vote was leaked. Because it happened during a 48-hour media blackout, he couldn’t legally debunk it in time.

- The Tech: Strategic Audio Deepfake. Audio is easier to produce and harder for the average voter to verify than video, making it the perfect weapon for a timed “information strike.”

- The Outcome: Šimečka’s party lost to Robert Fico’s pro-Russia Smer party. Researchers noted that audio fakes are now the highest-impact threat to democracy due to their ease of creation and rapid “virality.”

KYC Bypass Deepfakes

19. The Death of the Physical ID: OnlyFake (2024)

- The Incident: An underground service called “OnlyFake” generated highly realistic, AI-synthesized photos of passports and driver’s licenses from 26 countries.

- The Tech: Neural Image Synthesis. Instead of “Photoshopping” an existing ID, the AI generated a brand-new, high-resolution image of a document from scratch, complete with realistic shadows, backgrounds, and “security features.”

- The Outcome: Successfully bypassed Know Your Customer (KYC) checks on major exchanges like OKX and Binance for just $15. It proved that automated document verification can no longer distinguish between a photograph of a real plastic card and a purely digital AI creation.

20. KYC-Bypass-as-a-Service: Underground Markets (2024)

- The Incident: Research by Trend Micro revealed a flourishing “service economy” on dark web forums where attackers can buy a guaranteed KYC bypass for as little as $30 to $200.

- The Tech: Industrialized Fraud Kits. These aren’t just single images; they are “packs” designed to defeat specific providers like Onfido, Jumio, and Sumsub.

- The Outcome: The democratization of fraud. High-level deepfake technology is now accessible to “low-skilled” attackers, making identity theft a cheap, on-demand commodity rather than a sophisticated state-level operation.

21. The “Injection” Revolution: Digital Identity Crisis (2023–2025)

- The Incident: Attackers shifted from “Presentation Attacks” (holding a mask to a camera) to “Injection Attacks”—bypassing the camera entirely to feed deepfake data directly into a system’s data stream.

- The Tech: Virtual Cameras & Face-Swap Injection. Attackers intercept the biometric pipeline and substitute a synthetic feed for the real camera; reported deepfake bypasses of this kind rose 704% in 2023.

- The Outcome: An “existential crisis” for finance. With 88% of deepfake fraud targeting the crypto sector and an estimated $40 billion in projected losses by 2027, FinCEN and FS-ISAC have issued emergency alerts that traditional biometric “liveness” checks are failing.
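
One weak but cheap signal against the injection attacks described above is the label the capture device reports. The sketch below is a minimal illustration, not a production control: the deny-list entries and the `screen_capture_session` routing decision are assumptions made for this example, and device labels can be spoofed, so a match should escalate a session rather than reject it outright.

```python
# Illustrative substrings seen in virtual-camera device labels.
# This list is an assumption for the sketch, not an exhaustive or vendor-supplied set.
VIRTUAL_CAMERA_HINTS = (
    "obs virtual",
    "manycam",
    "droidcam",
    "snap camera",
    "virtual cam",
)


def looks_like_virtual_camera(device_label: str) -> bool:
    """Return True if the reported camera label contains a virtual-camera hint."""
    label = device_label.strip().lower()
    return any(hint in label for hint in VIRTUAL_CAMERA_HINTS)


def screen_capture_session(device_label: str) -> str:
    """Map the label check to a hypothetical routing decision."""
    # Labels are easy to spoof, so treat a match as a reason to look closer,
    # not as proof of fraud.
    if looks_like_virtual_camera(device_label):
        return "manual_review"
    return "continue"
```

In practice a check like this would sit alongside liveness detection and stream-integrity controls, since an attacker who renames the virtual device passes it trivially.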

India-Specific Deepfake Cases

Deepfake cases in India have surged by 550% since 2019, with projected losses reaching ₹70,000 crore in 2024 alone, according to a Pi-Labs report. Around 4,000 mule accounts are identified in India every day, while nearly 65% of cyber-fraud incidents go unreported. A 2025 analysis found that 47% of Indian adults have either been victims of, or know someone who has been a victim of, an AI voice-cloning or deepfake scam, nearly double the global average of 25%. Of Indian victims of AI voice scams, 83% suffered monetary loss, with almost half losing over ₹50,000.

22. The Viral Catalyst: Rashmika Mandanna (2023)

- The Incident: A high-quality deepfake video appearing to show the Indian actor entering an elevator went viral. It had been created by “face-swapping” her likeness onto footage of another woman.

- The Tech: Face-Swap Deepfake. While technically simple, its realism and the “viral speed” of Indian social media made it inescapable within hours.

- The Outcome: A turning point for Indian policy. It prompted Prime Minister Modi to label deepfakes a “threat to society,” leading to new IT Amendment Rules that force platforms to remove such content within 24 hours.

23. Trust as a Weapon: Ankur Warikoo Stock Scams (2024–2025)

- The Incident: Fraudsters created perfectly synced videos of finance influencer Ankur Warikoo “recommending” volatile stocks and private WhatsApp investment groups.

- The Tech: Synthesized Brand Cloning. The AI didn’t just copy his voice; it replicated his specific hand gestures, facial expressions, and “educational” tone to lower the victims’ psychological defenses.

- The Outcome: Highlighted the “Platform Lag” problem. Despite Warikoo using official Brand Rights tools, the content remained live long enough to cause significant financial losses, sparking a debate on whether platforms are doing enough to stop “indistinguishable” fraud.

24. A Legal Landmark: Arijit Singh v. Codible Ventures (2024)

- The Incident: AI tools were used to recreate singer Arijit Singh’s voice and likeness for unauthorized merchandise, domain names, and digital assets.

- The Tech: AI Voice & Personality Synthesis. The tech allowed creators to make “new” songs or endorsements in Singh’s signature style without his involvement.

- The Outcome: India’s first major AI personality ruling. The Bombay High Court ruled that a celebrity’s “personality attributes” (voice, name, likeness) are legally protectable. This set the standard for “Moral Rights” in the age of AI, preventing the unauthorized digital “cloning” of human talent.

AI Tools Used to Create Deepfakes

Modern deepfakes are produced using a range of commercially available and open-source tools. Knowing which tools are used to create deepfakes is part of building effective detection.

Face-swap tools: DeepFaceLab, Reface, FaceSwap (open-source) — used primarily for video manipulation.

Voice cloning tools: ElevenLabs, Resemble AI, and various open-source models like Tortoise-TTS — increasingly used in fraud schemes.

Full synthetic video generation: Sora (OpenAI), Runway Gen-3, Kling — capable of generating photorealistic video from text prompts alone, with no source media required.

The barrier to entry has fallen dramatically. Credible deepfakes that would have required significant computing resources in 2020 can now be generated in minutes on a consumer laptop.

Deepfake Examples in Banking & Finance

The financial sector is the most targeted industry for deepfake fraud. According to threat intelligence data, deepfake-related fraud attempts against financial institutions grew by over 700% between 2022 and 2024. The primary attack vectors are:

CEO fraud / BEC via deepfake video call: impersonating executives during video conferences to authorize payments (see Hong Kong example above).

Voice clone fraud: impersonating executives or clients over the phone to trigger wire transfers or account changes.

KYC bypass attacks: using synthetic or manipulated faces and voices to defeat identity verification during account opening or step-up authentication.

Investment scam baiting: using celebrity deepfakes in video ads to lure retail investors into fraudulent schemes.

HyperVerge’s deepfake detection is built specifically for financial services environments — detecting synthetic faces, injected video feeds, and voice anomalies in real-time during onboarding and authentication.

How Are Deepfakes Detected?

After each of the cases above, investigators and platforms applied a combination of technical and contextual detection methods:

Forensic analysis — examining compression artifacts, inconsistent lighting, blinking patterns, and facial boundary anomalies. Early deepfakes had obvious tells (ear distortion, hair edges). Modern deepfakes are far harder to detect visually.

AI-based detection models — purpose-built classifiers trained on deepfake datasets. These include liveness detection (used in KYC), media forensics tools, and real-time video analysis.

Behavioral signals — inconsistencies in the subject’s speech rhythm, micro-expressions, and response latency that differ from authentic video.

Provenance verification — content credentials (C2PA standard) embedded at capture, allowing downstream verification of whether media has been altered.
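
The idea behind provenance verification can be sketched in a few lines: a credential is computed over the media when it is captured, and anyone downstream can recompute it to see whether the bytes have changed. The example below is a simplified stand-in, not the C2PA format. Real content credentials use certificate-based signatures over a structured manifest, whereas this sketch uses a shared-secret HMAC from the Python standard library, and the function names are hypothetical.

```python
import hashlib
import hmac


def sign_media(media_bytes: bytes, device_key: bytes) -> str:
    """Produce a credential for the media at capture time (simplified stand-in)."""
    digest = hashlib.sha256(media_bytes).digest()
    return hmac.new(device_key, digest, hashlib.sha256).hexdigest()


def verify_media(media_bytes: bytes, device_key: bytes, credential: str) -> bool:
    """Return True only if the media still matches the credential issued at capture."""
    expected = sign_media(media_bytes, device_key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, credential)
```

Any edit to the media, even a single byte, changes the hash and makes verification fail. Note that this only shows the file was altered after signing; it says nothing about media that never carried a credential in the first place.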

How to Spot a Deepfake in 7 Signs

| # | Signal | What to Look For |
|---|---|---|
| 1 | Facial boundary artifacts | Blurring or flickering at the hairline, ears, or neck |
| 2 | Unnatural blinking | Too infrequent, too rapid, or asymmetric |
| 3 | Lighting inconsistency | Shadows or highlights on the face that do not match the rest of the scene |
| 4 | Mouth/audio sync | Lip movement slightly out of sync with speech |
| 5 | Skin texture | Overly smooth, waxy, or lacking natural variation |
| 6 | Eye movement | Glassy appearance; pupils don’t track naturally |
| 7 | Background distortion | Objects near the subject’s edges warp or blur |

Can you spot deepfakes reliably? Try out our Deepfake Game!

How Deepfakes Can Impact Your Business

Thanks to generative adversarial networks (GANs), deepfake technology can now create hyper-realistic digital faces, cloned voices, and manipulated videos that are nearly impossible to distinguish from real people.

Amusing as that may be in entertainment, such advanced cloning presents a growing security risk for businesses, and it can fool even the most advanced identity verification systems.

Many organizations rely on biometric authentication, video KYC, and voice recognition for secure customer onboarding and fraud prevention—but deepfakes can now bypass these safeguards with alarming accuracy.

| 🤯 Did You Know: A Wall Street Journal reporter used an AI voice clone to bypass Chase customer service and their automated biometric voice print security system as an experiment. The cloned voice passed authentication and was granted access to a live bank agent, showing that stronger verification is needed to protect businesses against deepfake fraud. |

Key risks of deepfakes for businesses

Identity verification fraud

Fraudsters use deepfakes to create synthetic identities, impersonate real customers, and hijack existing accounts. This compromises the onboarding and KYC process, exposing the business to the risk of facilitating financial crimes like money laundering.

| 🤯 Did you know: Fraudsters use synthetic identities built on the social security numbers of deceased individuals to open new bank accounts, secure loans, and launder money. |

Fraudulent transactions

Fraudsters use deepfake voices, videos, and manipulated documents to impersonate executives or account holders. Businesses, especially banks, can end up approving fake wire transfers, fraudulent loan requests, and unauthorized payment changes, resulting in financial losses and compliance violations.

Data breaches

Fraudsters use deepfake images and voice clones to trick bank and FinTech employees into resetting credentials, approving unauthorized access, and exposing confidential data. If an employee fails to verify identity properly, it may result in security breaches, data leaks, and financial losses.

| 💡Pro Tip: Implement AI-powered deepfake detection and enforce strict multi-factor authentication (MFA) protocols to reduce the risk of social engineering attacks. |

Reputational damage

A single deepfake-driven fraud incident can lead to public backlash, loss of business, and long-term reputational harm. It raises doubts about the company’s security measures and its ability to protect customers, putting its credibility at risk.

Regulatory fines

Businesses, especially those regulated under KYC and AML requirements, face significant regulatory fines, legal action, and potential license suspension if they fail to prevent fraudulent accounts and detect illicit transactions.

Market manipulation

Fraudsters use deepfakes to spread false financial information and manipulate stock prices. Such incidents are frequent in crypto markets, where fake executive announcements and fabricated investor updates can drive price swings, panic selling, or artificial demand.

| Interesting case point: After the collapse of cryptocurrency exchange FTX, a deepfake video of its CEO Sam Bankman-Fried (SBF) circulated on Twitter, offering “compensation” to users in an attempt to steal their funds. |

Protecting Your Business from Deepfakes

Rapid advances in AI and machine learning point to a future where deepfakes will be flawless. While flawless fakes are still rare, businesses need to strengthen their fraud detection systems to catch synthetic IDs and cloned voices attempting to bypass multi-layered KYC checks.

While there are telltale signs to detect deepfakes in many cases, here are three methods businesses can implement together to strengthen deepfake detection:

Anomaly detection

Perfect deepfakes are rare. Even seemingly flawless ones contain subtle flaws that AI tools and verification systems can spot.

Some of these anomalies include:

- Unnatural blinking, i.e. blinking too much or too little

- Out-of-sync lip and speech movement

- Inconsistent skin texture

- Distortion and flickering during head movements

- Lighting and shadow inconsistencies

- Sudden blurring, warping, or movement shift
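
To make one of these anomalies concrete, here is a minimal sketch of a blink-rate check. It assumes an upstream detector (not shown) has already produced the timestamps at which blinks occur, and the `low` and `high` thresholds are illustrative assumptions rather than calibrated values; a real system would tune them on labeled data and combine this signal with the others in the list.

```python
from typing import Sequence


def blinks_per_minute(blink_times_s: Sequence[float], clip_length_s: float) -> float:
    """Convert a list of blink timestamps (seconds) into a per-minute rate."""
    if clip_length_s <= 0:
        raise ValueError("clip_length_s must be positive")
    return len(blink_times_s) * 60.0 / clip_length_s


def blink_rate_is_suspicious(
    blink_times_s: Sequence[float],
    clip_length_s: float,
    low: float = 6.0,    # assumed lower bound, blinks per minute
    high: float = 40.0,  # assumed upper bound, blinks per minute
) -> bool:
    """Flag clips whose blink rate falls outside the assumed plausible range."""
    rate = blinks_per_minute(blink_times_s, clip_length_s)
    return rate < low or rate > high
```

For example, a 30-second clip with a single detected blink works out to 2 blinks per minute and would be flagged, while the same clip with 8 blinks (16 per minute) would not.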

Liveness checks

A liveness check verifies that the person on the other end of the screen is a real person and not an AI-generated fake. Passive liveness detection runs in the background without requiring user interaction, analyzing subtle details that deepfakes struggle to replicate.

| 💡 Pro tip: At HyperVerge, we highly recommend implementing single-image passive liveness checks, which ask the user to upload a single image and nothing more. This keeps the verification process simple and effortless for the user, reducing the risk of drop-offs. |

Ongoing monitoring

Deepfake threats don’t stop at onboarding. Fraudsters may use AI-generated content to bypass initial security checks and later modify their tactics to evade detection.

As a part of KYC implementation, businesses must consistently monitor the behavioral and transaction patterns of customers to detect suspicious activities.

Some ongoing checks to detect deepfakes include:

- Periodically re-verifying that the user’s biometric data matches their original records

- Tracking unique device characteristics through device fingerprinting

- Scanning for manipulated facial or voice data in ongoing interactions

- Flagging inconsistencies in tone, speech, and background noise that may indicate voice cloning
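
Two of the ongoing checks above, device fingerprinting and periodic re-verification, can be sketched as follows. This is a simplified illustration under stated assumptions: the attribute names, the 180-day re-verification window, and the flag labels are all hypothetical, and production fingerprinting relies on far richer signals than a hash of a few fields.

```python
import hashlib
from datetime import date, timedelta
from typing import Dict, List


def device_fingerprint(attributes: Dict[str, str]) -> str:
    """Hash device attributes in a stable key order to get a comparable fingerprint."""
    canonical = "|".join(f"{key}={attributes[key]}" for key in sorted(attributes))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def monitoring_flags(
    enrolled_fingerprint: str,
    current_attributes: Dict[str, str],
    last_verified: date,
    today: date,
    reverify_after_days: int = 180,  # assumed policy window
) -> List[str]:
    """Return the monitoring flags raised for one customer session."""
    flags = []
    # A fingerprint mismatch means the session comes from an unrecognized device.
    if device_fingerprint(current_attributes) != enrolled_fingerprint:
        flags.append("new_device")
    # Biometric records older than the policy window should be refreshed.
    if today - last_verified > timedelta(days=reverify_after_days):
        flags.append("reverification_due")
    return flags
```

A session from the enrolled device with a recent verification returns an empty list, while a session from an unknown device with stale biometrics returns both flags, which could then trigger a step-up check.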

Remember, deepfake technology and its creators keep evolving, producing ever more sophisticated AI-generated clones.

The only way to stay ahead is to keep updating fraud detection systems and to stay abreast of the latest deepfake detection technologies.

Takeaways

The hyperrealism of deepfakes is a growing concern. Deepfakes exploit the natural human tendency to trust familiar faces and voices, making it easy for fraudsters to deceive and manipulate.

Businesses must implement advanced identity verification and deepfake detection tools to maintain the integrity of their systems and operations. HyperVerge’s fraud prevention suite is a robust anti-fraud solution designed to safeguard your financial assets and preserve your reputation. It’s the one tool you need to prevent fraud at every customer touchpoint.