A convincing deepfake can clear a biometric selfie check in under ten seconds.

What was once a high-effort “special effect” is now sold as scalable, plug-and-play fraud-as-a-service (FaaS) for a few dollars.

For businesses, the threat has moved beyond viral misinformation. Today, synthetic media is a direct weapon used to impersonate real users, bypass remote KYC, and infiltrate financial systems. In India alone, deepfake fraud attempts rose by an estimated 280% in early 2024, with attackers using “persona kits” to automate identity theft at machine speed.

For regulated sectors like banking, fintech, and crypto, detecting synthetic media is no longer an “extra” layer; it is a core security requirement. Relying on basic liveness detection alone is like bringing a lock to a cyberwar. You need a system that can analyze facial micro-movements, biometric signals, and pixel-level artifacts in real time.

To help you secure your user journey, we’ve evaluated the 8 best deepfake detection tools in 2026, comparing their detection accuracy, deployment flexibility, and specific fit for the Indian regulatory landscape.

TL;DR:

- The Threat: Deepfakes have evolved from online misinformation to high-speed identity fraud. In 2026, synthetic media can bypass standard “selfie checks” and remote KYC systems in seconds.

- Market Impact: Global deepfake incidents have surged, with India seeing a 280% increase, making it a primary target for fintech and banking scams.

- The Solution: Traditional liveness detection (checking for a “physical” person) is no longer enough. Businesses now require a second layer of deepfake detection to analyze micro-movements, skin textures, and metadata inconsistencies.

- Top Tools: This guide evaluates 8 leading solutions, highlighting HyperVerge for KYC compliance, Sensity AI for forensics, and Pindrop for audio security.

- Compliance: While deepfake detection is not yet explicitly mandated, RBI and MeitY guidance is pushing Indian enterprises toward mandatory synthetic media labeling and robust anti-spoofing controls.

The Deepfake Threat Landscape in 2026

Deepfakes are no longer limited to high-effort attacks or isolated cases of media manipulation. What has changed in 2026 is accessibility. Now, deepfakes can be produced quickly, cheaply, and at a quality that is increasingly difficult to distinguish from genuine user input.

How GenAI Tools Are Making Deepfakes Easier to Create

Recent advances in generative AI have dramatically lowered the skill required to create convincing synthetic content. Consumer-grade tools can now generate realistic face swaps, voice clones, and synthetic avatars using only a short audio clip, a few images, or a brief video sample.

What once required technical expertise now often just takes a smartphone, a cloud API, or a low-cost subscription tool.

Deepfake Fraud in India: Banking, KYC & Identity Scams

The fraud impact is already measurable. Global deepfake incidents rose by nearly 245% year over year. Meanwhile, India recorded an estimated 280% increase in early 2024, making it one of the fastest-growing markets for synthetic fraud.

The Reserve Bank of India has also warned about fake videos of officials being used to spread misleading financial advice, showing how synthetic media is entering both consumer scams and institutional fraud.

The pressure is strongest in digital identity systems. One U.S. industry study reported a 700% rise in synthetic identity attacks linked to onboarding fraud. Sectors such as gaming, crypto, and fintech have seen some of the sharpest increases in deepfake misuse.

Why Liveness Detection Alone Is No Longer Enough

Traditional liveness checks were designed to stop simple spoofing methods such as printed photos, masks, or replayed videos. But many current deepfakes can imitate those same prompts with enough realism to pass basic interaction checks.

The threat has also shifted toward injection attacks, where manipulated video is fed directly into the verification stream instead of being shown to a camera. Recent threat reporting has shown sharp growth in both virtual camera attacks and face-swap fraud, highlighting how quickly spoofing methods are evolving.

That is why deepfake detection is increasingly treated as a second decision layer, one that analyzes synthetic artifacts, facial consistency, and media-level anomalies that standard liveness systems may miss.
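To make the "second decision layer" idea concrete, here is a minimal sketch of how a liveness score and a deepfake score might be combined into one onboarding decision. The score ranges, threshold values, and names (`SessionScores`, `decide`) are assumptions for illustration, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionScores:
    liveness: float  # assumed scale: 0.0 (spoof) to 1.0 (live person present)
    deepfake: float  # assumed scale: 0.0 (authentic) to 1.0 (likely synthetic)


# Hypothetical thresholds; real values would be tuned on labeled traffic.
LIVENESS_MIN = 0.90
DEEPFAKE_REVIEW = 0.30
DEEPFAKE_REJECT = 0.70


def decide(scores: SessionScores) -> str:
    """Return 'approve', 'manual_review', or 'reject' for one session."""
    # Layer 1: presence check (photos, masks, replays).
    if scores.liveness < LIVENESS_MIN:
        return "reject"
    # Layer 2: synthetic-media check, applied even when liveness passes.
    if scores.deepfake >= DEEPFAKE_REJECT:
        return "reject"
    if scores.deepfake >= DEEPFAKE_REVIEW:
        return "manual_review"
    return "approve"
```

The key design choice is that a passing liveness score never overrides a high deepfake score, which is the gap an injected face-swap stream would otherwise exploit.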

What Is Deepfake Detection?

Deepfake detection is the process of identifying whether an image, video, audio clip, or live camera input has been artificially generated or manipulated using AI. In business environments, it helps you distinguish genuine users and authentic media from synthetic content designed to mislead systems or impersonate real people.

Most people associate deepfakes with edited videos, but the threat now extends far beyond visuals. In 2026, deepfake attacks increasingly use voice, facial movement, and visual identity to create highly convincing impersonations.

A deepfake can take several forms:

- Video deepfakes, where a person’s face, expressions, or lip movements are artificially altered

- Image deepfakes, where AI-generated faces or manipulated identity documents are presented as real

- Audio deepfakes, where cloned voices imitate real individuals in phone calls, voice authentication flows, or recorded messages

- Multimodal deepfakes, where video and audio are generated together to mimic a real person more convincingly

What makes multimodal deepfakes especially difficult to detect is that they reduce the obvious inconsistencies that older fake content often had. To detect these manipulations, modern systems analyze signals that are difficult for generative models to replicate consistently. These include:

- Facial micro-movements

- Blink patterns

- Skin texture irregularities

- Voice frequency distortions

- Speech timing mismatches

- Metadata inconsistencies

- Behavioral anomalies during live capture
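As one illustration of the signals listed above, blink patterns are often measured from an eye-openness value tracked per video frame (commonly called an eye aspect ratio). The sketch below assumes a hypothetical upstream face-landmark step has already produced that per-frame series; the threshold and minimum-frame values are illustrative assumptions.

```python
def count_blinks(ear_series, closed_threshold=0.2, min_closed_frames=2):
    """Count completed blinks in a per-frame eye-openness series.

    A blink is a run of at least `min_closed_frames` consecutive frames
    below `closed_threshold`, followed by the eye reopening.
    """
    blinks = 0
    closed_run = 0
    for ear in ear_series:
        if ear < closed_threshold:
            closed_run += 1
        else:
            if closed_run >= min_closed_frames:
                blinks += 1
            closed_run = 0
    return blinks


def blinks_per_minute(ear_series, fps):
    """Convert the blink count into a rate for comparison across clips."""
    if not ear_series or fps <= 0:
        raise ValueError("need a non-empty series and a positive frame rate")
    return count_blinks(ear_series) * 60 * fps / len(ear_series)
```

A rate far outside what a detector has seen from genuine users (for example, no blinks at all over a long clip) would be one weak signal among many, not a verdict on its own.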

For identity verification use cases, deepfake detection often works alongside liveness detection, which determines whether the person in front of the camera is physically present and interacting naturally, rather than presenting synthetic or replayed content.

| Think you can spot a deepfake? Test yourself with HyperVerge’s interactive Deepfake Game. |

How We Evaluated These Tools

To compare these deepfake detection tools fairly, we focused on how well they perform in real business environments, not just on claimed capabilities. The goal was to assess whether each tool can reliably detect synthetic fraud while fitting into live digital workflows.

Key Evaluation Criteria

Each tool was evaluated against a set of factors that directly affect adoption, performance, and fraud prevention outcomes.

- Detection accuracy: How reliably the tool identifies manipulated images, videos, or synthetic identities without creating excessive false positives.

- Real-time capability: Whether detection happens instantly during onboarding, authentication, or live verification flows.

- API and SDK availability: How easily the solution integrates into web, mobile, or enterprise identity systems.

- India compliance fit: Whether the tool aligns with regulated digital verification needs in sectors such as BFSI, fintech, and telecom.

- Audio and video support: Whether it can detect both visual deepfakes and voice-based manipulation.

- Pricing and deployment fit: Whether the product is practical for enterprise use based on scale, flexibility, and implementation needs.

Taken together, these criteria help separate tools built for standalone media analysis from those designed for fraud prevention inside digital identity journeys.
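For the detection accuracy criterion, a buyer running a pilot can measure two error rates on their own labeled samples. The sketch below assumes each sample has a "synthetic likelihood" score between 0 and 1 and is flagged when the score meets a threshold; the function name and score convention are assumptions.

```python
def error_rates(genuine_scores, attack_scores, threshold):
    """Return (false_accept_rate, false_reject_rate) at a given threshold.

    A sample is flagged as synthetic when its score >= threshold.
    - False accept: a deepfake that is NOT flagged.
    - False reject: a genuine user who IS flagged.
    """
    if not genuine_scores or not attack_scores:
        raise ValueError("both sample sets must be non-empty")
    false_accepts = sum(1 for s in attack_scores if s < threshold)
    false_rejects = sum(1 for s in genuine_scores if s >= threshold)
    far = false_accepts / len(attack_scores)
    frr = false_rejects / len(genuine_scores)
    return far, frr
```

Sweeping the threshold shows the trade-off directly: lowering it catches more deepfakes but flags more genuine users, which is why a single headline accuracy figure is hard to compare across vendors.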

Key Features to Look for in a Deepfake Detection Tool

Not every deepfake detection tool is built to handle the same level of fraud risk. Use this checklist when evaluating a tool:

- Can it detect manipulated images, videos, and audio? A strong solution should go beyond face swaps and identify synthetic inputs across several formats.

- Does it work in real time? Detection should happen during live onboarding, authentication, or verification flows without slowing down the user journey.

- Does it analyze biometric signals? Look for systems that examine blink patterns, facial micro-movements, or skin texture irregularities that are hard for AI-generated media to mimic.

- Can it compare voice and video together? Cross-modal analysis helps detect mismatches between speech, lip movement, and facial behavior.

- Does it check frame-by-frame consistency? Temporal analysis helps identify unnatural motion, lighting shifts, or facial distortions across video frames.

- Can it detect lip-sync mismatches? Differences between spoken words and mouth movement remain a common indicator of synthetic video.

A tool that checks across multiple layers is usually more reliable than one that depends on a single detection method.
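The lip-sync check in the list above can be sketched as a correlation between how open the mouth is and how loud the audio is, frame by frame. This is a simplified illustration: both input series are assumed to come from hypothetical upstream steps, and the 0.5 cutoff is an arbitrary example value.

```python
import math


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("sequences must have equal length of at least 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no usable sync signal
    return cov / math.sqrt(var_x * var_y)


def lip_sync_suspicious(mouth_openness, audio_energy, min_correlation=0.5):
    """Flag a clip when mouth movement does not track audio loudness."""
    return pearson(mouth_openness, audio_energy) < min_correlation
```

Production systems typically use learned audio-visual models rather than a single correlation, but the principle is the same: speech and mouth movement should move together.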

8 Best Deepfake Detection Tools in 2026

Deepfake volume has grown rapidly over the last few years: synthetic video incidents increased by over 550% between 2019 and 2024. That growth has widened the gap between basic detection systems and tools built for real-world fraud prevention, especially across identity, media, and voice workflows.

HyperVerge: Best for KYC & Onboarding Liveness

HyperVerge builds deepfake detection into its identity verification stack. Its AI models are designed to detect replay attacks, synthetic faces, and injection-based fraud during KYC journeys, while also linking face verification with document and identity checks.

Best for:

- Banks, NBFCs, fintechs, and regulated businesses running video onboarding or customer verification flows

- Enterprises that need deepfake protection built directly into identity verification journeys

Strengths:

- Its liveness engine is tested for conformance with ISO/IEC 30107-3 PAD standards, with near-zero false acceptance on spoof attacks

- HyperVerge reports 99.9% accuracy on live-person selfies, which is especially relevant as synthetic identity fraud rises

- It holds globally recognized facial recognition certifications through iBeta and NIST, which adds credibility in regulated deployments

Limitations:

- HyperVerge’s customers haven’t reported any significant limitations.

Pricing:

HyperVerge offers tailored pricing based on each client’s needs and requirements. Detailed pricing and a customized demo are available on request.

India fit:

- Strong fit for Indian businesses because it’s built around local KYC and onboarding requirements

- Aligns well with RBI-led video verification workflows

- Regional deployment flexibility supports data residency and compliance needs

Sensity AI: Best for Visual Threat Intelligence

Sensity AI is built for forensic-level visual analysis, using an ensemble of detection models to identify deepfakes.

Best for:

- Organizations that want a forensic-level perspective on visual AI threats, such as government agencies and large enterprises

- Teams that need forensic analysis of manipulated image and video content rather than onboarding-focused detection

Strengths:

- Sensity’s technology is well-regarded in research and industry

- Detects manipulated media by analyzing subtle visual artifacts and generative inconsistencies, such as irregular facial geometry and biometric inconsistencies

- Supports both cloud and on-premise deployment depending on enterprise needs and can screen large video libraries

Limitations:

- More specialized than plug-and-play API tools, which can make integration heavier

- Real-time streaming support is unclear; primarily designed for file-based or near real-time analysis

- Does not natively analyze audio or text-based synthetic content

- Enterprise deployment may be costly for smaller teams

Pricing:

- Pricing is custom quoted based on deployment scope

India fit:

- No India-specific compliance layer or KYC alignment

- More relevant for Indian enterprises that need high-end visual threat screening than regulated onboarding use cases

Reality Defender: Best for Real-Time Screening

Reality Defender provides API and SDK-based deepfake detection built for live environments. Its detection engine uses continuously updated AI models to identify face manipulation, lip-sync mismatches, and synthetic audio during active media streams, making it suitable for real-time screening rather than post-event analysis.

Best for:

- Teams that need fast screening across audio and video inputs at scale, such as contact centers

Strengths:

- Designed for speed, with near-instant scanning and low-latency response

- Covers both visual and audio-based deepfake signals in one detection layer

- Recognized in enterprise security discussions, including Gartner coverage

- Offers a free developer API with limited scans, which helps teams test deployment before scaling

- Regular model updates are designed to keep pace with rapidly evolving synthetic fraud methods

Limitations:

- Available primarily as a SaaS API, with no clear on-premise deployment option

- Built for media screening rather than deep integration into identity verification workflows

- Real-time systems can be less reliable than forensic analysis in edge cases

Pricing:

- Free tier available for up to 50 scans per month

- Enterprise pricing is custom, based on volume and deployment requirements

India fit:

- No India-specific compliance certifications

- Useful for pilot deployments or layered fraud screening alongside KYC systems

Pindrop Pulse: Best for Audio Deepfake Detection

Pindrop is built to detect synthesized, cloned, or replayed voices in live calls. It analyzes voice fingerprints and behavioral audio signals to distinguish genuine callers from synthetic speech. It integrates directly with contact center and IVR systems for real-time fraud alerts.

Best for:

- Banks, insurers, and enterprises with large call centers or fraud operations

- Businesses that rely on voice biometrics or phone-based authentication

Strengths:

- Reports up to 99% detection on known voice-cloning systems and over 90% detection on previously unseen models

- Adds voice liveness-style checks through behavioral biometrics

Limitations:

- Focused entirely on audio and does not analyze video or image-based deepfakes

- Best suited to telephony environments rather than broader digital verification workflows

- Requires integration into existing call infrastructure through SIP or IVR systems

Pricing:

- Public pricing is not listed

India fit:

- No India-specific regulatory layer, but relevant for institutions handling phone-led fraud detection

- Could become more valuable as voice-led verification expands across Indian financial services

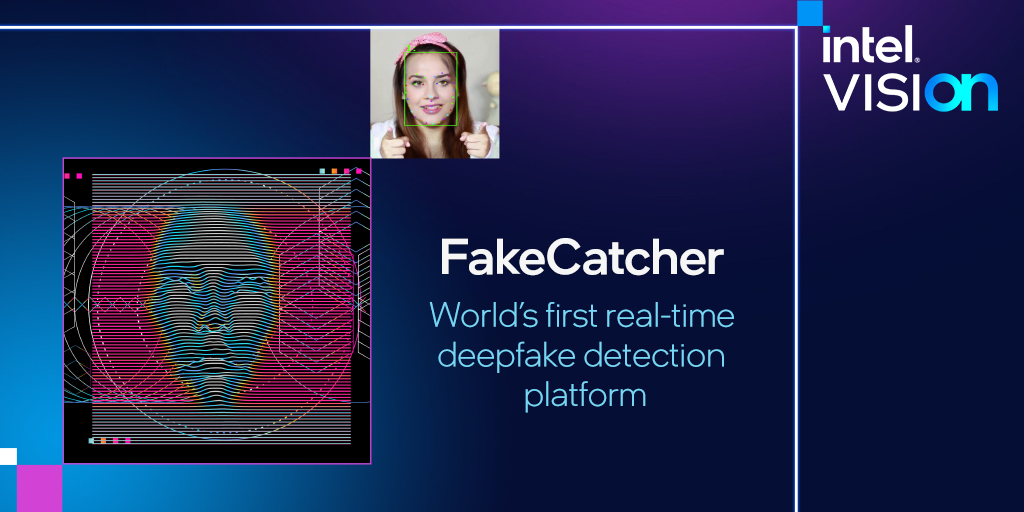

Intel FakeCatcher: Best Biological Signals Detection

Intel developed FakeCatcher as a research-led deepfake detector that analyzes physiological signals in facial video, especially the subtle blood-flow patterns that naturally occur in live human skin. The system works on the idea that synthetic faces may reproduce appearance convincingly, but often fail to replicate pulse-linked biological signals consistently across video frames.
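The blood-flow idea can be illustrated with a toy example. The sketch below is not Intel’s implementation. It assumes a hypothetical upstream step has already averaged the skin brightness of the face region for each frame, and it simply looks for a dominant frequency in a typical heart-rate band using a naive discrete Fourier transform. The function name, band limits, and input format are all assumptions.

```python
import math


def dominant_frequency_hz(signal, fps, min_hz=0.7, max_hz=4.0):
    """Return (frequency_hz, power) of the strongest component in the band.

    `signal` is one brightness value per frame; `fps` is the frame rate.
    The default band (0.7 to 4.0 Hz) is an assumed range of 42 to 240 bpm.
    Returns (0.0, 0.0) when no periodic component is found.
    """
    n = len(signal)
    if n < 2 or fps <= 0:
        raise ValueError("need at least 2 samples and a positive frame rate")
    mean = sum(signal) / n
    centered = [s - mean for s in signal]  # remove the constant offset
    best_freq, best_power = 0.0, 0.0
    for k in range(1, n // 2 + 1):
        freq = k * fps / n
        if not (min_hz <= freq <= max_hz):
            continue
        angle = 2 * math.pi * k / n
        re = sum(c * math.cos(angle * i) for i, c in enumerate(centered))
        im = sum(c * math.sin(angle * i) for i, c in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq, best_power
```

A live face would be expected to show a clear, stable peak in this band, while a synthetic face may show none or an inconsistent one. Real systems add motion compensation, lighting normalization, and learned classifiers on top of this basic signal.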

Best for:

- High-assurance experimental environments such as national identity systems or advanced fraud research

- Organizations exploring next-generation detection methods beyond visual artifact analysis

Strengths:

- Uses blood-flow analysis, which is fundamentally different from traditional pixel-based detection

- Reported to achieve around 96% accuracy in controlled testing

- Supports near real-time inference when deployed on Intel hardware

Limitations:

- Still experimental and not available as a commercial enterprise product

- Requires stable lighting and clear facial visibility for stronger performance

- Heavy compute requirements can limit use on low-power devices

- Focused only on video and does not support audio analysis

Pricing:

- No commercial pricing is available

- Currently remains a research initiative from Intel Labs

India fit:

- More relevant as a future reference point for high-assurance identity systems than immediate business deployment

SentinelOne: Best for Government & Enterprise

SentinelOne approaches deepfake detection as part of broader enterprise threat intelligence rather than a standalone verification product. Its security platform incorporates synthetic media signals into incident detection and response workflows, helping security teams identify deepfake-related threats alongside wider cyber activity.

Best for:

- Governments, public sector organizations, and large enterprises managing advanced cyber risk

- Security teams that want deepfake awareness embedded into broader threat monitoring

Strengths:

- Connected to a wider security stack, including endpoint detection, threat intelligence, and incident response

- Can correlate synthetic media signals with other indicators of compromise across enterprise systems

- Built for environments where deepfakes may appear as part of coordinated fraud or cyber campaigns

- Strong fit for security operations centers already using AI-led threat monitoring

Limitations:

- Does not offer a dedicated deepfake API or onboarding-focused detection product

- May be too broad and expensive for businesses looking only for KYC or media screening

- Detection outputs are oriented toward cyber investigation rather than customer verification

Pricing:

- Enterprise cybersecurity pricing through custom commercial contracts

- Public pricing is not listed

India fit:

- Relevant for large financial institutions, public sector bodies, and high-security environments

- Better suited to cyber defense use cases than regulated onboarding or customer verification workflows

Hive Moderation: Best High-Volume API

Hive offers a scalable API for detecting AI-generated content across images, video, audio, text, and even music. It works like an AI content screening layer, returning confidence scores for uploaded media.

Best for:

- Social platforms, marketplaces, forums, and enterprises handling large volumes of user-generated content

- Teams that want a ready-to-deploy API without building detection models internally

Strengths:

- Covers many content types, including image, video, audio, text, and code moderation

- Frequently updated to detect newer generative AI models as they emerge

- Can sometimes identify the source model used to create synthetic content

- High throughput makes it suitable for large-scale moderation environments

- Offers browser demos and lightweight testing tools for quick evaluation

Limitations:

- Detection accuracy varies across formats, with image and video stronger than newer audio detection layers

- Operates largely as a black-box API, with limited explainability

- Sending media to external servers may raise privacy concerns in some deployments

- Human review is not built into the detection workflow by default

Pricing:

- Pay-as-you-go API pricing based on usage volume

- Team plans and enterprise contracts are available for larger deployments

India fit:

- Can be integrated into Indian digital platforms and moderation workflows

- More suitable for content screening than regulated KYC use cases

- Synthetic audio detection could become relevant as voice-led verification expands

Resemble Detect: Best for Synthetic Voice Detection

Resemble offers deepfake detection through Resemble Detect, a tool aimed at identifying synthetic content in uploaded digital media. The platform is particularly focused on distinguishing authentic audio from AI-generated audio.

Best for:

It is best suited for businesses where voice trust matters directly, such as:

- Call centers and customer support teams

- Apps using voice authentication

- Platforms reviewing user-submitted audio content

Strengths:

- Supports cloud, on-premise, and air-gapped deployment for sensitive enterprise environments

- Fits into broader voice-security workflows because the platform also supports speech synthesis, voice biometrics, and speech-to-speech applications

- Useful where voice trust needs to be verified in real time

Limitations:

- Focused mainly on voice and speech rather than image or video deepfake detection

- Per-second pricing can become expensive at scale

- Works best when integrated through Resemble’s APIs or broader platform stack

Pricing:

- Detection starts at approximately $0.04 per second

- Volume discounts are available for enterprise deployments

- Custom enterprise plans include SLA-backed support and dedicated infrastructure options
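To see how per-second pricing adds up, here is a rough cost estimate. Only the $0.04 rate comes from the listing above; the call volume and call length in the usage note are made-up illustration values, and volume discounts are ignored.

```python
# Listed starting rate of about $0.04 per second, stored in cents
# so the arithmetic stays exact.
PRICE_CENTS_PER_SECOND = 4


def monthly_cost_usd(calls_per_month, avg_seconds_per_call,
                     price_cents_per_second=PRICE_CENTS_PER_SECOND):
    """Estimate monthly spend in US dollars, before any volume discount."""
    total_seconds = calls_per_month * avg_seconds_per_call
    return total_seconds * price_cents_per_second / 100
```

For example, a hypothetical contact center screening 10,000 calls a month at 30 seconds each would analyze 300,000 seconds of audio, or about $12,000 per month at the listed rate.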

India fit:

- No India-specific compliance layer, but on-premise deployment can support data residency needs

- Increasingly relevant for sectors such as fintech, telecom, and voice-led verification workflows

Deepfake Detection Tool Comparison Table

The table below compares the leading deepfake detection tools across media type, real-time support, API availability, deployment options, India compliance, and pricing.

| Tool | Media type | Real-time | API | On-premise | India compliance | Pricing |
| --- | --- | --- | --- | --- | --- | --- |
| HyperVerge | Image, selfie, live video | Yes | Yes | Regional deployment flexibility | Strong alignment with Indian KYC workflows | Custom enterprise pricing |
| Sensity AI | Image, video, 3D avatars | Not clearly positioned for live streaming | Not clearly positioned for live streaming | Yes | No specific India compliance layer | Custom enterprise pricing |
| Reality Defender | Video, audio | Yes | Yes | No clear option | No India-specific certifications | Free tier + custom enterprise pricing |
| Pindrop | Audio | Yes | Yes | Enterprise deployment dependent | No specific India compliance layer | Custom enterprise pricing |
| Intel | Video | Yes (research setting) | Yes (research setting) | No commercial deployment | Not applicable | No commercial pricing |
| SentinelOne | Synthetic media signals within cyber monitoring | Yes within enterprise security workflows | Yes within enterprise security workflows | Enterprise deployment dependent | Relevant for high-security institutions | Custom enterprise pricing |
| Hive | Image, video, audio, text, music | Yes | Yes | Enterprise option available | No specific India compliance layer | Pay-as-you-go + enterprise plans |
| Resemble AI | Audio, speech | Yes | Yes | Yes | No specific India compliance layer | $0.04/sec + enterprise pricing |

Deepfake Detection in India: Regulatory & Compliance Context

In India, deepfake detection is increasingly becoming a compliance concern, especially for businesses that rely on remote onboarding and digital identity verification. While regulations do not yet mandate a dedicated deepfake detection layer, recent guidance from financial and technology regulators makes fraud prevention expectations much clearer.

RBI Video KYC Guidelines and Deepfake Risks

RBI requires regulated entities using Video-based Customer Identification Process (V-CIP) to conduct live, real-time customer interaction, verify original documents during the session, and maintain full recordings with secure audit trails.

The guidance does not explicitly mention deepfake detection, but it does require strong liveness assurance and robust anti-spoofing controls. As deepfake attacks become more convincing, banks and NBFCs increasingly need AI-based checks that can identify manipulated video, synthetic faces, or replay attempts during onboarding.

MeitY’s 2024 Advisory on AI-Generated Content

The Ministry of Electronics and Information Technology (MeitY) strengthened India’s position on synthetic media in 2024, directing digital platforms to identify and label AI-generated content.

The advisory encourages traceability through metadata and makes it clear that synthetically generated content should be distinguishable from authentic human-created media. For businesses, this signals that tools capable of detecting or flagging AI-generated media may become increasingly relevant to future compliance expectations.

IT Act Implications for Deepfake Fraud

The Information Technology Act, 2000, already creates legal exposure for businesses when synthetic media is used for impersonation or fraud. Under Section 66C, identity theft involving digital credentials or impersonation is punishable. Meanwhile, Section 66D applies to cheating by personation through computer resources, with penalties that can extend up to three years of imprisonment.

This means a deepfake used during onboarding, account access, or digital verification can fall within existing cyber fraud provisions if used to impersonate a real person or mislead a regulated system.

Indian courts have also started treating deepfake-enabled scams as a form of cyber fraud, which increases the importance of proving that reasonable fraud controls were in place. For financial institutions, this makes detection technology more than a technical safeguard. It can also support due diligence if fraud is later investigated.

For regulated businesses, this means a successful deepfake attack may create not only fraud loss but also legal exposure if preventive controls are found to be inadequate.

What Indian Fintechs, Banks & NBFCs Need to Know

For financial institutions, the practical implication is clear. Deepfake detection should increasingly be treated as part of digital fraud controls rather than a separate experimental layer.

- Detection tools should fit directly into video KYC and onboarding workflows.

- Session logs, flags, and verification records should be retained for audit readiness.

- Detection models need regular updates as synthetic attack methods evolve.

- Compliance teams should track new guidance from RBI and MeitY closely.

As digital verification scales, the ability to prove both liveness and media authenticity is becoming central to regulatory readiness in India.
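For the audit-readiness point above, one common pattern is to make verification logs tamper-evident by chaining each record to the hash of the previous one. The sketch below is a minimal illustration using Python’s standard library; the record fields and function names are hypothetical, and a production system would also need secure storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(prev_hash, record):
    """Hash the previous entry's hash together with this record."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(log, record):
    """Append a verification record, linked to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    }
    log.append(entry)
    return entry


def verify_log(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash depends on everything before it, editing an old decision after the fact breaks verification for that entry and every later one.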

| HyperVerge helps detect spoofing, synthetic faces, and injection attacks in real time during onboarding. See how it works. |

Liveness Detection vs. Deepfake Detection: What’s the Difference?

Liveness detection verifies whether a real person is physically present during a verification session. Deepfake detection checks whether the face, voice, or media being presented has been artificially generated or manipulated using AI. In digital identity workflows, both are important because they address different levels of fraud risk.

The difference lies in the type of attack each technology is designed to stop.

- Liveness detection helps detect spoofing attempts such as printed photos, replayed videos, masks, or screen-based impersonation.

- Deepfake detection identifies synthetic manipulation such as AI-generated faces, face swaps, cloned voices, or altered live video streams.

The underlying technology also differs. Liveness detection typically relies on presence signals such as blink response, depth analysis, facial motion, or user interaction during capture. Deepfake detection analyzes synthetic artifacts, facial inconsistencies, voice anomalies, and generation patterns that are difficult to spot manually.

The threat level is also different. Basic spoof attacks usually use static or replayed media, while deepfake attacks use generative AI to imitate natural expressions, speech, and facial behavior more convincingly.

For high-risk digital onboarding, using only one layer may leave gaps. A user can appear live on camera while still presenting manipulated or synthetic media, which is why both checks increasingly work together in fraud prevention systems.

How to Choose a Deepfake Detection Tool for Your Business

The right deepfake detection tool depends on where fraud enters your workflow, how much verification risk you carry, and how quickly decisions need to happen. A solution built for regulated onboarding may not suit high-volume consumer platforms, while lightweight APIs may not meet compliance-heavy requirements.

For Banks & NBFCs

Banks and NBFCs need the highest level of assurance because deepfake detection sits directly inside regulated KYC journeys. Tools with strong liveness performance, anti-spoofing conformance testing against standards such as ISO/IEC 30107-3, and proven identity verification experience are better suited for this environment.

Look for solutions that integrate easily with video KYC workflows, support secure audit logs, and perform reliably across commonly used Indian identity documents. Private cloud or on-premise deployment may also matter where internal compliance controls are stricter.

For Gaming & Crypto Platforms

Gaming and crypto platforms usually face high fraud volume, fast onboarding cycles, and globally distributed users. In these environments, speed matters as much as detection depth.

A suitable tool should work in real time, scale across large volumes of live traffic, and support layered fraud checks beyond face verification. Audio analysis, device intelligence, and adaptive model updates can add protection where synthetic impersonation evolves quickly.

For Marketplaces & Logistics

Marketplaces and logistics platforms often use selfie and ID checks for sellers, drivers, or delivery partners, where fraud risk is operational rather than heavily regulated.

In most cases, lightweight API-based tools are sufficient if they combine face verification with document checks and fit easily into existing onboarding systems. Simpler deployment and clear monitoring dashboards usually matter more here than enterprise-scale infrastructure.

Limitations of Current Deepfake Detection Technology

Deepfake detection has improved rapidly. But no tool can guarantee perfect detection across every format, device, and attack type. Performance often depends on media quality, attack sophistication, and how much usable signal is available during analysis.

Why Compressed Video Remains a Challenge

Compressed video often removes the subtle visual details that detection models rely on. These include texture variation, facial micro-movements, and frame-level inconsistencies.

This becomes a challenge in real-world onboarding because mobile uploads, low bandwidth connections, and platform compression can reduce the quality of facial data before analysis begins. Even strong models may lose confidence when artifacts are introduced by compression rather than manipulation.

The Arms Race: Deepfake Generators vs. Detectors

Detection systems improve by learning synthetic patterns. But generative models improve just as quickly by reducing those same signals. Newer deepfake generation models produce more natural facial movement, better lighting consistency, and stronger voice alignment. This means detectors need constant retraining to remain effective. In practice, this creates an ongoing arms race where static models become outdated quickly if they are not continuously updated.
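One practical way to notice that a detector is falling behind is to replay a fresh set of known synthetic samples on a schedule and compare the catch rate with the rate measured at deployment. The sketch below is an assumed monitoring rule, with a hypothetical function name and an arbitrary five-point tolerance.

```python
def needs_retraining(baseline_detection_rate, recent_outcomes, max_drop=0.05):
    """Decide whether detection has degraded enough to trigger retraining.

    `baseline_detection_rate` is the catch rate measured at deployment.
    `recent_outcomes` is a list of booleans, one per known-synthetic test
    sample, True when the detector caught it.
    """
    if not recent_outcomes:
        raise ValueError("recent_outcomes must not be empty")
    recent_rate = sum(recent_outcomes) / len(recent_outcomes)
    return (baseline_detection_rate - recent_rate) > max_drop
```

The check is only as good as the test set: it needs to be refreshed with samples from newer generation tools, otherwise a stale benchmark will keep reporting healthy numbers.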

Final Thoughts

Deepfake detection is no longer just about identifying manipulated media. For businesses, it is about preventing fraud without disrupting genuine user journeys. The right tool should match your risk level, integrate into existing workflows, and adapt as synthetic attacks evolve.

For regulated sectors, solutions that combine deepfake detection with liveness checks and strong verification controls are often the most practical choice. As fraud techniques become more advanced, businesses will need detection systems that are accurate, scalable, and built for real-time decision-making.