Fraud losses reported to the RBI surged 715% in the first half of FY2024-25, hitting a staggering INR 21,367 crore ($2.56 billion). With financial services remaining the primary target for bad actors, the industry is facing a fundamental shift in the nature of identity theft.

India’s Video KYC (V-CIP) framework was originally designed for a simpler era, one where the primary threat was a fraudster holding a physical photograph in front of a lens.

Today’s sophisticated attackers bypass the camera entirely. By injecting AI-generated video streams directly into sessions or using real-time synthetic overlays, they can easily circumvent legacy liveness detection systems.

In 2026, the critical question is no longer whether your platform has liveness detection, but whether that detection is robust enough to stop the specific deepfake vectors currently targeting Indian banks and fintechs.

This article breaks down the updated RBI guidelines on deepfake prevention, provides a framework to assess your current implementation, and explores what genuinely compliant detection looks like in the age of generative AI.

What does the RBI’s video KYC framework say about deepfake detection and compliance?

India introduced V-CIP under the RBI Master Direction DBR.AML.BC.No.81/14.01.001/2015-16, becoming one of the first countries globally to formally recognise video KYC as equivalent to in-person verification.

The framework has since been amended multiple times, each update tightening the technical requirements as digital onboarding evolved. But the parts that matter most for deepfake detection and RBI compliance are buried in the technical requirements of Paragraph 18, which governs V-CIP infrastructure, procedure, and data management.

What does Paragraph 18 actually say about technology?

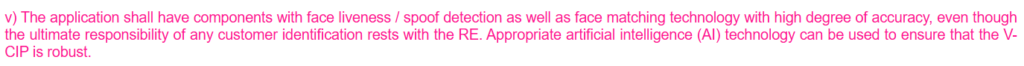

The Master Direction states explicitly that the V-CIP application must have ‘components with face liveness/spoof detection as well as face matching technology with a high degree of accuracy.’ It then adds that ‘Appropriate Artificial Intelligence (AI) technology can be used to ensure that the V-CIP is robust.’

That phrase ‘appropriate AI technology’ is significant. RBI is not prescribing a specific method. It is prescribing an outcome: robustness. And robustness, in the context of today’s deepfake threats, means something considerably more than a blink-and-turn check.

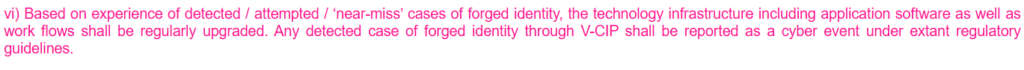

The circular also mandates that, based on detected or near-miss cases of forged identity, the technology infrastructure must be regularly upgraded. And any detected case of forged identity through V-CIP must be reported as a cyber event under extant regulatory guidelines.

These two provisions, the upgrade obligation and incident reporting, are where many implementations fall short.

Who must comply?

The Master Direction applies to all Regulated Entities (REs) as defined in Paragraph 3(b)(xiv). This includes every Scheduled Commercial Bank, Regional Rural Bank, Urban Cooperative Bank, NBFC, Payment System Provider, Prepaid Payment Instrument Issuer, and other RBI-regulated financial entity.

If your organisation onboards customers remotely through video KYC, V-CIP compliance is a baseline regulatory requirement.

The infrastructure requirements (not optional)

Before a regulated entity can go live with V-CIP, the circular requires that the infrastructure meet a set of non-negotiable baseline conditions. These include:

- End-to-end encryption: The connection between the customer’s device and the V-CIP application must be encrypted to appropriate standards.

- IP-origin controls: The application must be capable of preventing connections from IP addresses outside India or from spoofed IP addresses (this is a specific technical requirement, not a best practice).

- Geo-tagging and timestamp: Video recordings must include live GPS coordinates and a date and time stamp, with sufficient quality to identify the customer beyond a reasonable doubt.

- Security testing: The infrastructure must undergo Vulnerability Assessment, Penetration Testing, and a Security Audit conducted by CERT-In empanelled auditors. These must be repeated periodically, and any critical gap must be resolved before the system goes live or remains live.

- Cloud data sovereignty: If cloud infrastructure is used, all data, including video recordings, must be transferred to the RE’s exclusively owned or leased servers immediately after the V-CIP process is completed. No data may be retained by a third-party cloud provider.
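The IP-origin control in the list above can be sketched as a simple allowlist check. This is a minimal illustration, not a reference implementation: `INDIA_CIDRS` and `is_allowed_origin` are hypothetical names, and the ranges shown are RFC 5737 documentation placeholders rather than real Indian allocations. Detecting spoofed addresses, VPNs, or proxies needs additional signals that this sketch does not cover.

```python
import ipaddress

# Hypothetical allowlist. These are RFC 5737 documentation ranges used as
# placeholders; a real deployment would load Indian allocations from a
# maintained geolocation source.
INDIA_CIDRS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def is_allowed_origin(client_ip: str) -> bool:
    """Return True only if client_ip parses and falls inside the allowlist."""
    try:
        addr = ipaddress.ip_address(client_ip)
    except ValueError:
        return False  # malformed address: reject rather than guess
    return any(addr in net for net in INDIA_CIDRS)
```

Rejecting by default (anything unparseable or outside the list is refused) matches the circular's framing of this control as a hard requirement rather than a best practice.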

The procedure requirements: What the official must do

The circular is precise on the human side of the process. Under its liveness check requirements, V-CIP must be operated only by officials of the regulated entity who are specifically trained for the purpose. These officials must be able to:

- Carry out liveness checks and detect fraudulent manipulation or suspicious conduct independently

- Vary the sequence and type of questions to establish that the interaction is real-time and not pre-recorded

- Match the customer’s photograph on the OVD/Aadhaar and PAN against the live video feed

- Reject sessions if any prompting is observed at the customer’s end

Additionally, the Master Direction explicitly encourages REs to adopt AI and machine learning technologies for ongoing due diligence monitoring, stating that such tools can ‘support effective monitoring’ of transaction patterns.

Here are the key V-CIP requirements at a glance:

| Provision | Paragraph | What it requires | Deepfake relevance |
| --- | --- | --- | --- |
| Liveness + spoof detection | 18(a)(v) | High-accuracy face liveness, spoof detection, and face matching | Mandatory detection of synthetic faces and manipulated video |
| Spoofed IP prevention | 18(a)(iii) | Block connections from spoofed IPs | Injection attack defence at the network layer |
| Forged identity upgrades | 18(a)(vi) | Upgrade infrastructure on near-miss cases; report as a cyber event | Continuous improvement and reporting obligations |
| Varied liveness questions | 18(b)(iii) | Vary the question sequence to confirm real-time interaction | Procedural defence against replay + pre-recorded attacks |
| AI/ML for monitoring | Para 36 | Adopt AI/ML for ongoing due diligence monitoring | Post-onboarding deepfake and fraud monitoring |
| New technology risk assessment | Para 62 | Assess ML/TF risk from new technologies before deployment | Deepfake as an emerging technology risk |
| Passive liveness | 18(b)(i), Aug 2025 | Liveness check must not exclude persons with special needs | Implies passive AI liveness as baseline standard |

Source: RBI Master Direction DBR.AML.BC.No.81/14.01.001/2015-16, updated August 14, 2025

RBI master circular on liveness detection (active and passive)

A basic liveness check satisfied the literal minimum of the 2020 V-CIP circular. In 2026, that same check is defeated by advanced AI spoofing and fraud attacks. The RBI’s liveness detection norms have evolved alongside these threats, and the compliance baseline has moved accordingly.

As noted above, if a regulated entity’s V-CIP system is being targeted and those attacks are succeeding or nearly succeeding, the entity has an explicit regulatory obligation under the RBI’s video KYC framework to upgrade its infrastructure. Failing to do so amounts to documented non-compliance.

The Master Direction does not use the terms ‘active liveness’ and ‘passive liveness’ explicitly. But what it does specify, under Paragraph 18, is substantive.

- First, the application must have ‘components with face liveness/spoof detection as well as face matching technology with a high degree of accuracy.’ This is a technology requirement, not just a procedural one.

- Second, the authorised official conducting the V-CIP ‘should be capable to carry out liveness check and detect any other fraudulent manipulation or suspicious conduct of the customer and act upon it.’ This means the human element is part of the liveness framework, not a replacement for automated detection.

- Third, and importantly for anti-deepfake purposes, the Direction states that ‘the sequence and/or type of questions, including those indicating the liveness of the interaction, during video interactions shall be varied in order to establish that the interactions are real-time and not pre-recorded.’ This is an active liveness requirement: it requires behavioral unpredictability that defeats replay and pre-recorded video attacks.
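The varied-question requirement in the third point can be sketched as an unpredictable draw from a prompt pool. This is a minimal illustration under stated assumptions: the `PROMPTS` list and `build_challenge_sequence` are hypothetical, and the actual prompts would be defined by the regulated entity’s own V-CIP policy.

```python
import secrets

# Hypothetical prompt pool; real prompts would be set by the RE's V-CIP policy.
PROMPTS = [
    "Turn your head to the left",
    "Read out the four digits shown on screen",
    "Hold up your PAN card next to your face",
    "State today's date",
    "Blink twice",
    "Touch your right ear",
]


def build_challenge_sequence(count: int = 3) -> list[str]:
    """Pick `count` distinct prompts in an unpredictable order."""
    if not 1 <= count <= len(PROMPTS):
        raise ValueError("count must be between 1 and the pool size")
    # SystemRandom draws from the OS entropy source, so the sequence
    # cannot be predicted from earlier sessions.
    return secrets.SystemRandom().sample(PROMPTS, count)
```

Because both the selection and the order change per session, a pre-recorded clip that answers one sequence is unlikely to match the next one.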

| The question compliance teams are not asking: Most V-CIP audits verify that liveness detection is present and that the infrastructure passed its last VAPT. Very few ask: has the liveness detection been specifically tested against injection attacks and GAN-generated faces, not just print and replay attacks? If your VAPT report does not specifically reference injection attack testing, the answer is probably no. |

How deepfakes are actually attacking onboarding flows and video KYC compliance

There are two primary attack vectors that compliance teams need to understand to meet their RBI obligations on deepfake KYC fraud prevention.

- The first is face swap attacks, where a bad actor takes a legitimate person’s identity documents and substitutes a deepfake face during the live video session. Early-generation face swaps were detectable, but modern GAN-based (Generative Adversarial Network) tools produce face swaps that defeat simple liveness checks with high reliability.

- The second, and more sophisticated, is the video injection attack. Rather than appearing in front of a real camera, the attacker feeds a pre-generated or real-time synthesized video stream directly into the device’s virtual camera layer, bypassing the physical camera entirely. The detection system sees what appears to be a live video, but is receiving a manufactured feed.

Both attack types are specifically relevant to the Master Direction’s requirement that the application ‘prevent connection from IP addresses outside India or from spoofed IP addresses’ and maintain ‘end-to-end encryption of data between customer device and the hosting point of the V-CIP application.’

These provisions were designed partly with injection-style attacks in mind.
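One weak client-side signal for the injection vector described above is the label the capture device reports. The sketch below is a naive heuristic only, assuming a hypothetical `VIRTUAL_CAMERA_HINTS` list and a device label already obtained from the client. Labels can be renamed or hidden, so this cannot stand in for stream-level injection detection.

```python
# Illustrative, non-exhaustive substrings seen in virtual camera device labels.
VIRTUAL_CAMERA_HINTS = ("obs virtual camera", "manycam", "virtual cam")


def looks_like_virtual_camera(device_label: str) -> bool:
    """Flag a capture device whose reported label matches a known hint."""
    label = device_label.strip().lower()
    return any(hint in label for hint in VIRTUAL_CAMERA_HINTS)
```

A match is a reason to escalate or reject the session; the absence of a match proves nothing about whether the feed is genuine.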

The IT Act’s ‘reasonable precautions’ standard

Paragraph 18(b)(xiii) of the Master Direction states: ‘All matters not specified under the paragraph but required under other statutes such as the Information Technology (IT) Act shall be appropriately complied with by the RE.’

Section 43A of the IT Act requires organisations handling sensitive personal data to implement and maintain ‘reasonable security practices and procedures.’ What constitutes ‘reasonable’ evolves with the threat landscape.

A court or regulator assessing whether an institution took reasonable precautions against a deepfake-enabled identity fraud in 2026 would apply a very different standard than they might have in 2021.

This is why the compliance case for GAN-level deepfake detection does not require an explicit RBI circular to be compelling.

Compliance self-assessment: Is your video KYC deepfake-ready?

Work through the following questions against your current V-CIP infrastructure to see how it maps to the V-CIP requirements relevant to deepfakes:

Infrastructure

- Is your V-CIP technology infrastructure housed in your organisation’s own premises? ☐ Yes ☐ No ☐ Partially

- If you use cloud deployment, does ownership of all data, including video recordings, rest exclusively with your organisation, and is data transferred to your server immediately after each V-CIP session? ☐ Yes ☐ No ☐ Not Sure

- Does your application block connections from IP addresses outside India and from spoofed IP addresses? ☐ Yes ☐ No ☐ Not Sure

- Have you conducted Vulnerability Assessment, Penetration Testing, and Security Audit by a CERT-In empanelled auditor? ☐ Yes ☐ No ☐ In Progress

Liveness and deepfake detection

- Does your V-CIP application include automated face liveness detection, not just face presence detection? ☐ Yes ☐ No ☐ Not Sure

- Does your liveness detection system specifically address video injection attacks? ☐ Yes ☐ No ☐ Not Sure

- Does your system have the capability to detect GAN-based or AI-generated face swaps, distinct from basic spoofing? ☐ Yes ☐ No ☐ Not Sure

- Are the questions and behavioral prompts used during V-CIP sessions varied, as required to prevent pre-recorded or replay attacks? ☐ Yes ☐ No ☐ Partially

Process and governance

- Do you have a documented process for logging detected, attempted, and near-miss cases of forged identity during V-CIP? ☐ Yes ☐ No

- Does your technology infrastructure get reviewed and upgraded in response to those logs? ☐ Yes ☐ No ☐ Ad Hoc

- Are forgery cases reported as cyber events under applicable RBI guidelines? ☐ Yes ☐ No ☐ Not Consistently

- Are all V-CIP accounts subjected to concurrent audit before being made operational? ☐ Yes ☐ No ☐ Partially

| Scoring guidance: If you answered ‘No’ or ‘Not Sure’ to three or more questions in the ‘Liveness and deepfake detection’ section, your current V-CIP implementation may not meet the effective standard for deepfake detection under the RBI framework. If you answered ‘No’ or ‘Not Sure’ to two or more questions in the ‘Infrastructure’ section, your implementation may not meet the baseline technical requirements of Paragraph 18(a), regardless of deepfake considerations. |
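The scoring guidance above reduces to counting flagged answers per section. A minimal sketch, assuming answers are collected as a hypothetical dictionary mapping section names to answer lists; the thresholds are the ones stated in the guidance, and answers such as ‘Partially’ or ‘In Progress’ are not counted because the guidance does not mention them.

```python
# Answers that count against a section, per the scoring guidance above.
FLAGGED = {"No", "Not Sure"}

# Section name -> number of flagged answers at which the section is at risk.
THRESHOLDS = {"Infrastructure": 2, "Liveness and deepfake detection": 3}


def sections_at_risk(answers: dict[str, list[str]]) -> list[str]:
    """Return the sections whose flagged-answer count meets the threshold."""
    at_risk = []
    for section, threshold in THRESHOLDS.items():
        flagged = sum(1 for a in answers.get(section, []) if a in FLAGGED)
        if flagged >= threshold:
            at_risk.append(section)
    return at_risk


example = {
    "Infrastructure": ["Yes", "Yes", "Not Sure", "In Progress"],
    "Liveness and deepfake detection": ["Yes", "No", "Not Sure", "No"],
}
print(sections_at_risk(example))  # ['Liveness and deepfake detection']
```

In the example, the infrastructure section has one flagged answer (below its threshold of two), while the liveness section has three and is reported.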

What does compliant deepfake detection look like in practice?

The Master Direction doesn’t prescribe a specific technical method for deepfake detection in video KYC. It does, however, mandate high accuracy, regular upgrades, and a documented audit trail.

In practice, that means passive liveness with GAN artefact analysis as the primary layer, with active gesture prompts as a backup. It also means injection attack detection at the stream level, not just IP filtering.

Deepfake detection must happen within the live session itself. Post-session analysis doesn’t satisfy the ‘seamless, live’ requirement under Paragraph 18. Every session needs a logged record: liveness score, face match score, GPS coordinates, timestamp, and the conducting official’s ID.
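The per-session record described above might be modelled as follows. This is a sketch under stated assumptions: the class name, field names, and the 0 to 1 score scale are illustrative and are not prescribed by the Master Direction.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class SessionAuditRecord:
    """One logged V-CIP session. Field names are illustrative."""
    session_id: str
    official_id: str
    liveness_score: float    # assumed to be normalised to 0.0-1.0
    face_match_score: float  # assumed to be normalised to 0.0-1.0
    latitude: float
    longitude: float
    timestamp_utc: str       # ISO 8601

    def __post_init__(self) -> None:
        # Validate on construction so malformed records never reach the log.
        for name in ("liveness_score", "face_match_score"):
            if not 0.0 <= getattr(self, name) <= 1.0:
                raise ValueError(f"{name} must be within [0, 1]")
        if not -90.0 <= self.latitude <= 90.0:
            raise ValueError("latitude out of range")
        if not -180.0 <= self.longitude <= 180.0:
            raise ValueError("longitude out of range")

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


record = SessionAuditRecord(
    session_id="demo-001",
    official_id="official-42",
    liveness_score=0.97,
    face_match_score=0.93,
    latitude=12.9716,
    longitude=77.5946,
    timestamp_utc=datetime.now(timezone.utc).isoformat(),
)
print(record.to_json())
```

Making the record immutable and validating it at creation keeps the audit trail consistent; where and how long records are stored is a separate decision governed by the data requirements discussed earlier.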

On model updates, quarterly is the floor. Deepfake tools evolve fast, and a model benchmarked in 2024 could have real blind spots by 2026.

How does HyperVerge support RBI V-CIP compliance?

Is your compliance team able to keep pace with every RBI revision? Do they find it hard to map the RBI’s video KYC requirements for banks onto your tech stack? HyperVerge can help.

HyperVerge’s Video KYC solution covers the full V-CIP workflow: CKYCR record management, Digilocker integration, KRA verification, and liveness detection built for the current threat environment.

One SDK, 100+ API integrations, live in under a week. Your team stays audit-ready without having to rebuild from scratch every time the circular updates.

Schedule a demo with HyperVerge’s Video KYC team today.