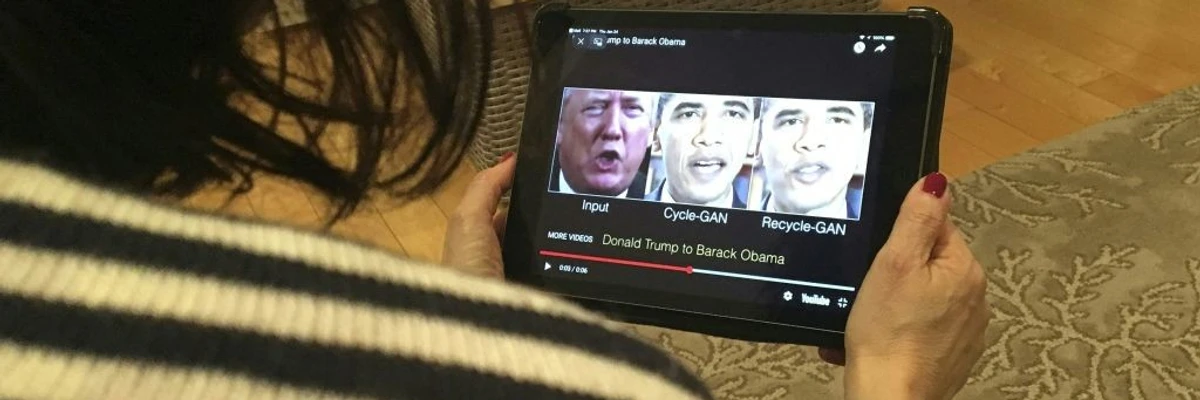

Have you heard about the celebrity deepfakes that forced stars to seek court orders for their own safety?

This is not a distant Hollywood problem. The same technology that creates those fake videos is now in the palm of your hand, generating hyper-realistic AI selfies.

AI selfie generators are trained on millions of human photos, learning every detail of our skin, expressions, and how light falls on a face. Result? Images as real as photographs!

In this guide, we throw light on how these AI tools work, reveal the most effective detection technologies available today, and provide a clear framework for businesses to protect their platforms and customers from digital impersonation and AI selfie fraud.

How do AI selfie generators actually work?

AI selfie generators create lifelike human portraits by combining data-driven learning, mathematical modeling, and image synthesis.

Through deep learning, they build a multidimensional representation of facial characteristics and then use specialized architectures, primarily Generative Adversarial Networks (GANs), Diffusion Models, and Transformers, to generate new, photorealistic selfies.

- Learning facial patterns

The process begins with collecting large datasets containing diverse faces labeled by attributes such as age, gender, and lighting.

Deep neural networks analyze these examples and extract visual features layer by layer, from basic pixel-level elements like color gradients and edges to complex concepts like facial expressions or proportions. This hierarchical learning builds a mathematical ‘latent space’ that represents how different faces relate to one another.
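To make the idea of a latent space more concrete, here is a toy Python sketch. It assumes faces have already been encoded as vectors (the 4-dimensional codes below are invented for illustration; real models typically use hundreds of dimensions). It shows that moving in a straight line between two codes produces a smooth path of intermediate points. A trained generator would decode those points into faces that morph gradually from one person to the other.

```python
import math

def lerp(z_a, z_b, t):
    """Linearly interpolate between two latent vectors: t=0 gives z_a, t=1 gives z_b."""
    return [(1 - t) * a + t * b for a, b in zip(z_a, z_b)]

def euclidean(z_a, z_b):
    """Distance between two latent codes; nearby codes tend to decode to similar faces."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(z_a, z_b)))

# Hypothetical 4-dimensional latent codes for two faces.
face_a = [0.2, -1.0, 0.5, 0.0]
face_b = [1.0, 0.6, -0.5, 0.4]

# Five evenly spaced points on the straight line from face_a to face_b.
path = [lerp(face_a, face_b, step / 4) for step in range(5)]
for step, z in enumerate(path):
    print(step, [round(v, 2) for v in z], round(euclidean(z, face_a), 3))
```

Because nearby points correspond to similar faces, a generator can sample an effectively unlimited supply of new, plausible identities from this space, which is part of what makes synthetic selfies so cheap to produce.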

- Generative Adversarial Networks (GANs)

GANs form the foundation of most early AI selfie generators. They consist of two neural networks: a generator and a discriminator that compete in a feedback loop.

- The generator creates synthetic images starting from random noise

- The discriminator evaluates each image and classifies it as real or fake based on the dataset it was trained on

As training progresses, the generator becomes better at producing images that can fool the discriminator. This adversarial process drives both networks to improve until the generated selfies appear indistinguishable from authentic photographs.
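The feedback loop above is usually expressed as two loss functions. The Python sketch below computes the standard binary cross-entropy losses for a batch of discriminator scores (each score is the discriminator's estimated probability that an image is real). The "non-saturating" generator loss shown is one common choice from the GAN literature; it is not necessarily what any particular selfie generator uses, and the scores are invented.

```python
import math

def bce(p, label):
    """Binary cross-entropy for one predicted probability p and a 0/1 label."""
    eps = 1e-12  # clamp to avoid log(0)
    p = min(max(p, eps), 1 - eps)
    return -(label * math.log(p) + (1 - label) * math.log(1 - p))

def discriminator_loss(d_real, d_fake):
    """The discriminator wants real images scored near 1 and generated ones near 0."""
    real_term = sum(bce(p, 1) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants its images scored near 1."""
    return sum(bce(p, 1) for p in d_fake) / len(d_fake)

# Early in training: the discriminator easily spots the fakes.
print(discriminator_loss([0.9, 0.95], [0.1, 0.05]), generator_loss([0.1, 0.05]))
# Near equilibrium: the discriminator can only guess (0.5 for everything).
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]), generator_loss([0.5, 0.5]))
```

When the discriminator can only guess, its loss settles near 2·ln 2 ≈ 1.386, which is the point at which generated selfies have become statistically hard to tell apart from the training photos.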

GANs are particularly effective at capturing fine facial details, such as pores, reflections, and hair texture, and at achieving symmetry. However, they can produce subtle distortions when pushed beyond the boundaries of their training data, which helped motivate newer architectures, such as diffusion models, that offer improved consistency.

- Diffusion models

Diffusion models generate images by reversing the process of adding noise. They start with a field of random pixels and gradually ‘denoise’ it through a long series of small refinement steps, typically anywhere from a few dozen to a thousand, depending on the model and sampler.

Each denoising step corrects and sharpens visual details, refining the image down to the pixel level. This iterative approach allows diffusion models to produce exceptionally clean, high-resolution selfies. It also enables more creative control, such as adjusting lighting, emotion, or background during generation.
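The noising and denoising loop can be sketched in a few lines of Python. This is a toy, assumption-heavy illustration: it uses a linear noise schedule of the kind used in the original DDPM formulation (1,000 steps) on a 4-value "image." A real system trains a neural network to predict the added noise. Here the hypothetical `oracle_predict_noise` function cheats by looking at the clean image, purely to show how the step-by-step update rule walks pure noise back to a clean result.

```python
import math
import random

T = 1000  # number of noise steps
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]  # linear schedule
alphas = [1.0 - b for b in betas]
alpha_bars = []  # cumulative product: how much original signal survives at step t
running = 1.0
for a in alphas:
    running *= a
    alpha_bars.append(running)

def add_noise(x0, t, eps):
    """Forward process: jump straight to step t by mixing signal with Gaussian noise."""
    return [math.sqrt(alpha_bars[t]) * x + math.sqrt(1 - alpha_bars[t]) * e
            for x, e in zip(x0, eps)]

def oracle_predict_noise(x_t, t, x0):
    """Hypothetical stand-in for a trained network: recovers the noise using the clean x0."""
    return [(xt - math.sqrt(alpha_bars[t]) * x) / math.sqrt(1 - alpha_bars[t])
            for xt, x in zip(x_t, x0)]

def denoise(x_start, x0_for_oracle, rng):
    """Reverse process: remove a little predicted noise per step (DDPM-style update)."""
    x = list(x_start)
    for t in reversed(range(T)):
        eps_hat = oracle_predict_noise(x, t, x0_for_oracle)
        coef = betas[t] / math.sqrt(1 - alpha_bars[t])
        mean = [(xi - coef * e) / math.sqrt(alphas[t]) for xi, e in zip(x, eps_hat)]
        sigma = math.sqrt(betas[t]) if t > 0 else 0.0  # no fresh noise on the final step
        x = [m + sigma * rng.gauss(0, 1) for m in mean]
    return x

rng = random.Random(0)
pixels = [0.8, -0.3, 0.1, 0.5]             # a tiny hypothetical "image"
noise = [rng.gauss(0, 1) for _ in pixels]
noisy = add_noise(pixels, T - 1, noise)    # almost pure noise at the last step
restored = denoise(noisy, pixels, rng)
print([round(v, 3) for v in restored])
```

In a real generator, the quality of the final selfie depends entirely on how well the learned network predicts the noise at each step, which is why these models need such large training datasets.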

The success of open-source models such as Stable Diffusion popularized this technique, making diffusion-based systems the preferred choice for realistic, editable image generation.

- Transformers for visual understanding

Transformers, originally developed for natural language processing, are now adapted for image generation. They treat images and text as sequences of data tokens.

When generating selfies, transformers interpret descriptive input (for example, ‘a smiling woman under natural light’) and map it to corresponding visual features. Using self-attention mechanisms, they maintain context across all parts of the image, ensuring alignment between facial features, lighting, and expression.
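The self-attention idea can be illustrated with a minimal Python sketch of scaled dot-product attention. It is deliberately simplified: real transformers apply learned query, key, and value projections and use many attention heads, all of which are omitted here, and the 3-dimensional "patch" embeddings are invented. The point is that every output mixes information from every part of the image, weighted by relevance.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens (no learned weights)."""
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        weights.append(w)
        # Each output is a weighted average of all tokens, so context flows across the image.
        outputs.append([sum(wj * v[i] for wj, v in zip(w, tokens)) for i in range(d)])
    return outputs, weights

# Hypothetical embeddings for four image patches.
patches = [
    [1.0, 0.0, 0.0],  # left eye
    [0.9, 0.1, 0.0],  # right eye
    [0.0, 1.0, 0.0],  # mouth
    [0.0, 0.0, 1.0],  # background
]
out, attn = self_attention(patches)
for row in attn:
    print([round(w, 2) for w in row])
```

In this toy example, the left-eye patch attends more strongly to the similar right-eye patch than to the mouth, which hints at how attention helps keep paired features consistent.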

- Output and post-processing

After generation, the system refines geometry, color balance, and facial alignment to achieve natural proportions. The result is a synthetic selfie that mirrors human realism yet originates entirely from mathematical learning.
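The article does not specify which post-processing algorithms a given generator uses. As one classic, simple example of automatic color balancing, the sketch below implements the gray-world assumption (on average, a scene should look neutral gray) on a few hypothetical RGB pixels. Production pipelines use far more sophisticated, often learned, corrections.

```python
def gray_world_balance(pixels):
    """Gray-world white balance: scale R, G, B so each channel's mean equals the overall mean.

    `pixels` is a list of (r, g, b) tuples in the 0-255 range.
    """
    n = len(pixels)
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3
    gains = [gray / m if m > 0 else 1.0 for m in means]
    balanced = []
    for p in pixels:
        # Apply the per-channel gain, then clip back into the valid 0-255 range.
        balanced.append(tuple(min(255.0, max(0.0, p[c] * gains[c])) for c in range(3)))
    return balanced

# Three pixels from a hypothetical image with a warm (reddish) color cast.
warm_pixels = [(200, 150, 120), (180, 130, 100), (220, 170, 140)]
print(gray_world_balance(warm_pixels))
```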

By merging these architectures, AI selfie generators have reached a level of precision once possible only through photography.

| 😀Fun Fact: About 70% of images you scroll through on social media might be created with AI tools like Midjourney or DALL·E, showing how much AI is shaping the visuals we see every day. |

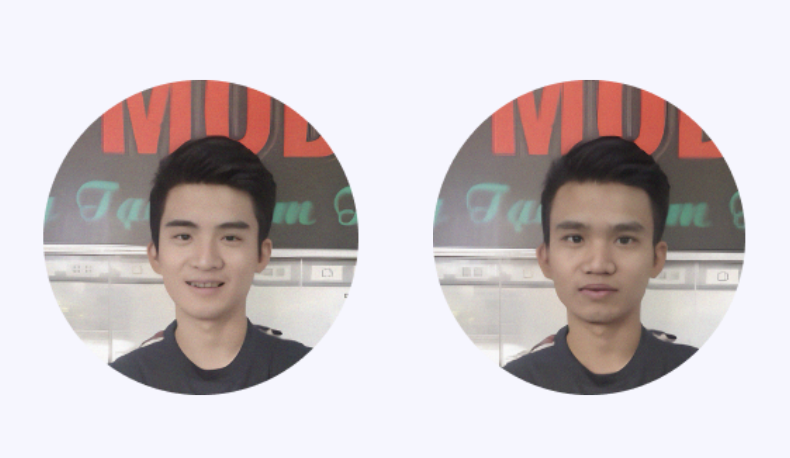

Real vs fake selfies examples

AI-generated selfies have reached a point where distinguishing between real and synthetic images can be extremely difficult, even for the human eye.

Below are examples comparing fake (left) and real (right) selfies:

Here’s a fun game for you! Can you spot the deepfakes in the images? Give it a try!

Early deepfake models often struggled with artifacts: uneven lighting, mismatched eyes, or blurred edges. Today’s GAN and diffusion-based models have dramatically improved, producing near-perfect human likenesses with lifelike details.

As AI blurs the line between real and synthetic identities, liveness detection and deepfake-resistant verification become crucial for financial, legal, and social platforms. Understanding what makes an image “real” is the first step toward securing digital trust.

Why are AI-generated selfies a risk to digital identity systems?

AI-generated selfies are a threat not only because they can easily deceive both human agents and automated systems. The risks go further.

What are we talking about? Read below:

- Deceiving liveness detection systems

Many digital onboarding systems rely on active liveness checks (instructing users to blink or turn their head). AI-generated video can now replicate these actions, creating a moving image that appears to follow commands. Once this check is bypassed, the primary security layer becomes ineffective.

Also, many systems have workflow gaps that AI-generated selfies can exploit. For instance, a process might only ask for a single static selfie without a follow-up liveness check. Another might fail to properly validate an image’s source, letting an AI-generated selfie be uploaded as if it were a real-time photo. These onboarding weaknesses are especially prevalent in organizations that prioritize user convenience over security.
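One way to narrow the "uploaded as if it were a real-time photo" gap is to accept a selfie only when it arrives with a fresh, single-use capture session that the server issued when live capture began. The class below is a hypothetical Python sketch of that server-side bookkeeping; the names and the 120-second lifetime are illustrative assumptions. It only proves the image came through your own capture flow. It does not prove the camera feed itself was genuine, so it complements rather than replaces liveness and deepfake checks.

```python
import secrets
import time

class CaptureSessionStore:
    """Hypothetical guard: a selfie is accepted only with a fresh, single-use session ID."""

    def __init__(self, ttl_seconds=120, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._issued = {}  # session_id -> time the session was issued

    def issue(self):
        """Called when the app starts live capture; returns an unguessable session ID."""
        session_id = secrets.token_urlsafe(16)
        self._issued[session_id] = self.clock()
        return session_id

    def consume(self, session_id):
        """Return True once for a valid, unexpired session; reject unknown or replayed IDs."""
        issued_at = self._issued.pop(session_id, None)  # pop() makes each ID single-use
        if issued_at is None:
            return False
        return self.clock() - issued_at <= self.ttl

# Usage with a controllable clock so the example is deterministic.
now = [0.0]
store = CaptureSessionStore(ttl_seconds=120, clock=lambda: now[0])
sid = store.issue()
print(store.consume(sid))             # True: first use within the time limit
print(store.consume(sid))             # False: replay of the same session
late = store.issue()
now[0] += 300
print(store.consume(late))            # False: submitted after the time limit
print(store.consume("never-issued"))  # False: the server never issued this ID
```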

The threat further evolves as generative AI models learn from the very detection mechanisms designed to stop them. This creates a cycle where security technology must constantly evolve just to keep up.

- Poisoning biometric databases

Digital identity systems rely on the uniqueness of biometric data, such as facial features. AI-generated selfies corrupt this process at its source. If a system accepts a synthetic image as a genuine biometric sample, the entire identity file becomes compromised. This creates a ‘poisoned’ dataset in which fake and real identities are mixed, with potentially severe long-term consequences.

Once a synthetic face is enrolled in a database, it can be reused to apply for services, open financial accounts, or create fraudulent profiles across multiple platforms. This undermines the integrity of national ID databases, financial KYC (Know Your Customer) records, and employee verification systems.

Cleaning these systems requires costly and complex forensic analysis to identify and purge synthetic entries, a challenge that grows with each successful deception.
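Because a synthetic face is often reused across several fabricated identities, one useful enrollment-time safeguard is to compare each new face embedding against those already on file and flag near-duplicates. The Python sketch below shows the idea with cosine similarity. The embeddings, record IDs, and the 0.9 threshold are hypothetical; real face-recognition models produce much longer vectors, and thresholds must be calibrated for the specific model.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def find_reused_faces(new_embedding, enrolled, threshold=0.9):
    """Return (record_id, score) pairs whose stored face is suspiciously close to the new one.

    `enrolled` maps a record ID to its face embedding.
    """
    matches = []
    for record_id, emb in enrolled.items():
        score = cosine_similarity(new_embedding, emb)
        if score >= threshold:
            matches.append((record_id, score))
    return sorted(matches, key=lambda m: m[1], reverse=True)

# Hypothetical stored embeddings.
enrolled = {
    "acct-001": [0.10, 0.80, 0.30, 0.50],
    "acct-002": [0.70, 0.10, 0.60, 0.20],
    "acct-003": [0.12, 0.79, 0.31, 0.48],  # same synthetic face, different name
}
applicant = [0.11, 0.80, 0.30, 0.49]
print(find_reused_faces(applicant, enrolled))
```

A match here does not prove fraud (twins and re-enrolling customers exist), so flagged records are better routed to review than rejected automatically.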

- Scaling synthetic identity fraud

Traditional identity fraud is often limited in scale, requiring the manual collection of stolen photos or documents. AI generation removes this bottleneck.

Malicious actors can now create thousands of unique, high-quality synthetic selfies on demand, each tied to a fabricated identity. This automation allows for AI selfie fraud on an industrial scale. For instance, a criminal organization could use this method to create countless fake accounts for a coordinated loan application scam, overwhelming a bank’s onboarding system with seemingly legitimate applicants.

This kind of easy scalability makes AI-generated selfies a tool for large-scale synthetic identity fraud. The economic impact of such scalable attacks can be devastating for businesses and governments, far exceeding the losses from individual cases of identity theft.
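Even when every selfie is unique, industrial-scale attacks tend to reuse shared infrastructure such as devices, IP addresses, or contact details. A widely used countermeasure is a velocity check that flags any such signal submitting too many applications in a short window. The Python sketch below is a hypothetical sliding-window version; the key format, limit, and window size are illustrative assumptions.

```python
from collections import defaultdict, deque

class VelocityCheck:
    """Hypothetical sliding-window limiter keyed by a shared signal (device, IP, etc.)."""

    def __init__(self, max_events=3, window_seconds=3600):
        self.max_events = max_events
        self.window = window_seconds
        self._events = defaultdict(deque)  # key -> timestamps of recent applications

    def record(self, key, timestamp):
        """Record one application; return True if the key is now over the limit."""
        q = self._events[key]
        q.append(timestamp)
        while q and timestamp - q[0] > self.window:
            q.popleft()  # discard events that have fallen out of the window
        return len(q) > self.max_events

check = VelocityCheck(max_events=3, window_seconds=3600)
# Four applications from the same device within 30 minutes.
flags = [check.record("device-42", t) for t in (0, 600, 1200, 1800)]
print(flags)
```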

- Undermining remote verification trust

As AI-generated selfies and other fakes become more common, confidence in a simple photo or video call as proof of identity diminishes. This is particularly damaging for industries that depend entirely on remote onboarding, such as digital banking, telehealth, and the gig economy.

In the future, these organizations could be forced to question the reliability of their entire selfie-based customer verification framework.

- Privacy and consent risks with synthetic faces

Synthetic faces are often made using models trained on huge datasets of real human photos, usually without those individuals’ knowledge or consent. This means a person’s likeness could be partially used to create a fictional identity for fraud. What’s more, once a synthetic face is used to create a digital identity, it can be copied and reused across the internet, often with little legal recourse.

Read further to understand how you can mitigate these risks with reliable AI image detectors.

Top AI image detectors 2025 (reviewed and tested)

If you want to detect AI selfies, here’s a quick table of the best AI image detectors in 2025 at a glance:

| Platform | USP | G2 Rating | Pricing |
|---|---|---|---|
| HyperVerge | AI-powered fraud detection suite that includes forgery checks, deepfake image analysis, and liveness detection. | 4.7 out of 5 stars | Starter, Grow, and Enterprise plans suitable for all business types and sizes |
| Winston AI | Identifies the specific AI model used for generation, going beyond a simple yes/no answer. | 4.4 out of 5 stars | Free plan, plus paid plans starting at ~$5 |
| WasItAI (AI Image Detector) | Offers a no-fuss, instant check to spot fakes directly from your browser in seconds. | – | Basic plan starts at $4 |
| Hive Moderation | A multi-layered system that scans for harmful content and AI generation simultaneously. | 4.7 out of 5 stars | Custom pricing |
| AI or Not | Authenticates images, videos, and audio to distinguish real from fake across multiple media types. | – | Free + Paid (Base at $5/Month) |
| Illuminarty | Pinpoints the exact areas within an image that were generated by artificial intelligence. | – | Free + Paid ($10/Month) |
| Hugging Face | Provides immediate, basic detection that is openly accessible and completely free for everyone. | – | Free |
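Ratings aside, a practical way to compare any of these tools is to run it over your own labeled sample of real and AI-generated selfies and compute standard metrics. The Python sketch below assumes you have already collected each tool's yes/no verdicts as booleans (True meaning "flagged as AI-generated"); the sample data is invented. The false positive rate deserves special attention, because every false positive is a genuine customer who gets blocked.

```python
def detector_metrics(predictions, labels):
    """Compare a detector's flags with ground truth (True = AI-generated)."""
    pairs = list(zip(predictions, labels))
    tp = sum(1 for p, l in pairs if p and l)          # fakes correctly flagged
    fp = sum(1 for p, l in pairs if p and not l)      # real selfies wrongly flagged
    fn = sum(1 for p, l in pairs if not p and l)      # fakes that slipped through
    tn = sum(1 for p, l in pairs if not p and not l)  # real selfies correctly passed
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }

# Hypothetical test set: 4 AI-generated selfies followed by 6 real ones.
labels = [True] * 4 + [False] * 6
verdicts = [True, True, True, False, True, False, False, False, False, False]
print(detector_metrics(verdicts, labels))
```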

Real-world implications and use cases

HyperVerge’s team has curated some case studies to help you understand the real-life impact of AI-generated selfies.

Case study 1: AI-generated celebrity selfie scam

A French interior designer was deceived into transferring $850,000 over 18 months to a scammer posing as actor Brad Pitt.

The imposter contacted her via social-media DMs, claimed to be Pitt through a fake message from his ‘mother,’ and sent AI-generated selfies of Pitt holding notes addressed to her. She believed he had kidney cancer and needed money, and ignored her daughter’s warnings until the scam was revealed. The actor’s official representative noted that Pitt does not maintain social-media accounts, so any direct outreach in his name is impersonation.

Here’s another case study: In South Korea, a woman in her 50s was defrauded of nearly 500 million KRW (about USD 350,000) after scammers used AI-generated selfies to impersonate popular actor Lee Jung Jae.

The group contacted her through social media, sharing AI-generated selfies and a forged ID to appear genuine. Over time, the scammer built emotional trust, calling her affectionate names and promising a private meeting with the actor through a fake ‘business executive.’ The victim was gradually persuaded to transfer large sums over six months. This case, too, illustrates how AI selfies can weaponize realism to enable large-scale financial fraud.

Case study 2: AI-generated passport passed KYC

In independent tests and demonstrations, a Polish engineer used generative AI to produce a counterfeit passport and a matching selfie that passed at least one automated KYC provider and allowed account opening on a crypto platform. The incident exposed how current ID-plus-selfie flows can be bypassed by combining synthetic documents with AI portraits.

Countless similar cases across industries, including cryptocurrency and finance, show the real repercussions of AI-generated selfies and images. Fortunately, governments across the globe are reacting quickly to this new challenge.

For instance, on May 19, 2025, President Trump signed the TAKE IT DOWN Act into law, criminalizing the publication of non-consensual intimate imagery, including AI-generated deepfakes. This marks the first federal U.S. law to directly regulate AI-generated content of this kind.

At the state level, several new measures reinforce this federal stance. Arizona’s HB 2394 allows citizens to seek legal action against unauthorized digital impersonations that risk personal or professional harm. New Hampshire’s HB 1432 makes the malicious creation or sharing of deepfakes a Class B felony. New Jersey’s A3540 adds civil and criminal penalties for deceptive AI media, while Texas’s SB 2373 targets financial exploitation using AI-generated images or phishing content.

Future trends

Based on an analysis of expert commentary across the cybersecurity and digital identity sectors, below are some key trends shaping the future of AI-generated selfies and deepfakes:

- Future deepfakes will not be limited to static photos. They will increasingly be dynamic videos that mimic live interaction, such as blinking or answering verification questions in real time, making them far more deceptive

‘AI will only get more powerful, faster, or smarter… We’ll need to upskill people to make sure that they are not victims of the systems’ —Tomaz Levak, founder of Switzerland-based Umanitek

- To counter perfect visual fakes, security will increasingly rely on behavioral biometrics. This means verifying a user by their unique patterns, such as how they hold their phone or swipe the screen, which are difficult for a deepfake to replicate

‘By unifying signal analysis with emotion and behavioral consistency checks, we stop deepfakes in real time and make the outcome explainable and deployable in the most demanding environments,’ —Rana Gujral, CEO of Behavioral Signals.

- AI might enable the creation of personalized avatars that evolve based on user interactions. These avatars could serve as digital representations across various platforms, from social media to virtual environments

‘The use of AI avatars by companies like Klarna, Zoom, and UBS is the latest evolution in the broader enterprise shift toward generative AI interfaces,’ —Brian Jackson, Principal Research Director at Info-Tech
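Behavioral biometrics, mentioned above, compare how a user interacts with a device against that user's own history. The toy Python sketch below assumes a platform already logs swipe durations in milliseconds; the values and the 3-standard-deviation cut-off are hypothetical. It scores how unusual a new session looks. Production systems combine many more signals, such as touch pressure, device angle, and typing rhythm.

```python
import statistics

ANOMALY_THRESHOLD = 3.0  # hypothetical cut-off, in standard deviations

def behavior_anomaly_score(profile_samples, session_samples):
    """How many standard deviations the session's mean swipe time sits from the user's history."""
    mu = statistics.mean(profile_samples)
    sigma = statistics.stdev(profile_samples)  # requires at least two enrolled samples
    session_mean = statistics.mean(session_samples)
    if sigma == 0:
        return 0.0 if session_mean == mu else float("inf")
    return abs(session_mean - mu) / sigma

enrolled_swipes = [210, 195, 220, 205, 200, 215]  # past sessions, in milliseconds
normal_session = [208, 212, 199]
scripted_session = [120, 121, 119]                # suspiciously fast

for name, session in [("normal", normal_session), ("scripted", scripted_session)]:
    score = behavior_anomaly_score(enrolled_swipes, session)
    print(name, round(score, 2), "flag" if score > ANOMALY_THRESHOLD else "ok")
```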

| 👁️Did You Know? Since 2022, over 15 billion AI-generated images have been produced! |

How can businesses safeguard against the risk of deepfakes and AI-generated selfies?

A reactive security posture is no longer viable. Businesses today need proactive, technology-driven strategies to protect their operations and customer relationships from AI selfie fraud.

Here are a few top strategies:

- Deploy AI-powered forgery and deepfake detection: Standard verification systems often fail against AI-generated synthetic media. Businesses need specialized deepfake tools and algorithms that can identify digital forgeries.

- Implement multi-factor verification processes: Relying on a single verification method creates a vulnerability. A more secure model combines multiple independent checks, like matching a live selfie to a government-issued ID document and then cross-referencing that data with behavioral analytics. This approach ensures that even if one factor is compromised, others maintain security.

- Adopt enterprise-grade face-match and forgery pipelines: Today, consumer-grade facial recognition is insufficient for enterprise security. Businesses require industrial-strength pipelines built for accuracy and scale. These integrated systems handle liveness detection, face matching, and deepfake analysis in a single, continuous process.

- Utilize passive liveness checks for frictionless security: The most effective security is also the most user-friendly. Passive liveness technology requires only a simple selfie, with AI determining liveness in the background without user action. This smooth experience improves completion rates while simultaneously catching more fraud, creating a direct positive impact on the business’s bottom line.
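The layered approach in these strategies can be sketched as a simple decision function. This is a hypothetical Python illustration, not HyperVerge's or any vendor's actual logic. It assumes each independent check already produces a normalized 0-to-1 score, and the thresholds and review/reject policy are invented for the example. The key design choice is that the checks are combined with AND logic rather than averaged, so a high face-match score cannot compensate for a failed deepfake check.

```python
from dataclasses import dataclass

@dataclass
class VerificationSignals:
    """Scores from independent checks, each normalized to 0.0-1.0 (hypothetical scale)."""
    passive_liveness: float  # confidence the selfie shows a live person
    face_match: float        # similarity between the selfie and the ID-document photo
    deepfake_risk: float     # likelihood the selfie is synthetic or manipulated
    behavior_risk: float     # anomaly score from device and behavioral analytics

# Hypothetical thresholds; real values must be tuned on each platform's own data.
THRESHOLDS = {"passive_liveness": 0.80, "face_match": 0.85,
              "deepfake_risk": 0.30, "behavior_risk": 0.50}

def decide(s):
    """Every independent check must pass; failed checks are returned for auditing."""
    failures = []
    if s.passive_liveness < THRESHOLDS["passive_liveness"]:
        failures.append("liveness")
    if s.face_match < THRESHOLDS["face_match"]:
        failures.append("face_match")
    if s.deepfake_risk > THRESHOLDS["deepfake_risk"]:
        failures.append("deepfake")
    if s.behavior_risk > THRESHOLDS["behavior_risk"]:
        failures.append("behavior")
    if not failures:
        return "approve", failures
    # One failed layer goes to manual review; several failures are rejected outright.
    return ("review" if len(failures) == 1 else "reject"), failures

genuine = VerificationSignals(0.95, 0.92, 0.05, 0.10)
convincing_fake = VerificationSignals(0.91, 0.90, 0.70, 0.20)  # fools liveness and face match
crude_fake = VerificationSignals(0.40, 0.88, 0.65, 0.20)
for s in (genuine, convincing_fake, crude_fake):
    print(decide(s))
```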

For organizations serious about protecting customer trust and brand reputation, HyperVerge delivers a proven, real-time solution.

So, do you want to put HyperVerge’s capabilities to the test? If yes, then book a demo today!