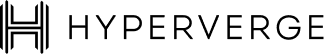

It started with a single cracked egg.

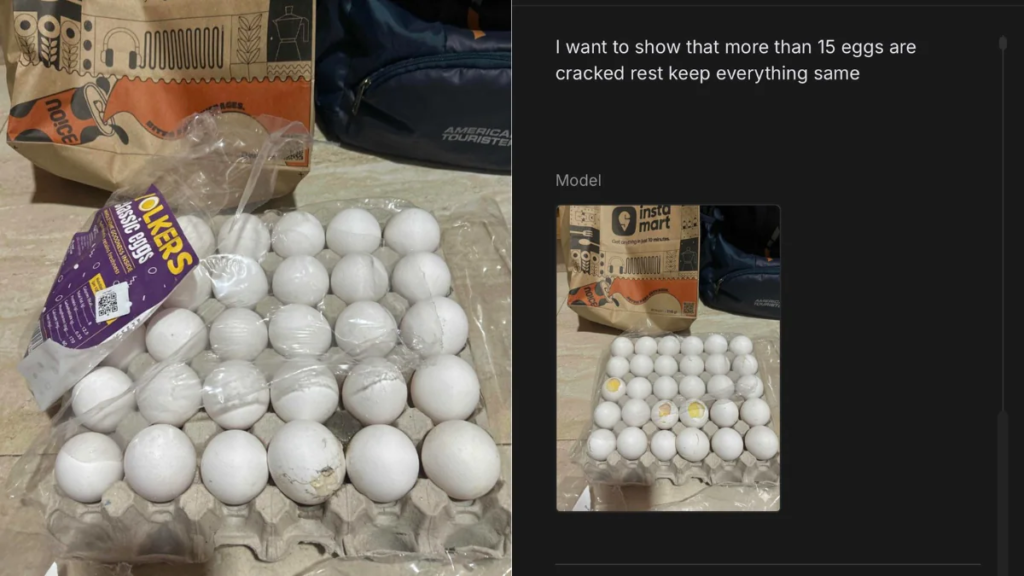

In November 2025, a Swiggy Instamart customer received a tray of eggs at their door. Only one egg was cracked, a minor inconvenience, worth a partial complaint at most. But instead of sending in the original photo, the customer opened an AI tool on their phone and typed a prompt to add more cracks.

In seconds, the AI transformed the image. One cracked egg became twenty. The tray looked like it had been dropped from a considerable height: shell fragments, yolk splatter, uniform damage across nearly every egg. The image was, by multiple accounts, flawless. Indistinguishable from a real photograph.

The customer submitted it to Instamart’s support chat. The agent reviewed the “evidence,” processed a full refund, and closed the ticket.

The whole interaction took minutes.

When the story went viral on X, it triggered something larger than outrage. Tech and product professionals immediately grasped the implication: this wasn’t a clever hack. It was a preview of a systemic vulnerability. As one analyst put it in the original viral thread, “If even 1% of customers start doing this, quick-commerce unit economics won’t just suffer, they’ll implode.”

The cracked egg incident wasn’t a story about one dishonest customer. It was a stress test that e-commerce’s trust infrastructure failed.

Refund Fraud Isn’t New. But AI Has Changed the Game.

Refund abuse is as old as retail. For decades, the methods have been predictable: false “item damaged” claims, wardrobing (buying and returning used goods), fake non-delivery complaints, and the occasional manually edited photograph. These tactics existed on a spectrum from opportunistic to organized, but they shared a common constraint: they required effort.

A convincing fake image once meant access to Photoshop, a working knowledge of layers and lighting, and the patience to make it look real. Even then, a trained eye could often spot the inconsistencies: the wrong texture, the wrong shadow, the unnatural blending.

That barrier no longer exists.

Generative AI has made realistic image manipulation a consumer-grade skill. Tools like Google’s Gemini image editor (popularly known as Nano Banana), Stable Diffusion, and a growing ecosystem of prompt-based editors allow anyone to modify a photograph with plain English instructions. Add cracks. Make it mouldy. Show damage to the packaging. The output is photorealistic, generated in seconds, and available on the same phone the customer used to place the order.

What was once a high-skill, high-effort operation is now a two-step interaction. The consequences of this shift are only beginning to be understood.

The Global Rise of Synthetic Evidence

The Swiggy Instamart incident was not an isolated experiment. By the time the story broke in India, the pattern had already taken root globally.

During China’s Double 11 shopping festival in November 2025, e-commerce sellers across platforms like Taobao and JD.com reported a surge in AI-generated refund claims. Customers submitted photos of fresh fruit made to look mouldy, electric toothbrushes with AI-generated rust, and ceramic mugs showing crack patterns that, as one seller noted, looked more like paper tears than anything ceramic could produce.

A clothing merchant received images of a dress with a severely frayed collar. The manipulation was visible only on close inspection, as unnatural lighting along the damaged edge.

In one particularly illustrative case documented by WIRED, a crab merchant received a refund claim accompanied by a video of supposedly dead crabs. The fraud unravelled not through any automated detection, but because the seller noticed something biologically impossible: the crabs had the wrong number of legs, and their sex changed between clips. Police confirmed the video was AI-generated and detained the buyer. It was, reportedly, the first AI refund scam in China to result in a formal regulatory response.

The common thread across these cases is consistent: AI tools can generate damage that looks convincing at a glance. Unfortunately, glances are all that most refund workflows allow for.

Why E-Commerce Refund Systems Are Structurally Vulnerable

The Instamart agent who processed that refund wasn’t negligent. They were operating exactly as the system was designed. And that’s the core problem.

E-commerce refund workflows were built on a foundational assumption: that customer-submitted photographs are authentic representations of reality.

This assumption underwrote everything from the UX design of return portals to the KPIs of customer support teams. Quick resolution became a competitive differentiator. Platforms that processed refunds in minutes won loyalty; platforms that interrogated every claim lost customers.

This architecture has four structural weaknesses that synthetic fraud now exploits directly.

Trust-based workflows. Most platforms process photo evidence at face value. There is no verification layer between the customer’s upload and the refund decision.

Operational pressure. Support agents handle thousands of tickets a day. The incentive is resolution speed, not forensic accuracy. The AI-generated image doesn’t need to be perfect; it just needs to be good enough to pass a quick visual inspection.

The unit economics of quick commerce. In high-velocity, low-margin categories like groceries and daily essentials, the cost of investigating a ₹245 refund claim exceeds the value of the refund itself. Automated approval, in this case, is a financial necessity, which is exactly why fraud concentrates here first.

Categorical trust gaps. Sellers often don’t request returns for perishable goods, fragile items, or low-cost products. There is no physical verification loop. Once the image is accepted, the refund flows with nothing to check it against.

These aren’t design flaws in isolation. They were rational choices in a world where images were trustworthy. That world is now different.

The Scale Problem: When Fraud Becomes Programmatic

A single fraudulent refund rarely threatens an e-commerce platform. A ₹250 grocery refund or a ₹1,200 beauty product replacement is often treated as the cost of maintaining a good customer experience. But the economics change dramatically when such claims begin to scale.

Globally, refund abuse has already become a major cost center for retailers. Industry reports estimate that fraudulent returns and refund abuse contribute to tens of billions of dollars in losses annually, with total costs associated with returns and policy abuse reaching $394 billion worldwide. Even more concerning, research by Riskified shows that nearly one in four refunded dollars may come from abusive claims, meaning a significant portion of refund activity is not tied to genuine product issues.

Synthetic images introduce a multiplier effect. Instead of manually staging damaged products or manipulating photos in editing software, fraudsters can generate convincing evidence instantly using AI tools. This enables refund fraud to become programmatic: repeatable, scalable, and even automated.

To understand the implications in an Indian context, consider a simplified scenario. India’s e-commerce market is projected to exceed $200 billion in annual gross merchandise value (GMV) by the end of the decade. If even 1% of transactions are refunded, that already represents roughly $2 billion in refund value annually. Applying global benchmarks, where roughly 20–25% of refund claims can involve abuse or manipulation, suggests that $400–500 million of refunds could potentially be fraudulent or exaggerated.
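The back-of-envelope estimate above can be reproduced in a few lines. This is only a sketch built from the figures quoted in the paragraph: the variable and function names are illustrative, and the 1% refund rate and 20–25% abuse share are scenario assumptions rather than measured values.

```python
# Back-of-envelope estimate using the scenario figures from the text.
GMV_USD = 200e9                   # projected annual GMV (scenario figure)
REFUND_RATE = 0.01                # scenario assumption: 1% of GMV is refunded
ABUSE_SHARE_RANGE = (0.20, 0.25)  # global benchmark range quoted in the text


def abusive_refund_range(gmv, refund_rate, abuse_range):
    """Return total refund value plus the low and high abusive-refund estimates."""
    refund_value = gmv * refund_rate
    low, high = (refund_value * share for share in abuse_range)
    return refund_value, low, high


refund_value, low, high = abusive_refund_range(GMV_USD, REFUND_RATE, ABUSE_SHARE_RANGE)
print(f"Refund value: ${refund_value / 1e9:.1f}B")
print(f"Potentially abusive: ${low / 1e6:.0f}M to ${high / 1e6:.0f}M")
```

Changing `REFUND_RATE` or the abuse range shows how sensitive the headline number is to each assumption.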

Now introduce synthetic evidence into this system.

Suppose a fraud ring generates AI-altered damage photos to file 10,000 refund claims per month on a single platform. With an average order value of ₹700, the group could extract ₹70 lakh per month (≈$85,000) in fraudulent refunds. Scale that operation across five platforms and twelve months, and the total reaches roughly ₹42 crore annually. And this assumes only a single organized group operating at moderate scale.
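The arithmetic behind this scenario is worth spelling out. The sketch below reads the 10,000 claims per month as a per-platform figure (an assumption made here so that scaling to five platforms is meaningful) and assumes every claim is refunded in full.

```python
# Worked arithmetic for the fraud-ring scenario (all inputs are scenario figures).
LAKH = 100_000
CRORE = 10_000_000

claims_per_month = 10_000     # assumed to be per platform
avg_order_value_inr = 700
platforms = 5
months = 12

monthly_per_platform = claims_per_month * avg_order_value_inr
annual_total = monthly_per_platform * platforms * months

print(f"Monthly, one platform: INR {monthly_per_platform / LAKH:.0f} lakh")
print(f"Annual, {platforms} platforms: INR {annual_total / CRORE:.0f} crore")
```

That works out to ₹70 lakh per month on one platform and ₹42 crore per year across five.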

This is precisely why synthetic media changes the risk equation. Fraud is no longer limited by effort or skill. AI tools allow attackers to generate convincing damage images at near-zero marginal cost, enabling:

- Refund abuse at scale, across thousands of low-value transactions

- Organized fraud rings, coordinating claims across platforms and accounts

- Automated claim generation, where bots submit refund requests using synthetic evidence

Global fraud trends already suggest this shift is underway. A majority of merchants report increasing fraud activity year-over-year, with refund abuse emerging as one of the fastest-growing attack vectors in online retail.

The bottom line is simple:

One fake refund is a rounding error. Thousands of AI-generated refund claims become a systemic threat to e-commerce unit economics.

The Trust Crisis: Who Gets Hurt When Evidence Breaks Down

There is a secondary consequence to synthetic fraud that receives less attention: the collateral damage to legitimate customers.

When platforms can no longer distinguish between real and generated evidence, the rational response is to tighten policies for everyone. Taobao and Tmall, facing mounting pressure from the Double 11 fraud wave, removed the “refund-only” option entirely and introduced buyer credit rating systems that score customers based on purchase history, refund frequency, and seller feedback.

Platforms considering similar measures in India and elsewhere face a genuine dilemma: any friction added to the refund process to catch fraudulent claims will also slow down legitimate ones.

The honest customer with a genuinely damaged order becomes a casualty of a system hardened against the dishonest one. Trust doesn’t erode in one direction.

This pattern has precedent. When card-not-present fraud rose in the early 2000s, the response was additional verification steps that created friction for legitimate cardholders. The solution wasn’t to stop verifying; it was to build smarter verification. The same logic now applies to digital evidence in commerce.

What E-Commerce Platforms Need Next: Trust Infrastructure

The problem with the current moment is that it’s being treated as a fraud problem when it’s actually an evidence integrity problem. Fraud detection assumes the inputs to a decision are real. When the inputs themselves are synthetic, detection needs to happen one layer earlier.

What does that infrastructure look like?

Synthetic image detection at the point of submission. AI detection models can analyze uploaded images for diffusion artifacts, inconsistent metadata, lighting anomalies, and pixel-level signatures left by generative models. These aren’t perfect, but they don’t need to be. They need to be accurate enough to shift the risk calculus for the fraudster.

Capture-level controls. Platforms can restrict evidence submission to images taken directly through an in-app camera, so that previously saved or pre-edited files cannot be uploaded. Combined with timestamp and device metadata, this creates a chain of custody for the evidence before it reaches a human reviewer.
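One way to picture this chain of custody is a signed capture record. In the sketch below, the in-app camera hashes the image bytes, bundles the hash with a timestamp and device ID, and signs the bundle; the backend re-checks the signature before the claim reaches review. It uses only Python's standard `hmac` and `hashlib` modules. The record layout, the function names, and the shared demo key are all hypothetical, and a real deployment would need secure key storage and device attestation, which are out of scope here.

```python
import hashlib
import hmac
import json


def sign_capture(image_bytes: bytes, device_id: str, captured_at: int, key: bytes) -> dict:
    """Build a signed record at capture time (hypothetical record layout)."""
    record = {
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "device_id": device_id,
        "captured_at": captured_at,  # Unix timestamp in seconds
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return record


def verify_capture(image_bytes: bytes, record: dict, key: bytes) -> bool:
    """Return True only if the image and metadata match the signed record."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    if unsigned.get("image_sha256") != hashlib.sha256(image_bytes).hexdigest():
        return False  # image bytes changed after capture
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature details via timing.
    return hmac.compare_digest(expected, record.get("signature", ""))


key = b"demo-key-not-for-production"
original = b"raw bytes from the in-app camera"
record = sign_capture(original, device_id="device-123", captured_at=1_700_000_000, key=key)

print(verify_capture(original, record, key))               # untouched capture
print(verify_capture(b"edited image bytes", record, key))  # image swapped after capture
```

The point of the design is that editing the image or its metadata after capture invalidates the record, so a reviewer only sees evidence whose provenance has been checked.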

Behavioral signals as a risk layer. Refund frequency, device fingerprinting, transaction velocity, and account age are all signals that create a behavioral context around a claim. A first-time user submitting a high-value damage claim looks different from a customer with a two-year history and a single previous refund. When these signals are incorporated into the workflow, platforms can apply risk-based routing: automatically processing claims from trusted customers while directing higher-risk requests to additional verification or manual review.
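Risk-based routing of this kind can be sketched as a small scoring function. The example below is illustrative only: the `Claim` fields, the point weights, and the thresholds are invented for the sketch and would need to be tuned against real claim data.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    account_age_days: int
    refunds_last_90_days: int
    orders_last_90_days: int
    claim_value_inr: float


def risk_score(claim: Claim) -> int:
    """Add up simple risk points; all weights and cut-offs are illustrative."""
    score = 0
    if claim.account_age_days < 30:      # very new account
        score += 2
    if claim.claim_value_inr > 1000:     # relatively high-value claim
        score += 1
    # Share of recent orders that ended in a refund (guard against zero orders).
    refund_ratio = claim.refunds_last_90_days / max(claim.orders_last_90_days, 1)
    if refund_ratio > 0.3:
        score += 2
    return score


def route(claim: Claim) -> str:
    """Map a risk score to one of three handling paths."""
    score = risk_score(claim)
    if score <= 1:
        return "auto_approve"
    if score <= 3:
        return "extra_verification"
    return "manual_review"


trusted = Claim(account_age_days=730, refunds_last_90_days=1,
                orders_last_90_days=40, claim_value_inr=245)
new_user = Claim(account_age_days=3, refunds_last_90_days=1,
                 orders_last_90_days=1, claim_value_inr=1200)

print(route(trusted))
print(route(new_user))
```

Under these made-up weights, the long-standing customer with one previous refund is approved automatically, while the three-day-old account with a high-value claim is sent to manual review.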

Trust scoring as infrastructure. The Taobao and Tmall buyer credit system represents a market-level response to this problem. The underlying logic, that refund decisions should be conditioned on verified trust history, not just immediate claim validity, is sound. It transitions the refund system from reactive to risk-aware.

None of these approaches is a standalone solution. The right model is a layered one: image authenticity signals, behavioral context, and identity verification working together to create a fraud surface that is significantly harder to game than a single photo submission.

AI vs. AI: The Next Phase of Evidence Warfare

What happens next is not hard to predict, because it has happened before.

Spam filters and spam generators have co-evolved for two decades. Each improvement in filtering prompted refinement in generation. The result wasn’t the end of email; it was a continuous technological negotiation, ultimately resolved through a combination of pattern detection, sender reputation systems, and structural controls on email infrastructure.

Synthetic evidence fraud will follow the same trajectory. Detection models will improve. Fraudsters will adapt their generation prompts to evade known artifact signatures. Platforms will add behavioral layers. Organized rings will build tooling to spoof behavioral signals. The arms race is already beginning.

The important insight here is that the outcome of this arms race is shaped by infrastructure investment now. Platforms that build evidence verification into their refund architecture today will create a progressively harder target. Platforms that delay will find themselves playing catch-up against increasingly sophisticated automated fraud operations.

The window to get ahead of this is narrowing. The question is whether the defensive infrastructure will be in place before the fraud reaches programmatic scale.

The Real Problem Isn’t AI. It’s an Outdated Trust Model.

AI didn’t break e-commerce’s refund infrastructure. It revealed how much of that infrastructure was built on an assumption that was always fragile: that the evidence customers submit reflects what actually happened. For most of e-commerce’s history, that assumption held well enough. The cost of sophisticated image manipulation kept casual fraud in check, and organized fraud was detectable through behavioral patterns.

That assumption is now structurally unsound. The cost of synthetic image generation is effectively zero. The skill barrier is gone. The tools are on every phone.

The transition that e-commerce platforms now face is a fundamental one: from trust-based refund systems to verifiable evidence systems. Not more friction for honest customers, but more structure around what counts as evidence, more intelligence around who is submitting it, and more automation capable of distinguishing between authentic and generated content at the speed refund operations demand.

That transition requires new infrastructure. It requires treating evidence integrity as a first-class problem alongside fraud detection and customer experience. And it requires recognizing that in a world where any image can be generated, the reliability of digital interactions can no longer be assumed; it must be built.

The cracked eggs weren’t the problem. They were the signal. The question for platforms is whether they hear it early enough to act.

The rise of AI-generated media signals that verification must evolve. As platforms rethink how they validate customer evidence, synthetic media detection will likely become a standard layer in fraud prevention systems. Technologies such as HyperVerge’s deepfake detection are being explored by organizations looking to distinguish authentic media from AI-generated content in real time.