India’s financial system runs on digital trust. Crores of people open bank accounts, apply for loans, and complete KYC from their phones every single day.

But that trust is now under attack.

Deepfake cases in India have jumped 550% since 2019, with projected losses hitting ₹70,000 crore. The RBI reported fraud losses of ₹21,367 crore in just the first half of FY2024–25, an eightfold increase year-on-year. A growing share of that fraud involves AI-generated fake identities.

For banks, NBFCs, and fintechs running digital onboarding, deepfakes are no longer something to monitor from a distance. This guide explains what a deepfake is, how it is made, and how you can safeguard your business against the threat.

TL;DR: Deepfake Threats & Detection in India (2026)

The Crisis: Deepfake-enabled fraud in India has surged by 550% since 2019, with projected financial losses reaching ₹70,000 crore. The RBI reported an eightfold year-on-year increase in fraud losses (₹21,367 crore) in the first half of FY2024–25, driven largely by AI-generated synthetic identities.

Core Technologies Used by Fraudsters:

- GANs & Diffusion Models: Used to create hyper-realistic “synthetic identities” that pass liveness checks.

- Real-time Face-Swaps: Used to spoof Video KYC (V-CIP) by overlaying stolen identities onto fraudsters during live calls.

- Voice Cloning: Mimicking customer voices to bypass OTPs and authorise illegal transfers.

2026 Regulatory Update

The IT Amendment Rules 2026 (effective Feb 20, 2026) formally define “synthetic media.” Major platforms must now remove flagged deepfakes within 3 hours and non-consensual intimate content within 2 hours or risk losing safe harbor protection under Section 79 of the IT Act.

Detection & Defence: Modern BFSI defence relies on Passive Liveness Detection, which analyses biological signals (blood flow, micro-expressions), and on Injection Attack Detection, which checks that video feeds come from a live camera rather than a pre-recorded file.

How are deepfakes made?

Before we get into how deepfakes are made, here’s a quick recap of what a deepfake is.

Imagine someone takes photos, videos, and voice recordings of a real person, feeds them into an AI model, and out comes a fake version of that person: one that looks real, sounds real, and can say or do things the real person never did. That output is a deepfake. The name combines deep learning (the AI method used) and fake (the output).

But not all deepfakes are built the same way. Fraudsters pick the method that fits the attack. The four most common methods are described below:

1. Generative Adversarial Networks (GANs)

GANs use two competing neural networks: a generator that tries to create convincing fake content, and a discriminator that tries to detect it. They train against each other in a loop. Over thousands of rounds, the generated content becomes very hard to distinguish from the real thing.

This adversarial training process is behind most high-quality deepfake videos used in KYC fraud today.

In practice: A fraudster trains a GAN on someone’s social media photos. Within hours, the model can generate that person’s face at different angles, lighting conditions, and expressions, enough to pass a liveness check.

2. Diffusion models

Diffusion models work differently. During training, they take real images, add random noise step by step until the image is unrecognisable, and learn how to reverse that process. To generate, they start from pure noise and denoise it into a new, photorealistic image. Tools like Stable Diffusion use this method and are available as free, open-source software.
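The noising half of that process is simple enough to sketch. The snippet below is a minimal illustration of a DDPM-style forward step on a stand-in 8x8 array, not the pipeline of Stable Diffusion or any specific tool; the function names, the linear schedule values, and the random "image" are all assumptions chosen for illustration. The learned denoising network, which is the hard part, is deliberately left out.

```python
import numpy as np


def make_alpha_bar(num_steps: int, beta_start: float = 1e-4,
                   beta_end: float = 0.02) -> np.ndarray:
    """Cumulative share of the original signal variance kept at each step
    of a linear noise schedule (hypothetical schedule values)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)


def add_noise(x0: np.ndarray, t: int, alpha_bar: np.ndarray,
              rng: np.random.Generator):
    """Jump straight to step t: scale the clean image down, add Gaussian noise."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, eps


rng = np.random.default_rng(0)
image = rng.uniform(-1.0, 1.0, size=(8, 8))  # stand-in for a normalised face crop
alpha_bar = make_alpha_bar(1000)

slightly_noisy, _ = add_noise(image, 10, alpha_bar, rng)   # still mostly image
mostly_noise, _ = add_noise(image, 999, alpha_bar, rng)    # almost pure noise

print(f"signal variance kept at t=10:  {alpha_bar[10]:.4f}")
print(f"signal variance kept at t=999: {alpha_bar[999]:.6f}")
```

A generator is trained to predict the added noise `eps` from `x_t`, which is what lets it later turn pure noise into a new image.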

In practice: Fraudsters use diffusion models to generate entirely new face images of people who don’t exist, paired with forged documents. These ‘synthetic identities’ are then used to open accounts or apply for credit.

3. Face-swap apps

Face-swap tools overlay one person’s face onto another’s video, in real time. These use encoder-decoder architectures that map facial features from a source face onto the geometry of a target video, matching lighting and movement automatically.

In practice: During a live video KYC call, the fraudster’s real face is replaced on-screen with a stolen identity in real time. The bank employee or automated system sees the wrong face.

4. Voice cloning

AI voice synthesis tools extract the acoustic patterns from a short audio sample and use them to generate speech in that person’s voice.

In practice: A fraudster records 30 seconds of a customer’s voice from a previous call. They clone it and call the customer’s bank, impersonating the account holder, to extract an OTP or request a fund transfer.

Here are some real-life cases that show the level of damage deepfakes can inflict.

Deepfake real-world examples (BFSI-focused)

| # | Case | What Happened | Fraud Type | BFSI Relevance |
|---|---|---|---|---|
| 1 | Bengaluru, 2025 | A 79-year-old woman lost ₹35 lakhs after watching deepfaked videos of N.R. Narayana Murthy endorsing a fake trading platform. False profit dashboards and invented ‘financial managers’ kept her engaged until the money was gone. | Celebrity impersonation + investment fraud | Investment fraud via deepfaked public figures is now a recurring pattern in India. Customers of digital lending and trading platforms are primary targets. |
| 2 | Valueleaf Scam, Mumbai, 2025 | A China-based syndicate ran deepfaked videos of known Indian stock analysts and news anchors as paid ads on Meta platforms between July 1 and 18, 2025. Victims were redirected to WhatsApp groups where scammers posed as financial advisors. Four Valueleaf employees were arrested in October 2025 for knowingly providing advertising access to the syndicate for ₹3 crore. | Deepfake ads + social engineering + mule accounts | Exploited India’s digital advertising infrastructure. The fraud ran on Meta’s credit-based system before detection. |
| 3 | Arup CFO Scam, Hong Kong, 2024 | A finance employee was called into a video conference where the company’s CFO and several senior colleagues appeared on screen. All were AI-generated. The employee authorised transfers totalling US$25 million (~₹210-230 crore). | Real-time deepfake video conference + BEC | This is the most cited financial deepfake case globally. Indian banks conducting video-based credit approvals, treasury authorisations, or executive sign-offs face the same risk. |

Next, let’s look at how India’s regulatory landscape addresses the deepfake threat.

Legal status of deepfakes in India

India does not yet have a standalone deepfake law. However, several existing statutes already cover the most common forms of deepfake-enabled harm, from identity fraud to non-consensual imagery.

| Law / Section | What it covers |
|---|---|
| IT Act, 2000: Section 66C (Identity theft) | Penalises the fraudulent use of another person’s electronic identity, password, or biometric credentials using any computer resource or communication device. |
| IT Act, 2000: Section 66D (Cheating by Personation) | Covers anyone who cheats by impersonating another person through a computer resource (the most cited provision in video KYC fraud and executive impersonation cases). |
| IT Act, 2000: Sections 66E, 67, 67A & 67B (Privacy & obscene content) | Section 66E targets non-consensual capture or transmission of private images. Sections 67 and 67A penalise publishing obscene or sexually explicit electronic content. Section 67B specifically addresses child sexual abuse material. |
| BNS, 2023: Section 356 (Defamation) | Covers anyone who makes or publishes a false statement intending to damage a person’s reputation. |
| BNS, 2023: Sections 351 & 77 (Criminal intimidation & privacy) | Section 351 penalises threatening a person with injury to cause alarm or compel action. Section 77 specifically covers capturing or sharing images of a woman in a private act without consent. |
| Digital Personal Data Protection Act, 2023 (DPDP Act) | Governs how personal data (including biometric and facial data collected during KYC) is processed. Penalties up to ₹250 crore per violation. |

On 10 February 2026, MeitY notified India’s IT Amendment Rules 2026, effective 20 February 2026. For the first time, Indian law formally defines synthetic media and places clear, time-bound obligations on platforms.

Significant social media intermediaries must remove flagged deepfake content within 3 hours, and non-consensual intimate content within 2 hours. Platforms that miss these windows risk losing safe harbour protection under Section 79 of the IT Act, meaning they can be held liable as if they created the content themselves.

The threat to KYC & identity verification

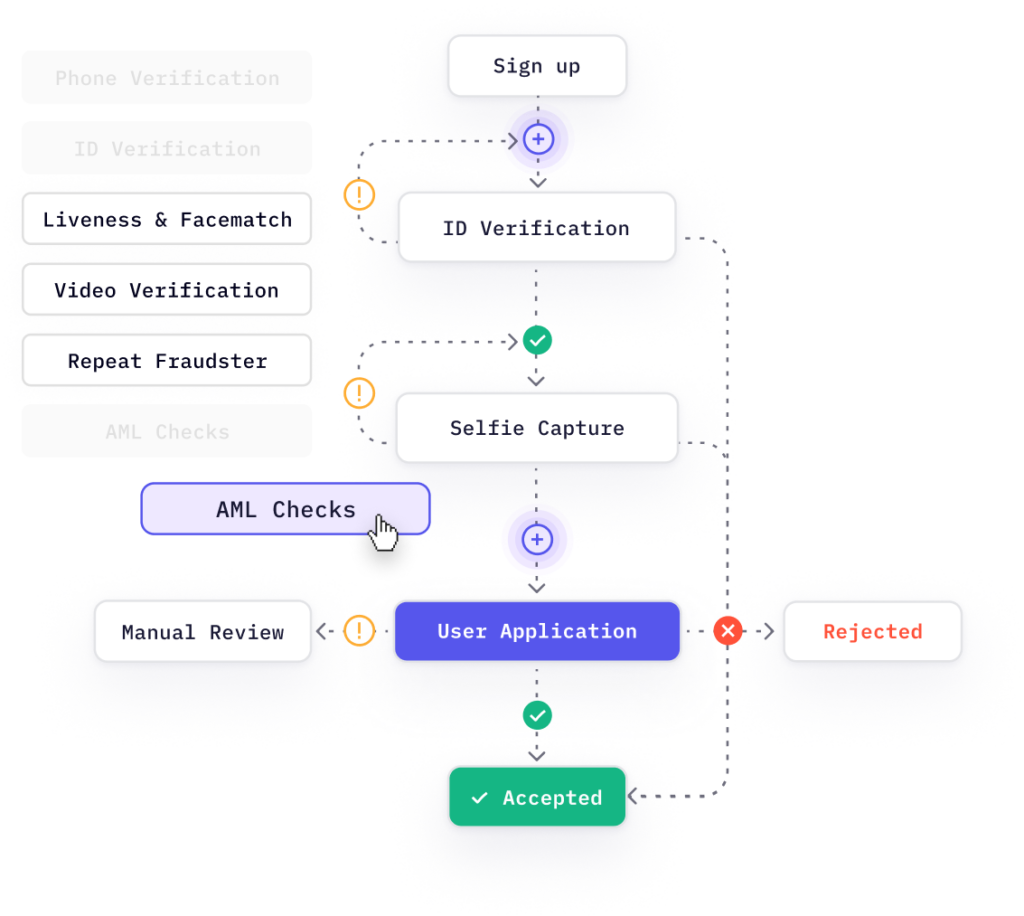

Every financial institution in India that offers digital onboarding, whether through video KYC, selfie liveness, document upload, or biometrics, relies on some form of identity verification.

Deepfakes can compromise each of these layers. Here is how.

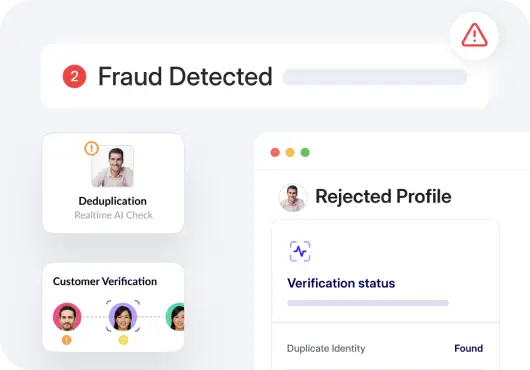

1. Video KYC spoofing

Around 11 lakh video KYC calls take place in India every day, and 86% face spoofing attempts. Fraudsters use real-time face-swap tools to replace their face on screen with a stolen identity during a live call. A bank agent or an automated system sees the wrong person entirely.

2. Injection attacks

More advanced attackers skip the camera entirely. They inject a pre-recorded or synthetically generated video directly into the device’s camera input, so the system receives a fake feed. Standard liveness checks that look for blinks or head movement do not catch this because there’s no real person on the other side at all.
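One signal an injection check might look at is frame timing. The sketch below rests on a stated assumption, not a documented rule: that a physical camera delivers frames with small natural jitter, while a replayed file pushed through a virtual camera can arrive at perfectly regular intervals. The function names and the `min_jitter` threshold are hypothetical, and a capable attacker can add artificial jitter, so this would only ever be one input among several.

```python
from itertools import accumulate
from statistics import mean, pstdev


def timing_jitter_ratio(timestamps_ms: list[float]) -> float:
    """Coefficient of variation of the gaps between consecutive frames."""
    intervals = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    if len(intervals) < 2:
        raise ValueError("need at least three timestamps")
    avg = mean(intervals)
    return pstdev(intervals) / avg if avg > 0 else 0.0


def looks_injected(timestamps_ms: list[float], min_jitter: float = 0.005) -> bool:
    """Flag feeds whose frame timing is suspiciously regular.
    min_jitter is an illustrative threshold, not a calibrated value."""
    return timing_jitter_ratio(timestamps_ms) < min_jitter


# A replayed clip delivered at an exact 25 fps cadence (40 ms per frame).
replayed = [i * 40.0 for i in range(30)]

# A camera-like feed: same average rate, but the gaps wobble by a few ms.
gaps = [38.0, 43.0, 40.0, 37.0, 42.0, 41.0, 39.0, 40.0]
camera = list(accumulate(gaps, initial=0.0))

print(looks_injected(replayed), looks_injected(camera))
```

Production systems pair timing with other evidence, such as camera metadata and sensor noise, because any single heuristic is easy to defeat once it is known.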

3. Synthetic identity fraud

Fraudsters can use deepfake technology to generate a near-perfect human face that belongs to no living person and pair it with forged documents (acquired through identity theft, for instance) to build a clean synthetic identity: one that passes basic document checks and has no fraud history to flag.

4. Voice cloning for OTP bypass

India saw a 100% spike in voice scam cases from early 2024, with their share rising from 15% to nearly 35% of all reported social engineering fraud. Cloned voices are used to call bank staff or customers, impersonating known contacts, branch managers, or family members to extract OTPs, approve transactions, or redirect fund transfers.

| HyperVerge’s deepfake detection API checks for face liveness, injection signals, and GAN artefacts in real time during onboarding. See how it works. |

How does deepfake detection work?

Almost every AI-generated face leaves behind traces, most invisible to the human eye, but detectable through the right analysis.

Detection systems work by hunting for those traces across multiple dimensions simultaneously.

- Spotting the AI’s fingerprint

Every GAN model leaves a unique mark on the images it produces, a kind of digital fingerprint shaped by its architecture, training data, and internal settings. Detection systems are trained to recognise these fingerprints across different GAN models, even ones they haven’t encountered before.

- Reading frequency patterns

Real photographs and AI-generated images behave differently when broken down into frequency components. Detection systems apply transforms such as Fourier and wavelet, often alongside gradient descriptors such as Histogram of Oriented Gradients (HOG), to convert a face image into its underlying frequency and edge data. Deepfakes consistently produce anomalies at this level: ringing, blurring, unnatural edges, and blending artefacts that don’t appear in genuine images.

- Analysing colour channel relationships

In a real photo, the red, green, and blue colour channels maintain a natural correlation, the result of how light interacts with real surfaces like human skin. When a face is synthetically generated or swapped, these correlations break down. Detection systems measure those statistical deviations to flag manipulation.
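The frequency and colour-channel checks described above can each be reduced to a small measurement. The sketch below is illustrative only: it uses synthetic arrays rather than real faces, the function names and the `cutoff` value are assumptions, and a real detector would feed many such features into a trained classifier rather than read them directly.

```python
import numpy as np


def high_freq_energy_ratio(gray: np.ndarray, cutoff: float = 0.25) -> float:
    """Share of spectral energy above `cutoff` cycles/pixel (Nyquist is 0.5)."""
    power = np.abs(np.fft.fft2(gray - gray.mean())) ** 2
    fy = np.fft.fftfreq(gray.shape[0])
    fx = np.fft.fftfreq(gray.shape[1])
    radius = np.sqrt(fy[:, None] ** 2 + fx[None, :] ** 2)
    total = power.sum()
    return float(power[radius > cutoff].sum() / total) if total > 0 else 0.0


def channel_correlations(rgb: np.ndarray) -> dict:
    """Pearson correlation between each pair of colour channels."""
    corr = np.corrcoef(rgb.reshape(-1, 3).astype(np.float64), rowvar=False)
    return {"rg": float(corr[0, 1]), "rb": float(corr[0, 2]), "gb": float(corr[1, 2])}


# Frequency demo: a smooth pattern, then the same pattern with a faint
# pixel-level checkerboard of the kind upsampling layers can leave behind.
y, x = np.mgrid[0:64, 0:64]
smooth = np.sin(2 * np.pi * x / 32.0)
checker = 0.2 * (-1.0) ** (x + y)
ratio_smooth = high_freq_energy_ratio(smooth)
ratio_artefact = high_freq_energy_ratio(smooth + checker)

# Colour demo: three channels driven by one shared brightness map, then a
# copy whose blue channel has been replaced with unrelated values.
rng = np.random.default_rng(42)
luminance = rng.uniform(0.2, 0.9, size=(32, 32))
natural = np.stack([
    luminance,
    0.9 * luminance + rng.normal(0, 0.02, luminance.shape),
    0.8 * luminance + rng.normal(0, 0.02, luminance.shape),
], axis=-1)
tampered = natural.copy()
tampered[..., 2] = rng.uniform(0.2, 0.9, size=luminance.shape)

natural_corr = channel_correlations(natural)
tampered_corr = channel_correlations(tampered)
print(ratio_smooth, ratio_artefact)
print(natural_corr["gb"], tampered_corr["gb"])
```

In both demos the manipulated input stands out: the checkerboard adds energy near the Nyquist frequency, and the replaced channel loses its correlation with the others. Real manipulations are far subtler, which is why these measurements are combined rather than used alone.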

Why does layering matter?

No single method catches everything. The research underpinning modern detection shows that combining frequency analysis with colour-channel correlation examination consistently outperforms any standalone approach (in some reported configurations, reaching 100% classification accuracy across multiple GAN models).

Beyond these image-level checks, active and passive liveness detection also help keep deepfakes at bay. Both are covered below.

Passive vs. active detection: What’s the difference?

When evaluating a deepfake detection or liveness solution, one question matters most: Does it require user action, or does it work in the background?

Active liveness detection prompts the user to blink, turn their head, or read a number aloud. The system verifies whether the response looks genuine. But here’s the problem. Real-time deepfake tools can now mirror these actions in milliseconds. An active challenge is a known prompt that fraudsters can prepare for.

Active checks also add friction to the onboarding journey, which costs conversions in a competitive market.

Passive liveness detection runs silently during the onboarding flow. The user does nothing different. The system analyses the video stream for biological and physical signals of a real, live person. There is no challenge to reverse-engineer.

Passive detection is harder to spoof because the fraudster does not know which signals are being checked. It also creates zero friction for legitimate users.

| Detection method | How it works | Best for |
|---|---|---|
| Active liveness | Prompts user action (blink, nod, read aloud) and checks if the response is genuine | Basic anti-spoofing; lower-volume or lower-risk onboarding |
| Passive liveness | Silently analyses blood flow, micro-expressions, and depth during the live session | High-volume digital onboarding where user experience matters |
| GAN artefact detection | Scans pixel patterns for AI-generation fingerprints at the image level | Catching synthetic identity documents and AI-generated face photos |
| Injection attack detection | Analyses camera metadata, sensor noise, and frame timing for artificial feed signals | Defending against real-time face-swap tools during video KYC |
| Multi-layer AI detection | Combines passive liveness + injection + GAN artefact checks simultaneously | Full-stack KYC fraud prevention: the HyperVerge approach |
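To make the multi-layer row concrete, here is a minimal sketch of how three independent scores might be combined so that failing any one layer blocks the session. It is not HyperVerge's implementation: the `LayerScores` fields, the `decide` function, and every threshold are hypothetical, and the scores are assumed to come from upstream models that are not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerScores:
    liveness: float        # 0..1, higher means more likely a live person
    injection_risk: float  # 0..1, higher means more likely an injected feed
    gan_artefact: float    # 0..1, higher means more likely AI-generated


def decide(scores: LayerScores, min_liveness: float = 0.7,
           max_injection: float = 0.3,
           max_artefact: float = 0.3) -> tuple[str, list[str]]:
    """Reject if any single layer fails; thresholds are illustrative only."""
    reasons = []
    if scores.liveness < min_liveness:
        reasons.append("liveness below threshold")
    if scores.injection_risk > max_injection:
        reasons.append("possible injected feed")
    if scores.gan_artefact > max_artefact:
        reasons.append("possible AI-generated imagery")
    return ("reject" if reasons else "accept", reasons)


genuine = decide(LayerScores(liveness=0.93, injection_risk=0.05, gan_artefact=0.08))
face_swap = decide(LayerScores(liveness=0.88, injection_risk=0.72, gan_artefact=0.41))
print(genuine)
print(face_swap)
```

The second example shows why layering matters: the face-swap session clears the liveness threshold on its own, and is stopped only because the other two layers disagree.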

Deepfake statistics & threat landscape 2026

Deepfakes are no longer a niche cybersecurity risk. In India, they are a front-line fraud tool targeting video KYC, identity onboarding, investment platforms, and executive authorisations every single day.

Take a look at some of these alarming stats:

- Deepfake fraud currently accounts for 40% of all AI-related cybercrimes globally.

- India ranks as the sixth most vulnerable country globally for deepfake-related harm. Meanwhile, 47% of Indian adults have personally experienced or know someone affected by an AI voice clone or deepfake scam, nearly double the global average of 25%. 83% of those victims suffered monetary loss, with almost half losing over ₹50,000.

- 65% of cyber fraud incidents in India go unreported, which means the true scale is far larger than recorded figures suggest.

- Around 4,000 mule accounts are identified in India every day, many linked to deepfake-enabled fraudulent onboarding.

So, one thing is clear: deepfakes have crossed from a threat-to-watch into a threat-in-progress.

Here’s the good news: detection technology has kept pace. Passive liveness, injection detection, and GAN artefact analysis, applied in real time, give governments, businesses, and financial institutions a reliable, frictionless defence.

Still, the window to act keeps narrowing. With deepfake attacks occurring every five minutes globally, every unprotected onboarding flow is an open target. With HyperVerge, it doesn’t have to be.

HyperVerge’s deepfake detection solution is built specifically for India’s KYC infrastructure: real-time, API-first, and designed for the volume India demands.