Every digital loan, every new bank account, every KYC approval starts with the same question: is this actually the person they say they are?

Face matching can tell you whether two images belong to the same person. That part is solved. What it can’t tell you is whether the image was captured from a live human sitting in front of a camera right now, or from a photograph someone held up, a video someone replayed, or a deepfake that an AI generated in minutes.

That gap is exactly what liveness detection closes.

This guide covers what liveness detection actually is, how to choose the right approach, how to integrate it via API, which benchmarks to look for, and what India’s regulatory framework requires in 2026.

What is Liveness Detection?

Liveness detection is the process of verifying that a biometric sample, typically a selfie, was captured from a physically present, live person, not a photograph, screen replay, mask, or AI-generated deepfake.

It sounds like a narrow technical problem. It isn’t. Generative AI tools have made it trivially easy to produce photorealistic synthetic faces and real-time face swaps using nothing more than a few publicly available images. What once required a sophisticated threat actor now takes minutes with off-the-shelf tools. A fraudster sitting in another city can attempt to open a loan account in someone else’s name, with a deepfake good enough to fool a face matching engine.

For organisations doing Video KYC in lending, insurance, payments, and securities, the risk is direct and quantifiable. Without robust liveness detection, a high-quality deepfake can pass face matching with the same confidence score as a real user. The compliance exposure, fraud losses, and reputational damage that follow are entirely avoidable.

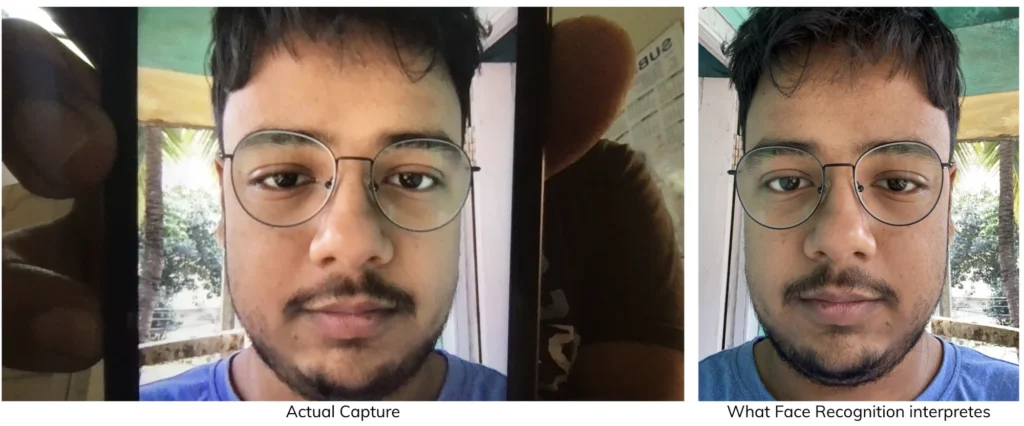

For a face-authentication system without liveness detection, a photograph or any display works just the same as the actual person!

That’s why regulators have moved. The RBI’s Video KYC framework now mandates liveness checks as a baseline for remote identity verification.

Read more: A complete guide to facial recognition

Types of Presentation Attacks in Biometric Systems

Liveness detection systems are specifically designed to defend against presentation attacks, a capability known as presentation attack detection (PAD). Common attack categories include:

Print Attacks

Fraudsters present a high-quality printed photograph of a victim to the camera.

Screen Replay Attacks

The attacker displays a victim’s video or image on a mobile device or monitor to simulate live presence.

2D Mask Attacks

Printed masks with cut-out eye or mouth regions are used to trick systems.

3D Silicone Mask Attacks

Realistic 3D masks replicate facial structure and depth.

Deepfake & GAN-Generated Faces

Synthetic identities created using generative AI attempt to pass as real individuals.

Injection Attacks

Manipulation of the camera feed at the software level to inject pre-recorded or synthetic frames.

An effective liveness system must detect anomalies across multiple dimensions: texture, motion, depth, reflection, and pixel-level inconsistencies.

The Threat Landscape: From Printed Photos to AI Deepfakes

While early spoofing attempts relied on printed photographs or screen replays, the threat landscape has evolved significantly.

In 2026 and beyond, fraud techniques include:

- High-resolution screen replay attacks

- 2D and 3D mask attacks

- GAN-generated deepfakes

- Real-time face-swapping overlays

- Camera stream injection attacks

Generative AI tools have lowered the barrier for fraud. Fraudsters can now create synthetic identities or impersonate victims using publicly available images and video footage.

As a result, liveness detection systems must analyse signals across several dimensions:

- Texture inconsistencies

- Light reflection anomalies

- Micro-movements

- Depth irregularities

- Synthetic pixel patterns

Modern identity verification systems cannot rely solely on face matching. Without robust liveness detection, a high-quality deepfake can bypass traditional recognition engines.

Liveness Detection vs Deepfake Detection

These two capabilities are often bundled together in fraud prevention conversations. They shouldn’t be.

They’re solving adjacent but different problems, and conflating them leads to gaps in your stack.

Think of it this way. A fraudster holding a printed photograph up to a camera during a Video KYC session is a liveness attack. A fraudster submitting an AI-generated face as a selfie for an asynchronous verification check is a deepfake attack. Both are identity fraud. But the detection mechanism, the deployment point, and the technical signals are different.

Liveness detection answers the question: Is this person physically present during this session, right now?

Deepfake detection answers the question: Was this image or video created by an AI?

| Dimension | Liveness Detection | Deepfake Detection |
|---|---|---|
| What it checks | Physical presence during a live session | Whether the image or video is AI-generated |
| Attacks it prevents | Print attacks, screen replays, 2D/3D masks, injection attacks | GAN-generated faces, diffusion model faces, face-swap overlays |
| When it runs | During a live Video KYC or authentication session | On submitted images, documents, or recorded media |
| Signal types | Depth, texture, motion, light reflection | Pixel patterns, frequency artefacts, boundary blending |
| Regulatory mandate | Explicit in RBI V-CIP guidelines | Emerging in newer fraud frameworks |

Where they overlap: advanced passive liveness systems trained on GAN-generated spoof datasets can catch some deepfake attacks. And sophisticated deepfake fraud often includes an attempt to bypass liveness checks simultaneously. But they remain distinct tools for distinct contexts.

In a well-built identity verification stack, liveness and deepfake detection are complementary. Liveness governs the real-time session. Deepfake detection governs the quality of submitted media: ID document images, selfies used in asynchronous flows, video recordings under review.

Running only one while assuming the other is covered is where fraud teams get caught out.

How Does Liveness Detection Work?

Early spoofing attempts were simple. A printed photograph. A photo on a phone screen. The first generation of liveness systems were designed to catch exactly that, and they did it well.

Then the attacks got more sophisticated. High-resolution 4K displays eliminated the pixel artefacts that gave screen replay attacks away. 3D silicone masks replicated facial geometry convincingly enough to defeat depth-sensor-based systems. GAN-generated synthetic faces (and now diffusion model faces) became pixel-perfect and nearly indistinguishable from real captures to the human eye.

And then came real-time face swaps. Applied live, during a video session, on consumer hardware.

The threat in 2026 isn’t the fraudster with a printed photograph. It’s a distributed network of bad actors using generative AI tools that lower the barrier to impersonation to near zero. Any liveness system designed around yesterday’s attacks is already behind.

Effective liveness detection today operates across multiple detection dimensions simultaneously. Single-signal systems are bypassed by attackers who have specifically studied that signal. The defence has to be layered.

Liveness detection systems are classified into two categories based on the input needed from the end-user:

Active Liveness: High Assurance, High Friction

Active liveness asks the user to prove they’re present by doing something. Blink. Turn your head. Say this phrase aloud. The logic is sound: a printed photo can’t blink on command, and a pre-recorded video can’t respond to a real-time challenge.
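The challenge-response logic behind active liveness can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the gesture names, the 10-second validity window, and the function names are all assumptions, and the actual gesture recognition (deciding what the user did on camera) is outside the sketch.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional

# Hypothetical gesture names, chosen only for illustration.
CHALLENGES = ("blink", "turn_head_left", "turn_head_right", "smile")


@dataclass(frozen=True)
class Challenge:
    nonce: str          # unpredictable token binding the response to this challenge
    gesture: str        # randomly chosen action the user must perform
    issued_at: float    # seconds since the epoch
    ttl_seconds: float  # how long the challenge stays valid


def issue_challenge(ttl_seconds: float = 10.0, now: Optional[float] = None) -> Challenge:
    """Create a random, short-lived challenge that a pre-recorded video cannot anticipate."""
    return Challenge(
        nonce=secrets.token_hex(16),
        gesture=secrets.choice(CHALLENGES),
        issued_at=time.time() if now is None else now,
        ttl_seconds=ttl_seconds,
    )


def verify_response(challenge: Challenge, nonce: str, detected_gesture: str,
                    now: Optional[float] = None) -> bool:
    """Accept only a fresh response that echoes the nonce and shows the requested gesture."""
    current = time.time() if now is None else now
    fresh = 0 <= current - challenge.issued_at <= challenge.ttl_seconds
    nonce_ok = secrets.compare_digest(nonce, challenge.nonce)
    return fresh and nonce_ok and detected_gesture == challenge.gesture
```

The randomness and the expiry window are what defeat replayed footage: a recording made earlier cannot know which gesture will be requested, and a slow, manually assembled response arrives too late.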

But the execution creates friction. Users don’t always understand the instructions. Network drops interrupt the video capture. Lighting conditions fail the gesture recognition. Elderly or less digitally fluent users abandon the flow. Companies that have deployed active liveness at scale have reported drop-off rates as high as 50%.

That’s not a small number. In a high-volume lending platform processing hundreds of thousands of applications, it means a material fraction of genuine, creditworthy customers never complete onboarding. Not because they’re fraudsters, but because a gesture prompt confused them at the wrong moment.

Passive Liveness: The Same Selfie Does More Work

Passive liveness requires nothing from the user. The same selfie taken for face matching is quietly analysed for signals that distinguish a live person from a spoof attempt: micro-texture patterns, reflection anomalies, frequency-domain signals from GAN-generated images, depth cues that a flat surface can’t replicate.

The user takes a selfie. That’s it.

Single-image passive liveness extends this further. No video capture, no streaming, no waiting. One image, analysed in milliseconds, produces a liveness determination. That’s why it’s rapidly becoming the default for high-volume onboarding environments, particularly in markets like India, where bandwidth variability in Tier 2 and Tier 3 towns makes video-based flows unreliable.

A single image requires as little as 100–150 KB. A passive video stream requires 2 MB. An active liveness session requires 2–3 MB and a stable connection held for up to a minute. At the scale of hundreds of thousands of daily applications, that difference shows up in onboarding completion rates, support costs, and manual review queues.
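To make the payload difference concrete, here is a rough back-of-the-envelope sketch. The payload sizes are the upper figures quoted above; the 256 kbps uplink and the 100,000 sessions per day are assumptions picked purely for illustration, and real transfers add protocol overhead and retries.

```python
# Upper-bound payload sizes from the text, in kilobytes.
PAYLOADS_KB = {
    "single_image": 150,
    "passive_video": 2000,
    "active_session": 3000,
}


def upload_seconds(payload_kb: float, uplink_kbps: float) -> float:
    """Ideal time to upload payload_kb kilobytes over an uplink of uplink_kbps kilobits/s."""
    return payload_kb * 8 / uplink_kbps


def daily_gigabytes(payload_kb: float, sessions_per_day: int) -> float:
    """Total daily upload volume in decimal gigabytes (1 GB = 1,000,000 KB)."""
    return payload_kb * sessions_per_day / 1_000_000


if __name__ == "__main__":
    uplink_kbps = 256          # assumed weak mobile uplink
    sessions_per_day = 100_000  # assumed daily volume
    for name, size_kb in PAYLOADS_KB.items():
        print(
            f"{name}: {upload_seconds(size_kb, uplink_kbps):.1f} s per upload, "
            f"{daily_gigabytes(size_kb, sessions_per_day):.0f} GB per day"
        )
```

Under these assumptions a single image uploads in a few seconds, while a video payload needs a connection that stays up for a minute or more, which is where weak networks tend to fail.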

Can Passive Liveness Be Spoofed?

Any security system can theoretically be targeted. The key question is whether the system can withstand evolving attack vectors.

Low-quality passive systems may fail against:

- High-resolution screen replays

- Advanced GAN-generated faces

- Partial facial overlays

However, high-quality single-image passive systems trained on diverse spoof datasets and reinforced with multi-signal detection significantly reduce false acceptance rates.

The goal is not only to detect spoof attempts but also to maintain extremely low false rejection rates for genuine users.

Single Image Passive Liveness Detection

A more advanced technique requires the capture of a single image, which is analyzed for an array of complex characteristics to determine if a live person is present.

Single image passive liveness detection is gaining traction among regulators and service providers alike, as it makes user authentication and onboarding very simple while ensuring that services are protected against any spoofing attempts. In the table below, we’ve compared the liveness detection techniques on all attributes.

What Single-Image Passive Liveness Actually Analyses

When HyperVerge’s liveness model processes a single selfie, it isn’t running one check. It’s running several in parallel, looking for the combination of signals that distinguishes a real human from a surface, a screen, or a generated image.

Micro-texture analysis uses convolutional neural networks (CNNs) trained on large-scale spoof datasets, including CelebDF, FaceForensics++, and real-world deployment data, to classify skin texture at the pixel level. Spoofed images carry characteristic frequency-domain signatures that live captures don’t, even when they look identical to the naked eye.

Specular reflection and moiré detection identifies the interference patterns and unnatural light reflections introduced when a camera photographs a screen or a printed surface. These signals are consistent across attack types and robust even against high-resolution attempts.
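A toy example helps show why periodic interference is detectable. The sketch below is not HyperVerge's method: it takes a single hypothetical row of pixel intensities, computes a plain discrete Fourier transform, and checks whether one frequency bin dominates the spectrum, which is what a regular moiré-like ripple produces. The synthetic data, the function names, and the use of a 1D row instead of a full 2D image are all simplifying assumptions.

```python
import cmath
import math
import random


def spectrum(row):
    """Magnitudes of DFT bins 1..n/2 for one row of pixel intensities (mean removed)."""
    n = len(row)
    mean = sum(row) / n
    centered = [value - mean for value in row]
    magnitudes = []
    for k in range(1, n // 2 + 1):
        acc = sum(
            centered[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        magnitudes.append(abs(acc) / n)
    return magnitudes


def peak_to_mean(row):
    """Ratio of the strongest bin to the average bin; high values suggest a periodic pattern."""
    mags = spectrum(row)
    mean_mag = sum(mags) / len(mags)
    return max(mags) / mean_mag if mean_mag > 0 else 0.0


def dominant_bin(row):
    """Index (starting at 1) of the strongest frequency bin."""
    mags = spectrum(row)
    return mags.index(max(mags)) + 1


# Synthetic data: irregular texture, and the same texture with a ripple
# of 8 cycles across the row added on top (a stand-in for moiré).
rng = random.Random(7)
skin_like = [128 + rng.uniform(-4, 4) for _ in range(64)]
moire_like = [
    value + 20 * math.sin(2 * math.pi * 8 * t / 64)
    for t, value in enumerate(skin_like)
]
```

In this toy setup, `dominant_bin(moire_like)` lands on the ripple's frequency and `peak_to_mean(moire_like)` is far larger than for the irregular row. Production systems work on 2D images with learned models, but the underlying intuition is the same: screens and prints add structure that natural skin texture lacks.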

Depth and shading analysis detects inconsistencies between the 3D facial geometry implied by shading cues and the flat plane of a 2D surface. Software-only systems extract meaningful depth signals from a single RGB image using monocular depth estimation: no specialised hardware required.

Frequency-domain modelling identifies the synthetic signal patterns introduced by GAN and diffusion model image generation. Real human faces, captured on a camera sensor, have a different spectral signature than AI-generated images. This is one of the most reliable signals for catching advanced deepfake attempts.

Injection attack detection operates at the session layer. By validating camera feed integrity and cross-referencing device attestation and session metadata, the system identifies attempts to substitute a synthetic stream for a real camera input: the attack type that bypasses image-layer analysis entirely.

The combined output of these signals feeds into a final classification model, producing a liveness confidence score, a binary pass/fail, and for audit and compliance purposes, frame-level flags documenting which signals were detected.
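The shape of that final step can be sketched as follows. The text describes a classification model; the weighted average below is a deliberately simple stand-in for it, and the signal names, weights, thresholds, and result fields are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical per-signal weights; a real system would learn these.
WEIGHTS: Dict[str, float] = {
    "texture": 1.5,
    "reflection": 1.2,
    "depth": 1.0,
    "frequency": 1.3,
    "injection": 2.0,
}


@dataclass(frozen=True)
class LivenessResult:
    score: float      # fused liveness confidence in [0, 1]
    passed: bool      # binary decision
    flags: List[str]  # signals that individually looked suspicious


def fuse(signal_scores: Dict[str, float], threshold: float = 0.5,
         flag_below: float = 0.3) -> LivenessResult:
    """Combine per-signal liveness scores (1.0 = looks live) into one decision."""
    missing = set(WEIGHTS) - set(signal_scores)
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    total_weight = sum(WEIGHTS.values())
    score = sum(WEIGHTS[name] * signal_scores[name] for name in WEIGHTS) / total_weight
    # Record which individual signals fell below the floor, for audit purposes.
    flags = sorted(name for name in WEIGHTS if signal_scores[name] < flag_below)
    # A single strongly suspicious signal vetoes the pass, even if the average is high.
    return LivenessResult(score=score, passed=score >= threshold and not flags, flags=flags)
```

Letting one flagged signal veto the result reflects the layered-defence idea: an attack that fools four checks but trips the fifth should still fail.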

| Attribute | Single image passive liveness | Video based passive liveness | Active liveness |
|---|---|---|---|
| End user effort | Zero effort as the image captured for face recognition is used to detect liveness. ⭐⭐⭐⭐⭐ | Minimal effort as the user has to hold the camera for a period of time while the video is captured. ⭐⭐⭐ | High effort as the user has to respond to challenges in order to prove their live presence. ⭐ |
| Drop-off rates | <1% drop-off is observed, as it’s a simple selfie capture. ⭐⭐⭐⭐⭐ | 3 to 10% drop-off has been observed in typical industry solutions, as users have to hold the camera still for 5-15 seconds. ⭐⭐ | As high as 50% drop-off rates have been reported by companies using active liveness. Lack of comprehension and cognitive load on users lead to high abandonment. ❌ |
| User journey time | No latency is added to the user journey, as the same selfie captured for face recognition is used. ⭐⭐⭐⭐⭐ | ~30 seconds of latency is added, including time to capture the video and backend processing of the video. ⭐⭐⭐ | Varies with the gesture or action used. Typically in the range of 20 seconds to 1 minute. ⭐⭐ |
| Network requirements | A single image has to be transferred over the network for evaluation. Images as small as 100 KB suffice. ⭐⭐⭐⭐⭐ | Video transmission consumes a lot of bandwidth depending on the length of the video. We have observed an average of 2 MB data streams. ⭐⭐ | Varies with the evaluation technique. Typically, videos of the gesture are transferred, with sizes around 2-3 MB. ⭐⭐ |
| Integration effort | Requires the integration of a single API to check liveness. ⭐⭐⭐⭐⭐ | Requires a frontend to capture video separately, and stream processing to transmit videos. ⭐⭐ | In most cases, it requires a frontend for explaining the challenge to the users, capturing the challenge video, and stream processing to transmit the video. ⭐ |

For organisations serving customers in semi-urban and rural India, the choice is especially consequential. A liveness system that requires a stable 5-second video stream will fail a meaningful percentage of your user base just because their network won’t cooperate. That’s a business problem disguised as a technical one.

Why Network Efficiency Matters in India

Bandwidth variability remains a real-world constraint in semi-urban and rural India. Video-based liveness systems typically require 2–3 MB of data transmission per session and stable connectivity for 5–15 seconds.

In contrast:

- Single-image passive liveness requires as little as 100–150 KB

- No continuous streaming is required

- Works reliably even in high packet-loss conditions

For digital lenders, banks, gaming platforms, and fintechs serving Tier 2 and Tier 3 markets, minimizing network dependency directly reduces onboarding failures.

Liveness Check in Customer Onboarding

Companies involved in providing financial services such as lending, insurance, investments, wealth management, payments, and others must ensure they perform proper KYC of users before allowing access to services. In addition to it being a compliance requirement, proper identity checks ensure that the company is protected from fraudsters and scammers getting access to its services.

However, subjecting customers to additional verification steps adds friction to the onboarding process and leads to high drop-off rates.

The ideal onboarding workflow verifies the user’s identity and liveness with high confidence while adding little to no friction in the onboarding process.

An ideal passive liveness solution for customer onboarding should have most, if not all of the following characteristics.

Single Capture for Face Liveness Detection

To verify a person’s identity, the user is asked to capture a selfie, which is matched against the photo on their identity card. HyperVerge’s single image liveness check uses this same selfie for liveness detection.

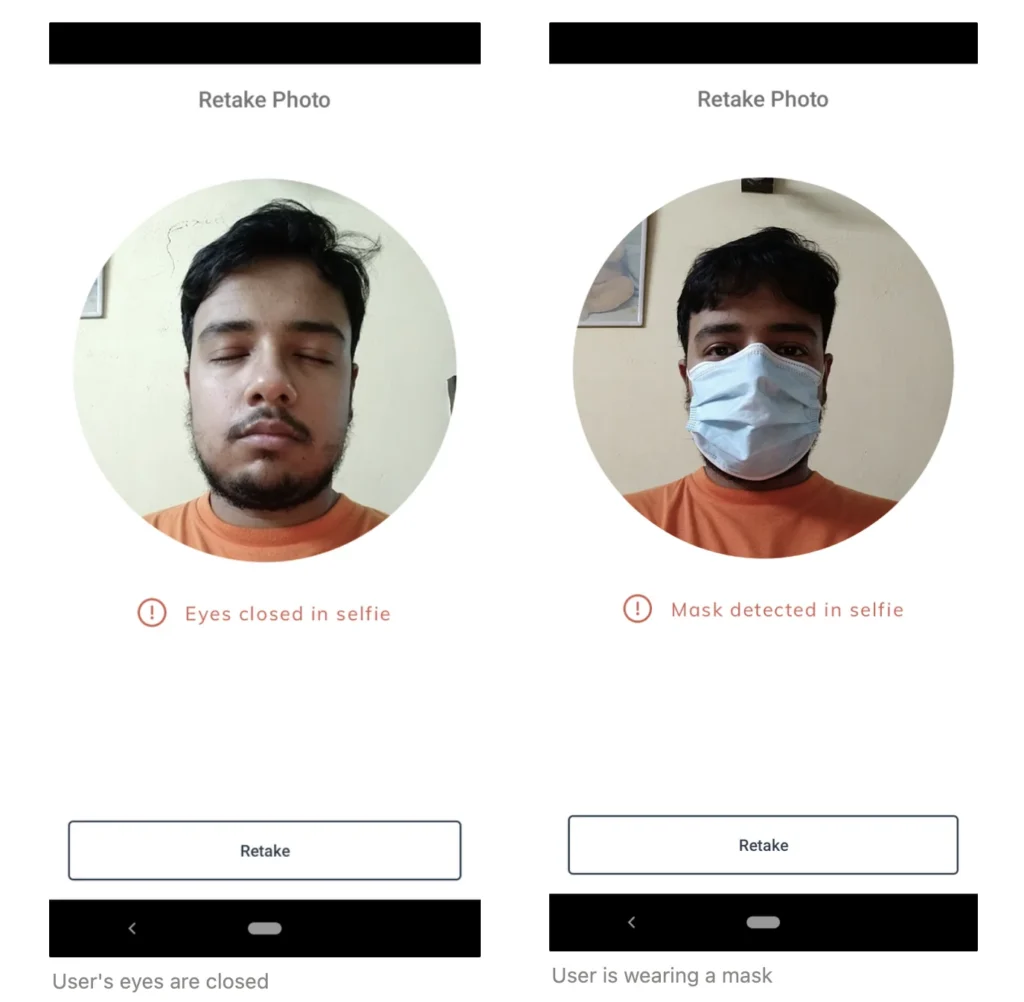

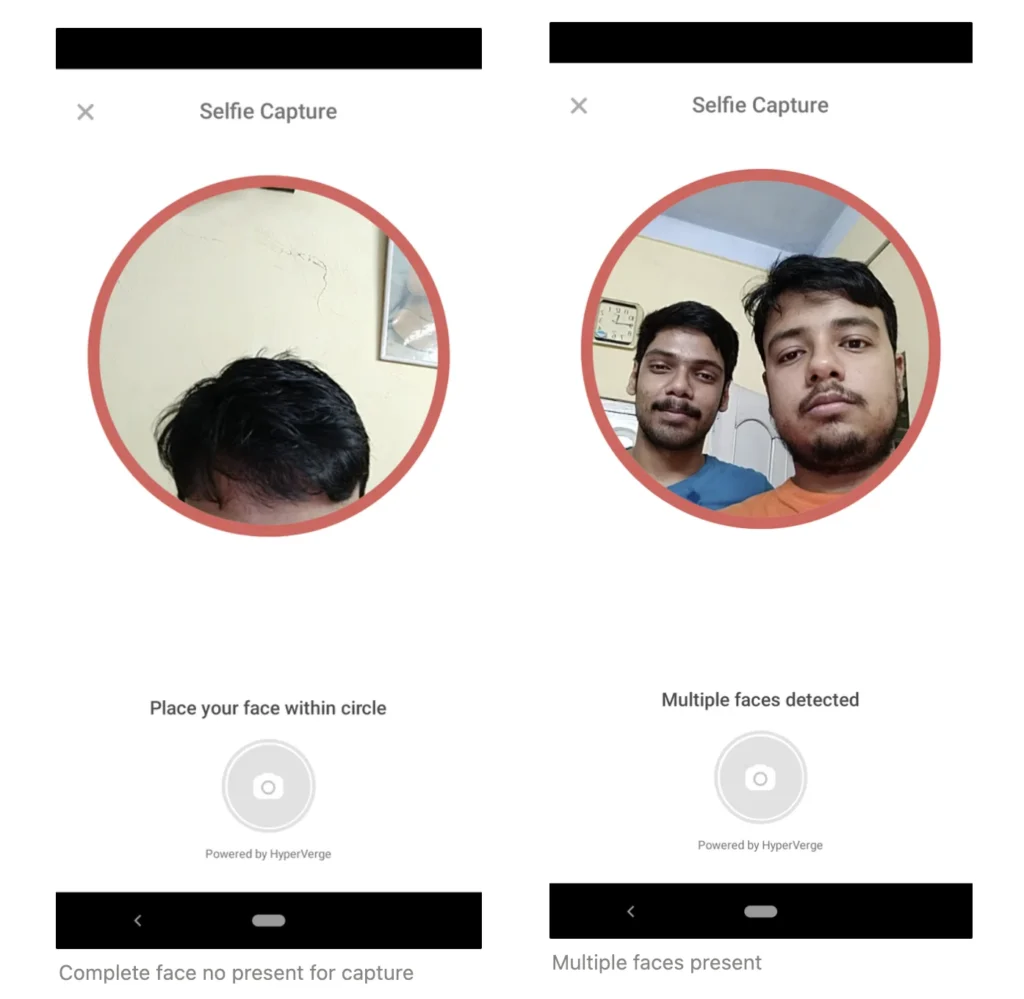

Immediate Feedback and Retries

If a user captures a non-compliant selfie for any reason, the workflow should give immediate feedback and ask for a re-capture. Prompt, specific feedback keeps the number of retries low and avoids manual verification of images, which would otherwise lengthen the turn-around time for completing onboarding.

HyperVerge’s single-image liveness check comes with an array of compliance checks that give the user immediate, contextual feedback so that they can capture a correct image on the next attempt.

Liveness Check Benchmarks

Active liveness systems traditionally have performed at very high accuracy levels due to a fraudster having to pass difficult challenges. For instance, a fraudster with a digital photograph of the victim would find it very difficult to pass a challenge where the user is required to blink.

Any passive liveness system that replaces an active liveness system must provide on-par performance with traditional active liveness.

Passive liveness systems, while providing numerous benefits, must not compromise on performance and be capable of stopping all typical spoof attacks.

Additionally, passive liveness systems should have the lowest possible false rejection rates, to ensure genuine customers do not face any friction in the onboarding process.

HyperVerge’s single-image passive liveness system has been rigorously tested on industry-standard benchmarks and has provided ~99.8% accurate predictions in live environments.

| Liveness Detection Benchmark | Rate (%) |
|---|---|
| Live detected as live (True Positive Rate) | 99.2 |
| Live detected as non-live (False Rejection Rate) | 0.8 |
| Non-live detected as live (False Acceptance Rate) | 0.2 |
| Non-live detected as non-live (True Negative Rate) | 99.8 |
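For readers who want to reproduce these four rates from their own test runs, the sketch below derives them from raw outcome counts. The function name and the example counts are illustrative assumptions. Each pair of rates is computed over its own population: live samples for the first two, spoof samples for the last two. In ISO/IEC 30107-3 terminology, the false rejection rate corresponds to BPCER and the false acceptance rate to APCER.

```python
from typing import Dict


def pad_rates(live_as_live: int, live_as_spoof: int,
              spoof_as_live: int, spoof_as_spoof: int) -> Dict[str, float]:
    """Return the four benchmark rates, in percent, from raw outcome counts."""
    live_total = live_as_live + live_as_spoof
    spoof_total = spoof_as_live + spoof_as_spoof
    if live_total == 0 or spoof_total == 0:
        raise ValueError("need at least one live and one spoof sample")
    return {
        # Rates over genuine (live) presentations.
        "true_positive_rate": 100 * live_as_live / live_total,
        "false_rejection_rate": 100 * live_as_spoof / live_total,
        # Rates over attack (spoof) presentations.
        "false_acceptance_rate": 100 * spoof_as_live / spoof_total,
        "true_negative_rate": 100 * spoof_as_spoof / spoof_total,
    }


if __name__ == "__main__":
    # Illustrative counts: 1,000 live and 1,000 spoof test samples.
    for name, value in pad_rates(992, 8, 2, 998).items():
        print(f"{name}: {value:.1f}%")
```

Because the denominators differ, the two error rates cannot be added together or compared without knowing how many live and spoof samples were tested.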

What Scale Does to These Numbers

At 1 million verifications per month:

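- A 0.8% False Rejection Rate means up to approximately 8,000 genuine users experience unnecessary friction every month, assuming nearly all traffic is genuine

- A 0.2% False Acceptance Rate means roughly 2 in every 1,000 spoof attempts pass through; the absolute number depends on how much of the traffic is fraudulent, with approximately 2,000 as the ceiling if every verification were an attack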

Neither number is abstract at that volume. The false rejections show up in support ticket queues, in churn, in onboarding completion rates. The false acceptances show up in fraud losses, compliance audits, and if the trend continues, regulatory scrutiny.
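The arithmetic is easy to rerun for your own volumes. In the sketch below, the 1% spoof share is an assumption made up for illustration, not a measured figure; the error rates are the ones from the benchmark table. The key point is that the False Rejection Rate applies to genuine traffic and the False Acceptance Rate applies to attack traffic.

```python
from typing import Dict


def monthly_impact(verifications: int, spoof_share: float,
                   frr: float, far: float) -> Dict[str, float]:
    """Expected monthly false rejections and false acceptances.

    spoof_share, frr and far are fractions (0.01 means 1%).
    """
    if not 0 <= spoof_share <= 1:
        raise ValueError("spoof_share must be between 0 and 1")
    spoof_attempts = verifications * spoof_share
    genuine_attempts = verifications - spoof_attempts
    return {
        "genuine_attempts": genuine_attempts,
        "spoof_attempts": spoof_attempts,
        "false_rejections": genuine_attempts * frr,
        "false_acceptances": spoof_attempts * far,
    }


if __name__ == "__main__":
    # 1 million verifications, assumed 1% spoof attempts, 0.8% FRR, 0.2% FAR.
    impact = monthly_impact(1_000_000, spoof_share=0.01, frr=0.008, far=0.002)
    for name, value in impact.items():
        print(f"{name}: {value:,.0f}")
```

Under that assumed 1% spoof share, about 7,920 genuine users would be wrongly rejected and about 20 spoof attempts wrongly accepted per month. Changing the spoof share shifts the second number proportionally.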

The goal is a system that minimises both simultaneously. That’s the engineering problem HyperVerge’s single-image passive liveness system is built to solve.

Benchmark Context & Testing Methodology

HyperVerge’s single-image passive liveness benchmarks are evaluated across:

- Large-scale real-world deployment environments

- Diverse lighting conditions

- Multiple device types

- Structured spoof datasets including print, replay, and mask attacks

The testing methodology is aligned with industry-standard PAD evaluation approaches of the kind defined in ISO/IEC 30107-3.

Results demonstrate:

- High true positive detection rates

- Extremely low false acceptance rates

- Minimal friction for genuine users

Additionally, our liveness detection systems are hardened against popular techniques used by fraudsters, such as digital photographs, printed images, and printed masks, to ensure maximum protection.

We firmly believe single-image liveness is the best way forward for organizations looking to scale their business. The system provides:

- very low drop-off rates

- serviceability in areas with low network coverage

- low manual operations costs

- very minimal turnaround time

All this comes at no compromise in protection against identity theft and fraud!

Implementation Guide: API Integration

The implementation is simpler than the underlying technology suggests. That’s intentional.

Liveness detection should disappear into your existing verification flow, not create a new one. The goal is that your user takes a selfie, your system gets a liveness determination, and the onboarding continues with no additional step visible to the user, and minimal additional complexity for your engineering team.

HyperVerge’s single-image liveness API is designed around this principle. One API call, added to the face verification flow you already have.

- SDK Initialisation

└── Initialise HyperVerge SDK with your App ID and App Key

- Session Creation

└── Create a new verification session

└── Receive session token for request authentication

- Image Capture (Client-Side)

└── User captures selfie via your existing UI

└── SDK enforces compliance checks (lighting, framing, blur)

└── Immediate contextual feedback triggers re-capture if needed

- Liveness Check (API Call)

└── POST /v1/liveness with captured image

└── Returns liveness score, pass/fail, and frame-level flags

- Result Handling

└── PASS → continue to face match / downstream KYC steps

└── FAIL → surface a user-friendly retry prompt with guidance

└── Log confidence score and session metadata

- Compliance Logging

└── Store session ID, timestamp, liveness score, device metadata

└── Required for RBI Video KYC audit trails
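The liveness check and result handling steps above might look roughly like this on the server side. Treat it as a sketch under stated assumptions rather than HyperVerge's actual API: only the `POST /v1/liveness` path comes from the flow above. The base URL, the bearer-token header, the raw JPEG body, and the response field names (`passed`, `score`, `flags`) are all hypothetical placeholders; consult the official API documentation for the real contract.

```python
import json
import urllib.request

# Placeholder host; the real base URL is not given in this guide.
BASE_URL = "https://api.example.com"


def build_liveness_request(image_bytes: bytes, session_token: str) -> urllib.request.Request:
    """Build (but do not send) the liveness call for a captured selfie."""
    return urllib.request.Request(
        url=f"{BASE_URL}/v1/liveness",
        data=image_bytes,
        method="POST",
        headers={
            # Hypothetical auth scheme using the session token from session creation.
            "Authorization": f"Bearer {session_token}",
            "Content-Type": "image/jpeg",
        },
    )


def next_step(response_body: str) -> str:
    """Map a liveness response (hypothetical JSON shape) to the next onboarding action."""
    result = json.loads(response_body)
    if result.get("passed") is True:
        return "continue_to_face_match"
    # Anything other than an explicit pass is treated as a failure (fail closed).
    return "prompt_retry"


# Sending would use the standard library call below; it is left commented out
# because the host above is only a placeholder.
# with urllib.request.urlopen(build_liveness_request(img, token), timeout=10) as resp:
#     action = next_step(resp.read().decode("utf-8"))
```

Failing closed on a missing or malformed `passed` field is a deliberate choice here: an ambiguous response should never let a session through to the downstream KYC steps.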

RBI & Regulatory Compliance Checklist

Compliance frameworks exist for a reason. In the context of Video KYC, they’re the baseline assurance that the person receiving a loan, opening an account, or buying insurance is who they claim to be. Liveness detection is a core part of how that assurance is established.

Here’s what India’s regulatory framework actually requires and what it means in practice.

RBI Video KYC Circular

The Reserve Bank of India’s guidelines for Video-based Customer Identification Process (V-CIP) establish that:

[ ] The video session must be live and interactive, not pre-recorded

[ ] The system must include liveness checks to confirm the customer is physically present in real time

[ ] A random element (a question, an instruction) may be used to confirm live presence (passive liveness with session integrity validation is increasingly accepted as equivalent)

[ ] The full session must be recorded and stored for audit

[ ] Geolocation and device metadata must be captured and logged per session

UIDAI Aadhaar-Based Video KYC

For Aadhaar-based Video KYC, liveness detection must satisfy UIDAI’s presentation attack detection requirements: resistance to print attacks and replay attacks at minimum. Passive liveness systems tested against ISO/IEC 30107-3 evaluation methodology meet this threshold.

Is Passive Liveness Alone RBI Compliant?

Yes, with conditions:

[ ] Liveness is performed during a live, real-time session (not on pre-recorded media)

[ ] The session includes at least one real-time interaction element

[ ] Liveness confidence scores are logged per session, with timestamp and session ID

[ ] The system demonstrates resistance to print, replay, and deepfake attacks

[ ] Device metadata and geolocation are captured per RBI data retention requirements
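The logging items in this checklist can be captured in one structured record per session. The sketch below is an assumption about what such a record could look like: the field names, the JSON-lines output, and the example values are illustrative, and the retention period and storage controls must follow your own compliance team's reading of the RBI requirements.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class LivenessAuditRecord:
    session_id: str
    timestamp_utc: str      # ISO 8601, always in UTC
    liveness_score: float
    passed: bool
    device_metadata: dict   # e.g. model and OS version (hypothetical keys)
    geolocation: dict       # e.g. latitude and longitude (hypothetical keys)


def make_audit_record(session_id: str, liveness_score: float, passed: bool,
                      device_metadata: dict, geolocation: dict,
                      now: Optional[datetime] = None) -> LivenessAuditRecord:
    """Build an audit record, stamping it with the current UTC time by default."""
    moment = now if now is not None else datetime.now(timezone.utc)
    return LivenessAuditRecord(
        session_id=session_id,
        timestamp_utc=moment.astimezone(timezone.utc).isoformat(),
        liveness_score=liveness_score,
        passed=passed,
        device_metadata=dict(device_metadata),
        geolocation=dict(geolocation),
    )


def to_json_line(record: LivenessAuditRecord) -> str:
    """Serialise one record as a single JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing one self-contained line per session keeps the trail easy to append to, search, and hand over during an audit.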

Compliance Framework Summary

| Framework | What It Requires | HyperVerge Coverage |

|---|---|---|

| ISO/IEC 30107-3 | Presentation attack detection testing standard | Methodology-aligned evaluation |

| RBI V-CIP Circular | Live liveness check in Video KYC sessions | Supported |

| DPDP Act (India) | Lawful biometric processing with explicit consent | Consent capture configurable |

| AML/KYC obligations | Prevent impersonation and identity fraud | Core use case |

| GDPR | Biometric data classified as sensitive personal data | Applicable for global operators |

Regulatory requirements aren’t static. The frameworks above will continue to evolve as synthetic identity fraud grows. Building your liveness implementation on a platform that actively tracks and responds to these changes, rather than treating compliance as a checkbox, is the more durable approach.

HyperVerge powers identity verification for Reliance Jio, Vodafone, Aditya Birla Capital, L&T Financial, ICICI Securities, Angel Broking, Groww, Razorpay, Swiggy, and hundreds of other organisations across lending, securities, payments, telecom, and e-commerce. Millions of users are onboarded every month with minimal friction and without compromising protection against fraud.

Ready to implement liveness detection? Check out our liveness detection solution, or reach out to us here to speak to one of our solution experts.